Key takeaways:

- AI is expanding the attack surface inside workflows, not just infrastructure, making traditional security visibility incomplete.

- Claude Mythos highlights a shift where risk comes from model behavior, prompts, and integrations, not just system vulnerabilities.

- Most enterprise AI risks do not start as breaches, they begin as small gaps in prompts, access control, or output validation.

- Faster AI adoption without governance is increasing both the likelihood and cost of security incidents.

- Traditional cybersecurity frameworks struggle because AI systems are dynamic and influenced by real-time inputs.

- Secure AI requires system-level controls across architecture, governance, monitoring, and compliance, not isolated security tools.

Enterprise AI adoption is no longer happening in controlled pockets. It is being wired directly into production systems, sometimes faster than security teams can fully map the risks. What started as pilot experimentation has moved into customer-facing workflows, automated decisions, and backend orchestration layers that touch sensitive data every minute of the day.

Security teams are beginning to see the cost of that speed. According to the IBM Cost of a Data Breach Report, organizations that extensively use AI and automation in their security programs reduce breach costs by $1.9 million on average. At the same time, the report highlights that AI adoption is outpacing governance, making ungoverned AI systems more likely to be breached and more costly when they are.

Most enterprises today are integrating generative models through APIs, orchestration layers, and middleware connectors that were originally built for predictable systems. AI systems are not predictable in the same way. They accept dynamic inputs, interact with external services, and often rely on third-party model ecosystems that sit outside traditional trust boundaries. Each integration point adds another surface where data can move in ways security teams did not originally design for.

The conversations emerging around Claude Mythos reflect this exact shift. Not as theory, and not as speculation, but as a recognition that modern AI deployments behave differently from legacy software systems. Prompt inputs can influence system logic. Model dependencies introduce supply chain risk. External inference endpoints expand the perimeter far beyond internal infrastructure.

In many transformation programs, AI capabilities are added while governance frameworks are still being drafted. That sequence creates blind spots. Sensitive prompts pass through unsecured channels. Identity tokens get reused across services. Logs capture model interactions without clear classification boundaries. None of these failures happen in isolation. They compound quietly until something breaks.

Claude Mythos and cybersecurity risk are no longer theoretical concerns. They represent operational vulnerabilities that enterprises must actively manage.

Reduce AI Security Risk by 4X

Identify hidden gaps across prompts, APIs, and model workflows before they turn into real exposure.

What Is Claude Mythos and Why It Matters for Enterprise Security

Claude Mythos began surfacing in enterprise security conversations as teams noticed failures that did not match traditional cyber security measures for businesses. Systems were not crashing or getting overtly breached. Instead, models were responding to crafted prompts, exposing restricted context, or altering workflow behavior through manipulated inputs passed via APIs and orchestration layers.

What triggered concern was the shift from deterministic software to probabilistic systems. Traditional applications execute fixed logic paths. AI systems interpret dynamic inputs, often sourced from external users, datasets, or integrated services. When validation controls, role permissions, or prompt filtering layers are incomplete, models can produce outputs that technically function but still violate security intent.

This is where Claude Mythos diverges from conventional cybersecurity threats. Legacy defenses focus on perimeter controls and known exploit signatures. AI-driven environments introduce behavioral risks across inference endpoints, model pipelines, and third-party dependencies. Each integration point expands the attack surface in ways traditional monitoring tools were not designed to detect.

At its core, Claude Mythos reflects a simple reality. AI systems behave unpredictably when guardrails are weak. In enterprise environments, that unpredictability does not remain isolated. It propagates through connected systems, turning small configuration gaps into measurable security exposure.

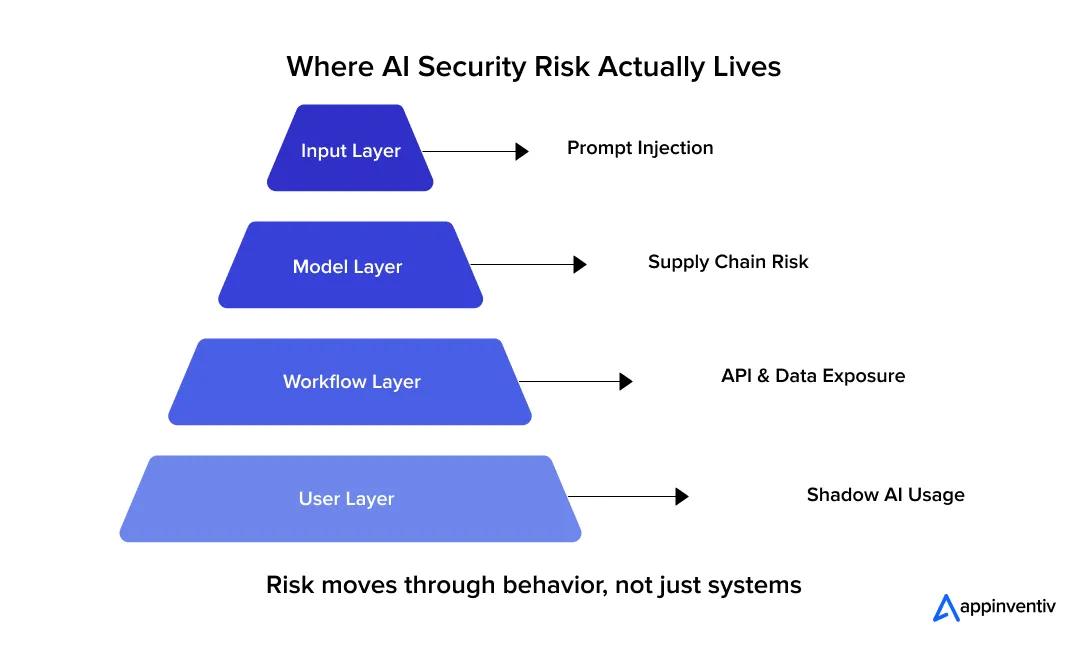

How Claude Mythos Increases Enterprise Attack Surface Risk

Claude Mythos reflects a shift in where enterprise risk originates. Traditional systems expand the attack surface through infrastructure growth such as new devices, users, or network endpoints. AI systems expand it through behavior. Every prompt, model dependency, and integration introduces a new pathway that attackers can influence.

Most enterprise AI integrations are deeply connected to APIs, internal knowledge bases, and workflow engines. That connectivity increases exposure across identity systems, data pipelines, and external services. The risk is rarely visible at the network edge. It often appears inside the logic layer where models interpret inputs and generate responses.

Prompt Injection Vulnerabilities

Prompt injection targets the input layer rather than the code itself. Attackers craft instructions that override intended logic or bypass restrictions.

This creates exposure when models are connected to internal systems or sensitive datasets.

Key risks include:

- Manipulated inputs altering workflow decisions

- Data exfiltration from connected knowledge bases

- Unauthorized system behavior triggered through hidden instructions

Even when infrastructure appears secure, poorly filtered prompts can cause models to expose restricted information without triggering conventional alerts.

Model Supply Chain Risks

Enterprise AI systems depend on third-party models, pre-trained components, and shared datasets. Each dependency introduces trust boundaries that may not be fully verified.

Risks commonly appear through:

- Third-party model dependency without validation controls

- Pre-trained model tampering before deployment

- Dataset poisoning that alters model behavior gradually

Unlike traditional vulnerabilities, these risks do not always cause visible system failure. They influence model outputs under specific conditions, making detection slower and remediation more complex.

Shadow AI and Unauthorized Usage

Shadow AI occurs when employees use external AI tools outside approved enterprise infrastructure. This risk is growing as generative tools become easier to access.

Typical exposure paths include:

- Employees submitting sensitive business data to public models

- Unapproved integrations linking internal workflows to external tools

- Data leakage through prompts stored outside enterprise control

Because these activities bypass monitoring systems, organizations often discover the risk only after sensitive information has already been exposed. That’s why organizations need to implement generative AI security measures.

Key Cybersecurity Risks Enterprises Are Already Seeing From Claude Mythos

The risks linked to Claude Mythos are not abstract. They show up inside working systems, often in places teams did not originally treat as security boundaries. Most incidents do not begin with visible compromise. They begin with small behavior gaps, an unexpected output, a reused token, or a prompt that exposes more context than intended. Over time, those gaps turn into operational risk.

What makes these risks harder to manage is that they sit inside application logic, not just infrastructure. Firewalls and endpoint tools still matter, but many failures now originate inside model workflows, API interactions, and automated decision layers.

Data Leakage Through AI Interfaces

Data leakage through AI systems rarely looks like a traditional breach. There is no obvious intrusion. Instead, sensitive information moves through prompts, logs, or model responses in ways teams did not fully anticipate.

Many enterprise deployments rely on external inference services or shared infrastructure. That creates situations where:

- Sensitive prompts are stored outside enterprise-controlled environments

- Logs capture confidential data that was never meant to persist

- Cross-tenant exposure becomes possible in shared model environments

A typical example is an internal assistant connected to a knowledge base. Under normal use, it retrieves only approved content. But if prompt boundaries are weak, carefully structured queries can force the system to reveal internal documentation fragments that should never leave restricted scopes. The system continues functioning, which is why these leaks often go unnoticed at first.

Model Hallucinations and False Outputs

Hallucinations are often dismissed as accuracy problems. In enterprise environments, they become operational risks.

When models sit inside automated pipelines, their outputs do not stay theoretical. They trigger actions. A flawed recommendation can pass into a workflow engine, update records, or generate reports that teams assume are correct.

Real-world impact usually shows up as:

- Incorrect automated decisions affecting downstream systems

- False outputs entering financial or compliance workflows

- Inconsistent data appearing in customer-facing responses

The problem gets worse when there is no validation layer between model output and system execution. In regulated industries, even a small output error can create reporting discrepancies that surface weeks later during audits.

AI-Driven Social Engineering Risks

Social engineering has always relied on trust. AI simply makes it faster and more convincing.

Attackers no longer need strong technical skills to craft believable messages. They can generate emails that match company tone, create voice recordings that sound familiar, or simulate video instructions that appear legitimate.

Enterprises are already seeing:

- AI-generated phishing emails tailored to specific teams

- Deepfake voice messages impersonating executives

- Automated conversations that adapt in real time to responses

These attacks rarely target infrastructure first. They target people and once trust is broken, attackers gain access through legitimate channels, making detection harder and response slower.

Why Traditional Cybersecurity Frameworks Fall Short for AI Systems

Traditional cybersecurity frameworks were designed for predictable infrastructure such as servers, endpoints, and network traffic. AI systems behave differently. They process dynamic inputs, interact with external services, and generate outputs that influence downstream workflows. A request may appear legitimate at the network level but still trigger unintended model behavior.

This mismatch creates blind spots. Legacy controls protect infrastructure, but AI risk often lives inside model interactions, prompt handling, and automated decisions.

Where Traditional Controls Struggle in AI Environments

| Traditional Security Layer | What It Was Designed For | Where It Struggles With AI Systems |

|---|---|---|

| Legacy Firewalls | Blocking unauthorized network traffic | Cannot detect malicious prompt logic inside valid requests |

| Static Threat Detection | Identifying known attack signatures | Fails to detect prompt injection or data inference risks |

| Traditional SIEM Systems | Correlating structured logs and alerts | Misses behavioral anomalies inside model workflows |

| Access Controls | Managing user permissions | Struggles with service tokens and model-level permissions |

The Core Limitation

AI systems are dynamic systems, not static infrastructure. Their behavior depends on runtime inputs, model context, and external integrations. That means risks can emerge even when network traffic looks normal and authentication succeeds.

Without setting AI guardrails that control prompt validation, behavior monitoring, and model-aware logging, traditional frameworks may confirm that systems are running securely while missing the actual exposure inside AI-driven workflows.

How to Design Secure AI Architectures That Contain Claude Mythos Risks

Most organizations do not run into AI security problems because they lack tools. They run into them because models get connected into production systems before the control layers are fully defined. Once APIs, data stores, and workflow engines are linked, small configuration shortcuts tend to stay in place longer than expected.

Reducing Claude Mythos risk is less about adding security products and more about shaping how the system is wired together. In practice, secure AI environments are layered systems. Data enters through controlled interfaces, models run inside bounded execution paths, and outputs are inspected before they influence downstream actions. When one layer weakens, another should still contain the impact.

Core Architecture Controls at a Glance

| Architecture Layer | Why It Matters | Typical Controls Used |

|---|---|---|

| Model Lifecycle | Tracks behavior changes over time | Versioning, drift alerts, dataset validation |

| Prompt Layer | Controls how instructions enter the model | Filtering, context scoping, instruction isolation |

| Identity Layer | Limits system-level access | RBAC, token rotation, service isolation |

| Output Layer | Prevents unsafe responses from executing | Response checks, command validation |

| Workflow Layer | Contains lateral movement | Zero Trust segmentation |

Secure Model Lifecycle Management

Models change more often than most traditional software components. Fine-tuning LLMs, retraining cycles, and dataset refreshes can alter behavior in ways that are hard to detect without visibility controls.

Teams that manage this well usually implement:

- Model version tracking tied to deployment artifacts and audit logs

- Drift monitoring that flags unexpected shifts in output behavior

- Controlled retraining pipelines that scan datasets before ingestion

Without lifecycle discipline, it becomes difficult to answer a basic incident question: which model version generated this output, and what data influenced it.

Prompt Security and Input Validation Layers

Prompt handling sits closer to the risk boundary than most teams initially expect. Every incoming request carries instructions that influence how the model behaves.

In production systems, prompt security usually involves:

- Input filtering to detect hidden or chained instructions

- Context scoping to restrict access to sensitive sources

- Instruction separation between user input and system directives

This layer typically lives between the application interface and the inference engine. If it is skipped or simplified, prompt injection becomes easier to execute without triggering infrastructure alarms.

Identity-Aware Model Access Control

AI workflows often rely on service accounts that connect models to storage, APIs, and internal platforms. Those identities tend to accumulate permissions over time.

Risk usually builds through small shortcuts, such as:

- Broad service permissions left in place after testing

- Reusable tokens shared across multiple environments

- Weak authentication layers protecting inference endpoints

Strong identity isolation helps contain failures when credentials are exposed or misused.

Output Monitoring and Response Validation

Many systems validate inputs but assume outputs are safe. That assumption creates risk when model responses trigger automated actions.

In mature environments, output controls typically include:

- Response inspection rules to detect restricted data exposure

- Execution safeguards that prevent unsafe commands from running

- Logging pipelines that capture output behavior for audit review

These checks add processing overhead, but they often prevent the type of silent failures that surface weeks later during audits.

Zero Trust Segmentation for AI Workflows

AI systems cross multiple trust boundaries. Models retrieve data, interact with APIs, and trigger downstream processes. Assuming internal systems are trustworthy is no longer sufficient.

Zero Trust patterns in AI environments usually involve:

- Segmented execution layers separating models from core data systems

- Continuous verification before allowing service-to-service communication

- Least-privilege access applied to model dependencies

This approach limits how far a compromised component can move across the system.

Not sure where your AI system might be exposed?

See how we secure enterprise systems before risks scale.

When Claude Mythos Risks Turn Into Compliance Exposure

Claude Mythos risks not staying technical for long. In enterprise environments, they surface as compliance gaps the moment regulated data enters AI workflows. Once models interact with sensitive data, prompts and outputs become part of the audit surface.

Why Traditional Compliance Assumptions Break

Most compliance frameworks were built for predictable systems. They assume fixed workflows, structured inputs, and controlled outputs.

AI systems behave differently. They generate responses dynamically, pull context at runtime, and often rely on external services. This makes it harder to track where data flows and how it is used, which creates gaps during audits.

Where Data Exposure Typically Happens

In practice, compliance issues show up in a few common places:

- Prompts containing sensitive data stored in logs or external systems

- Model outputs referencing restricted or regulated information

- API interactions moving data across systems without clear boundaries

These are not system failures. They are control gaps that become visible during audits.

Impact Across Key Frameworks

Regulatory frameworks already expect strong data control, even if they do not explicitly mention AI.

- GDPR requires strict handling of personal data, which can be exposed through prompts or responses

- HIPAA applies when patient data appears in model interactions or logs

- SOC 2 focuses on access control and monitoring, which weakens with poor token and identity management

- ISO 27001 requires governance over data flow and system behavior

- NIST emphasizes risk modeling, which becomes harder when systems behave dynamically

The Core Shift

Claude Mythos highlights a simple but important change. AI risk becomes compliance risk as soon as regulated data enters the system.

What looks like a minor configuration issue, such as weak prompt filtering or broad access permissions, can quickly turn into an audit finding or reportable incident once data moves outside expected boundaries.

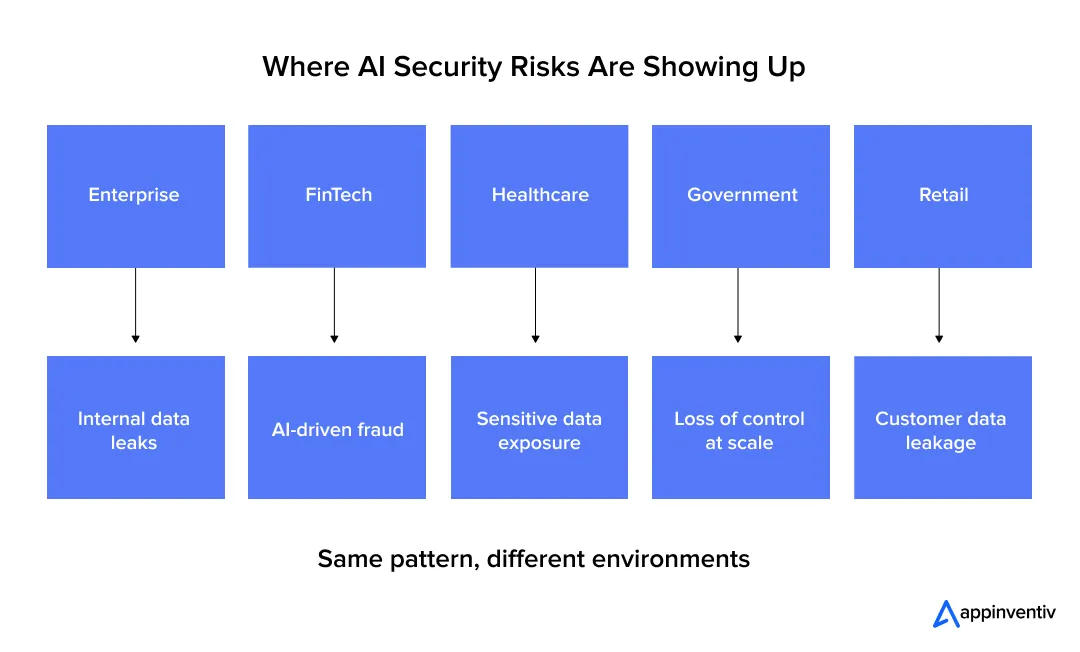

Where Claude Mythos Risks Are Already Showing Up Across Industries

Claude Mythos risks are already visible in real enterprise environments. The pattern is consistent across industries. AI does not fail like traditional systems. It introduces exposure through prompts, outputs, and integrations that operate inside normal workflows.

Recent 2026 research shows this is no longer edge-case behavior. 68% of organizations have already experienced data leaks linked to AI usage, yet only 23% have formal governance policies in place.

Enterprise / Technology (Cross-Industry)

The most widely known real-world incident remains the internal data leak at Samsung.

- Employees uploaded confidential code and internal documents into ChatGPT

- The data was shared during routine engineering workflows

- The organization later restricted AI tool usage

This incident is still referenced in 2026 security analysis as a baseline example of prompt-driven data leakage, not a breach.

The key takeaway is simple. Data left the system through normal usage, not through an attack.

FinTech and Banking

In financial environments, the risk shows up in two areas: automation errors and AI-driven fraud vectors.

Recent 2026 reports highlight that:

- AI-generated phishing attacks are increasing rapidly

- Financial institutions are among the primary targets

- AI is being used to craft highly convincing fraud campaigns

Phishing attacks targeting financial systems have surged dramatically, with AI enabling personalization and scale beyond traditional methods.

This shifts risk from infrastructure compromise to behavioral manipulation and decision-layer failure.

Healthcare

Healthcare environments are especially sensitive because AI systems often interact with protected patient data.

Modern research shows that:

- AI systems can expose or infer sensitive information through prompts

- Data leakage does not require system compromise

- Model behavior under specific inputs can reveal restricted context

Recent enterprise-focused AI research highlights that employees unintentionally sharing confidential data and models generating policy-violating outputs remain primary risks in production environments.

In healthcare, this translates directly into compliance exposure under regulations like HIPAA.

Government and Public Sector

Public sector risk is less about breach events and more about systemic exposure and misuse.

The 2026 International AI Safety Report identifies three key risk categories:

- Malicious use (scams, cyberattacks, disinformation)

- Technical failures (unpredictable outputs, unsafe recommendations)

- Systemic risks (dependency on external AI systems)

These risks become critical in government environments where AI systems interact with sensitive or classified data.

The concern is not just data exposure, but loss of control over how AI systems behave at scale.

Retail and Customer Platforms

Retail systems rely heavily on AI for personalization and customer interaction. The risk appears when models process large volumes of user data in real time.

Recent 2026 security analysis highlights:

- Customer data leakage through AI interfaces

- Prompt-driven exposure of user information

- Misconfigured APIs leading to unintended data access

In one documented case, an AI chatbot exposed customer data across accounts due to weak access controls and model behavior issues.

This type of failure is difficult to detect because the system continues functioning normally.

The Common Pattern Across Industries

Across all sectors, the pattern is now well established:

- Data leaks through prompts, not exploits

- Outputs introduce risk even when systems appear stable

- AI expands attack surfaces inside workflows, not just networks

- External tools and APIs extend enterprise boundaries without visibility

2026 research confirms that AI risk is accelerating faster than governance maturity. Organizations are deploying AI widely, but control layers are still catching up.

Claude Mythos reflects this shift. Risk is no longer confined to infrastructure. It exists inside how AI systems process, interpret, and act on information.

Best Practices to Reduce Claude Mythos Cybersecurity Risk

Claude Mythos risks usually come from gaps in how AI systems are integrated, not from the model itself. Reducing exposure means controlling how data enters, how the model behaves, and how outputs are used inside workflows.

What Enterprises Should Put in Place

- Define AI-specific threat models: Go beyond infrastructure risk. Map threats across prompts, model behavior, APIs, and downstream actions. Include scenarios like prompt injection, data leakage, and output manipulation.

- Restrict external model access: Limit which teams, systems, and environments can call external AI services. Avoid sending sensitive data to public models unless isolation and contractual safeguards are in place.

- Use encrypted and controlled prompt channels: Ensure prompts are transmitted over secure channels and avoid logging sensitive inputs unnecessarily. Apply input filtering before requests reach the model.

- Enforce strong identity and access controls: Use role-based access, short-lived tokens, and service isolation. Avoid broad permissions for model-connected systems and rotate credentials regularly.

- Monitor model outputs continuously: Treat outputs as untrusted until validated. Detect anomalies such as sensitive data exposure, unexpected commands, or policy-violating responses.

- Audit AI workflows and data movement: Track how data flows through prompts, logs, APIs, and model responses. Maintain audit trails that can support compliance reviews and incident investigation.

- Introduce output validation layers: Add rule-based or secondary checks before model outputs trigger actions in production systems. This reduces the impact of hallucinations or unsafe responses.

This approach works because it focuses on real control points across the AI lifecycle, not just perimeter security.

Future of AI Security After Claude Mythos

Claude Mythos is forcing a shift from reactive security to systems that understand and control AI behavior in real time. The next phase is not about adding more alerts. It is about building controls that operate at the same speed and complexity as AI systems themselves.

AI-Native SOC Tools

Traditional SOC platforms analyze logs and network events. AI-native SOC tools go deeper into model activity.

They track prompt patterns, output behavior, and API interactions as first-class signals. Instead of only asking “was access authorized,” they ask “was the model behavior expected.”

This allows security teams to detect issues like prompt injection attempts or abnormal response patterns that would otherwise look like valid traffic.

Autonomous Threat Detection

AI systems introduce risks that change with inputs, not just infrastructure. Static rules struggle to keep up.

New AI models for thread detection are being built to:

- Identify unusual prompt structures

- Detect output anomalies in real time

- Flag behavior that deviates from expected model patterns

These systems learn from interaction data, making detection adaptive rather than rule-based.

Policy-Driven AI Governance

Security controls are moving closer to policy engines that define how AI systems should behave.

Instead of relying only on manual checks, enterprises are implementing:

- Policies that restrict what data models can access

- Rules that control how outputs can be used

- Enforcement layers that apply across APIs and workflows

This creates consistency across environments, even as systems scale.

Continuous Risk Scoring

AI risk is no longer static. It changes with every interaction, dataset update, or integration.

Enterprises are starting to measure risk continuously by evaluating:

- Prompt sensitivity levels

- Data exposure likelihood

- Model behavior under different conditions

This allows teams to move from periodic audits to real-time risk visibility.

What This Shift Means

AI security is moving away from perimeter defense toward behavior control. Systems will be evaluated not just on uptime and access, but on how safely they process and respond to information.

Claude Mythos is not just highlighting current gaps. It is shaping how future security systems will be designed.

AI security is changing fast, and most teams are still catching up.

If you’re planning ahead, now is the right time to act.

How Appinventiv Helps Enterprises Build Secure AI Systems at Scale

In most enterprise setups, AI security issues don’t come from a lack of tools. They show up when different parts of the system are put together in a hurry. Models get connected to APIs, data starts flowing, and only later do teams step back and ask how all of this is being controlled. Appinventiv’s cybersecurity services tend to approach this differently, by looking at the system as a whole before it goes into production.

At the architecture level, the focus is on putting some structure around how things connect. Instead of letting models directly interact with everything, environments are segmented, APIs are gated, and data pipelines are checked before anything reaches the model. It is less about locking everything down and more about making sure one weak point does not expose the entire system.

Governance is treated as something that runs with the system, not something that sits in a document. Access is defined based on roles, prompts are filtered, outputs are not left unchecked, and model changes are tracked over time. When something unusual happens, teams can actually trace it back instead of guessing which version or input caused it.

Monitoring also shifts a bit here. It is not just about uptime or API latency. The focus is on how the model is behaving. Are prompts starting to look unusual? Are outputs exposing more than they should? Is API usage changing in a way that does not match expected patterns? These are the signals that usually matter, and they are easy to miss without the right visibility.

Compliance, in practice, becomes much easier when it is built in early. Frameworks like GDPR, HIPAA, SOC 2, ISO 27001, and NIST still apply, but the challenge is mapping them to systems that do not behave in fixed ways. When controls are part of the design from the beginning, audits tend to be straightforward. When they are added later, gaps start to show up very quickly.

FAQs

Q. What is Claude Mythos in cybersecurity?

A. Claude Mythos is not a formal standard or framework. It is a way teams are describing a new kind of risk that shows up when AI systems are used in real environments. The issue is not just about system vulnerabilities. It is about how models behave when they process prompts, access data, and generate responses. That behavior can sometimes lead to exposure even when everything looks technically “secure.”

Q. Why is Claude Mythos important for enterprise AI security?

A. It matters because the risk is easy to overlook. Most security models are built around infrastructure, networks, and access. AI changes that. Now the risk sits inside how the system interprets inputs and produces outputs. A normal request can still lead to a problem if the model is not properly controlled. That is a different mindset for most security teams.

Q. How does Claude Mythos affect digital transformation efforts?

A. As AI gets added into products, workflows, and internal tools, it starts interacting with real data and decisions. That is where things get sensitive. The more connected the system becomes, the harder it is to track where data is going and how it is being used. Claude Mythos shows up in these gaps, especially when AI is deployed faster than the controls around it.

Q. What are the biggest AI cybersecurity risks right now?

A. A few patterns keep coming up across organizations:

- Sensitive data being shared through prompts without realizing it

- Models giving outputs that look correct but are actually misleading

- Prompt injection changing how the system behaves

- Weak access control between models and internal systems

- AI being used to create highly convincing phishing or fraud attempts

These are not always dramatic incidents. Most of them start small and grow over time.

Q. How can enterprises reduce AI security risk?

A. There is no single fix. What helps is putting controls at the right points in the system.

- Define risks around prompts, outputs, and integrations early

- Limit where and how external AI tools are used

- Add validation before inputs reach the model

- Keep an eye on outputs, not just inputs

- Maintain logs so behavior can be traced later

The idea is to manage how the system behaves, not just where it runs.

Q. Is Claude Mythos a real threat or just a concept?

A. It is more of a pattern than a single threat. The risks it describes are already being seen in real systems. Data exposure through prompts, unexpected outputs, and misuse of external AI tools are all happening today. Claude Mythos simply gives a name to that shift.

Q. Do existing cybersecurity frameworks cover these risks?

A. They help, but they do not cover everything. Traditional frameworks are still useful for access control and monitoring. The gap is that they were designed for systems that behave predictably. AI systems do not always follow fixed patterns, so additional controls are needed around prompts, outputs, and model behavior.