Key takeaways:

- Most AI voice agent challenges are architectural, not model-related: latency, context, integration, noise, and compliance.

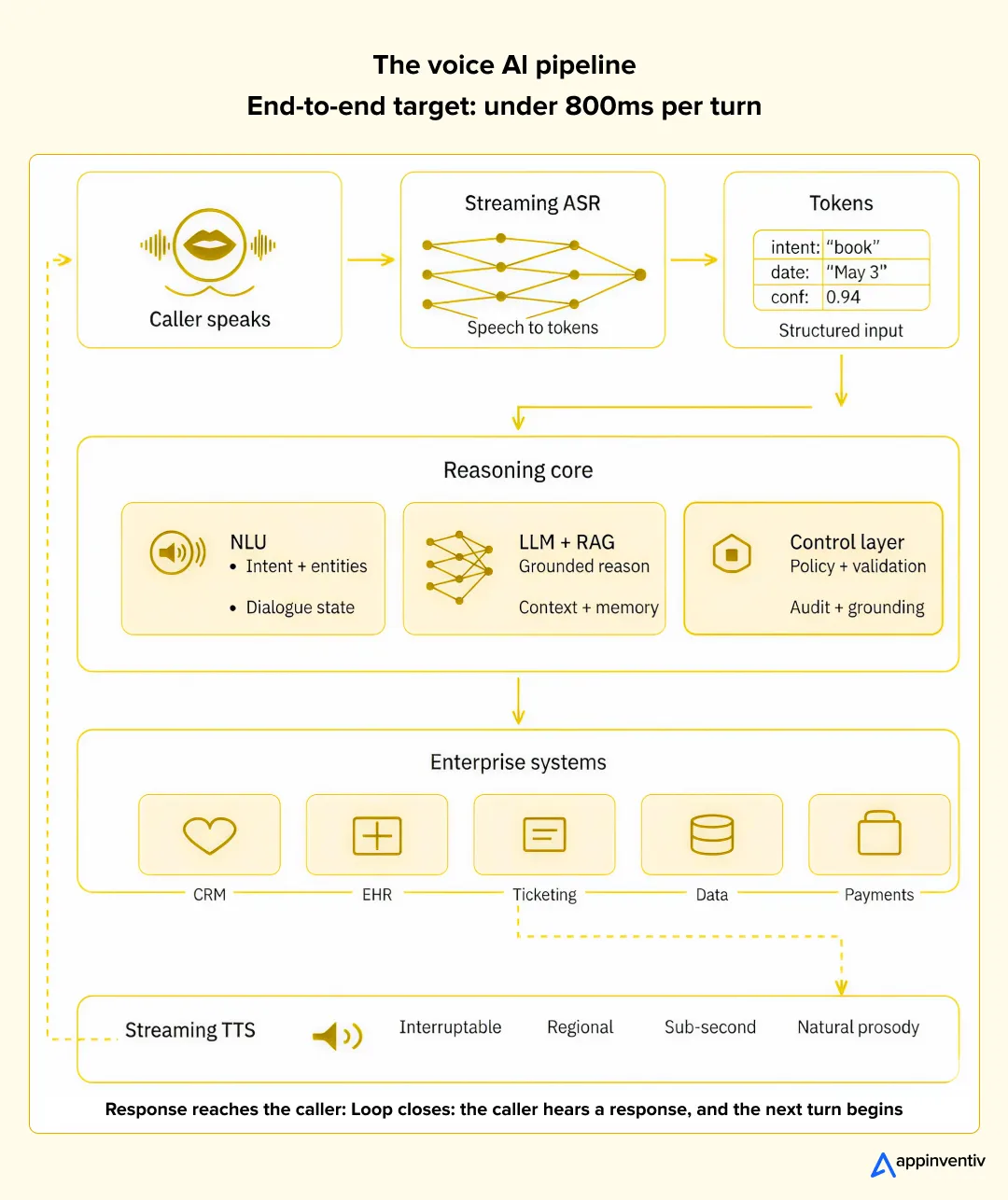

- A modular pipeline with streaming ASR, a control layer, and strict data governance is what separates demos from production.

- Voice AI fails louder than other AI. The customer hears it and hangs up.

Your voice agent demo was flawless. The board loved it. Then you rolled it out to 5% of inbound calls, and within a week, the containment rate was sitting at 31%, customers were getting cut off mid-sentence, and your contact center lead was quietly asking when you were going to “turn the thing off.”

Most AI voice agent challenges in production look nothing like the ones flagged during scoping. Dialogue loops. A 2.8-second pause before the bot responds. A misheard word in the transcript that places the wrong order. A cold handoff to a human who has no idea what was just discussed. Each one is fixable, and not one of them is the “AI” problem most teams assume it is.

Nearly every voice AI failure we’ve diagnosed over the last decade comes down to architecture, not model choice. Get the fundamentals right, and the same LLM that was struggling suddenly feels like a senior agent.

This piece walks through the eight challenge categories that account for virtually every voice AI failure mode, what breaks inside each one, and how we fix it. If you’re planning to build an AI voice agent, use it as a checklist.

Why AI Voice Agents Fail: The Pattern We See Every Time

Most AI voice assistant challenges fall into six repeat offenders. None are new. All are avoidable.

| Failure Mode | What It Looks Like in Production | Root Cause |
|---|---|---|
| Poor conversational design & latency | Awkward pauses, rigid dialogue loops when users go off-script | No streaming, over-scripted flows |
| Failed turn-taking | The bot talks over users or freezes waiting for a turn that already happened | Weak end-of-speech detection, no barge-in |
| System integration failures | The bot understands the user, but fails to update the CRM or trigger the refund | Brittle connections, missing idempotency |
| Lack of context | Agent forgets turn 1 by turn 5; multi-step requests collapse | No session memory, weak dialogue manager |
| “Demo” syndrome | Works on scripted calls, breaks the second a real user deviates | Built for wow factor, not robustness |
| No human fallback | User stuck in a loop or dumped to dead tone | No graceful handoff path |

Gartner’s 2026 research found that 57% of failed AI initiatives stemmed from unrealistic expectations and 38% from poor data quality. These are scoping and governance problems that surface as engineering problems six months later.

The Voice AI Pipeline: Where Things Actually Break

Before the challenges, it helps to see the pipeline. Every voice agent has the same five layers, and every AI voice agent architecture challenge lives inside one of them.

Miss any layer — especially the control layer — and the system breaks under real traffic.

The 8 Biggest AI Voice Agent Implementation Challenges

1. How Does Latency Impact AI Voice Agent Performance?

Humans expect a conversational turn in under 800ms. Past 1.5 seconds, users assume something broke. This is the root of most voice bot performance issues we get asked to diagnose.

Where time leaks:

| Stage | Typical Latency | Primary Culprit |
|---|---|---|
| ASR (speech-to-text) | 150–400ms | Batch processing instead of streaming |
| NLU + LLM reasoning | 300–1500ms | Model size, no token streaming |
| Business logic/tool calls | 100–800ms | Slow downstream APIs |
| TTS (text-to-speech) | 150–400ms | Chunked instead of streaming |

How we solve it: Stream end-to-end. Tune end-of-speech detection per use case. Build interruptible TTS. Deploy regionally. Co-locate ASR, LLM, and TTS near the telephony edge. Cache predictable TTS responses. Parallelize tool calls. Monitor P95, not average.
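
To make "monitor P95, not average" concrete, here is a minimal sketch of a latency report. It assumes you already log one response latency per turn (end of caller speech to first audio out); the function names and the 800 ms budget constant are illustrative, not part of any particular monitoring stack.

```python
import math

# Assumed budget: the ~800 ms conversational target cited above.
TURN_BUDGET_MS = 800


def percentile(samples, pct):
    """Nearest-rank percentile: smallest sample with at least pct% of samples at or below it."""
    if not samples:
        raise ValueError("no latency samples recorded")
    ordered = sorted(samples)
    rank = max(1, math.ceil(pct * len(ordered) / 100))
    return ordered[rank - 1]


def latency_report(turn_latencies_ms):
    """Summarize per-turn response latency (hypothetical helper)."""
    return {
        "mean_ms": sum(turn_latencies_ms) / len(turn_latencies_ms),
        "p95_ms": percentile(turn_latencies_ms, 95),
        "over_budget": sum(1 for ms in turn_latencies_ms if ms > TURN_BUDGET_MS),
    }


# 17 fast turns and 3 slow ones: the mean looks healthy, the tail does not.
samples = [500] * 17 + [2000] * 3
report = latency_report(samples)
print(report)
```

In this sample the mean sits under the budget while the P95 is 2,000 ms, which is the gap an average-only dashboard hides.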

Real-time voice AI challenges are a systems problem, not a model problem. A 13B model in a sloppy pipeline will always feel slower than a 70B model wired up right.
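
One systems-level fix from the list above, parallelizing tool calls, can be sketched with `asyncio`. The two lookup functions are hypothetical stand-ins for real CRM and order-system calls, and the timeout value is an assumption.

```python
import asyncio


async def fetch_account(caller_id):
    await asyncio.sleep(0.05)  # stand-in for a CRM lookup (hypothetical)
    return {"caller_id": caller_id, "tier": "gold"}


async def fetch_open_orders(caller_id):
    await asyncio.sleep(0.05)  # stand-in for an order-system lookup (hypothetical)
    return ["order-1001"]


async def gather_context(caller_id, timeout_s=0.5):
    """Run independent lookups concurrently so the wait is the slowest call, not the sum."""
    account, orders = await asyncio.wait_for(
        asyncio.gather(fetch_account(caller_id), fetch_open_orders(caller_id)),
        timeout=timeout_s,
    )
    return {"account": account, "orders": orders}


context = asyncio.run(gather_context("caller-42"))
print(context)
```

This only helps when the calls are independent of each other. A lookup that needs the result of another still has to run in sequence.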

2. How Do You Handle Speech Recognition and Understanding Accurately?

ASR quality sets the ceiling for everything downstream. Wrong words in, wrong actions out.

| Common Problems in AI Voice Assistants | Fix |
|---|---|
| Accent and dialect variation (Indian, Scottish, Nigerian, Southern US) | Transfer learning on speaker-representative data |
| Domain vocabulary (drug names, SKUs, internal codes) | Phonetic lexicons, domain-specific acoustic training |
| Slang and code-switching | Multilingual ASR with code-switch handling |
| Speech disorders, elderly speech patterns | Longer utterance tolerance, adaptive prosody |
| AI hallucination problems from bad transcription | Confidence scoring + fallback (“could you repeat?”); grounding against verified data |
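
The confidence-scoring fallback in the last row can be sketched as a small gate in front of the LLM. The thresholds and the `high_risk` flag are assumptions for illustration; real values depend on the ASR engine and need tuning against labeled calls.

```python
from dataclasses import dataclass

# Hypothetical thresholds; tune per ASR engine and use case.
CONFIRM_THRESHOLD = 0.85
REPROMPT_THRESHOLD = 0.60


@dataclass
class Transcript:
    text: str
    confidence: float  # 0.0 to 1.0, as reported by the ASR engine


def next_step(transcript, high_risk=False):
    """Decide whether to act on a transcript, read it back, or ask the caller to repeat."""
    if not transcript.text.strip() or transcript.confidence < REPROMPT_THRESHOLD:
        return "reprompt"  # "Sorry, could you repeat that?"
    if high_risk or transcript.confidence < CONFIRM_THRESHOLD:
        return "confirm"   # read the value back before acting on it
    return "accept"


print(next_step(Transcript("track my order", 0.95)))
print(next_step(Transcript("refill lisinopril", 0.95), high_risk=True))
print(next_step(Transcript("uh the thing", 0.40)))
```

Marking flows such as prescription refills as high risk forces a read-back even when the recognizer is confident.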

As noted in voice agent security standards across industries, these agents execute real transactions — a 5% transcription error on a shipping flow is a refund problem, but on a prescription refill, it’s a patient safety problem.

3. How Do You Manage Background Noise and Poor Acoustics?

Call centers have HVAC hum. Warehouses have forklift beeps. Drive-throughs have wind. Voice AI integration challenges multiply when the input isn’t clean.

How we solve it: Beamforming microphones, where we control hardware. Noise reduction at ingress (spectral subtraction + RNN denoising). Acoustic modeling trained on deployment-environment audio. Packet loss concealment for VoIP. Codec-aware tuning. Barge-in detection that distinguishes noise from speech.
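
As a rough illustration of barge-in detection that separates noise from speech, the sketch below tracks a running noise floor and only fires after several consecutive loud frames. It is an energy-only heuristic with made-up parameters, not a trained voice activity detector, and it assumes the first frame it sees is background noise.

```python
import math


def rms(frame):
    """Root-mean-square level of one audio frame (samples as floats in [-1, 1])."""
    return math.sqrt(sum(s * s for s in frame) / len(frame))


class BargeInDetector:
    """Energy-based sketch: sustained energy well above the noise floor counts as speech."""

    def __init__(self, ratio=3.0, min_frames=3, alpha=0.05, min_floor=1e-4):
        self.ratio = ratio            # how far above the floor counts as "loud"
        self.min_frames = min_frames  # consecutive loud frames required to fire
        self.alpha = alpha            # smoothing factor for the noise-floor estimate
        self.min_floor = min_floor    # avoids a zero floor on digital silence
        self.noise_floor = None
        self.loud_run = 0

    def process(self, frame):
        level = rms(frame)
        if self.noise_floor is None:
            self.noise_floor = max(level, self.min_floor)
            return False
        if level > self.noise_floor * self.ratio:
            self.loud_run += 1
        else:
            self.loud_run = 0
            # Update the floor only on quiet frames so speech does not raise it.
            smoothed = (1 - self.alpha) * self.noise_floor + self.alpha * level
            self.noise_floor = max(self.min_floor, smoothed)
        return self.loud_run >= self.min_frames


hum = [0.01] * 160     # steady background, e.g. HVAC
speech = [0.5] * 160   # much louder frame

detector = BargeInDetector()
click = [detector.process(f) for f in [hum, hum, speech, hum, hum]]
talk = [detector.process(f) for f in [hum, speech, speech, speech]]
print(click, talk)
```

A single loud frame (a beep or a click) resets before it reaches `min_frames`, while sustained energy triggers the interruption.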

Most voice AI system failures here trace back to training data that never saw the environment the bot lives in.

4. How Do You Manage Context and Conversation Flow?

Context management is where demos die on the way to production. Conversational AI voice agent problems here look like: the user says “cancel that one,” and the bot has to know what “that one” means three turns deep.

Six mechanisms working together:

| Mechanism | What It Does |
|---|---|
| Session memory | Persists state across turns within a call |
| Saved memory | Recognizes returning callers, skips redundant verification |
| Entity extraction | Pulls dates, amounts, names and IDs into a structured state |
| Intent resolution with confidence scoring | Ambiguous intents trigger clarification, not guessing |
| Goal-based flow design | Every turn is evaluated against a defined outcome |
| Dialogue management | Decides: proceed, clarify, escalate, end |
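
A minimal sketch of how session memory, entity slots, confidence scoring, and the proceed/clarify/escalate/end decision fit together. The class and parameter names are hypothetical, and a production dialogue manager would carry far more state than this.

```python
from dataclasses import dataclass, field
from enum import Enum


class Action(Enum):
    PROCEED = "proceed"
    CLARIFY = "clarify"
    ESCALATE = "escalate"
    END = "end"


@dataclass
class SessionState:
    """Per-call memory: the goal, the entities gathered so far, and clarification attempts."""
    goal: str
    required_slots: tuple
    slots: dict = field(default_factory=dict)
    clarify_count: int = 0
    goal_done: bool = False


def decide(state, intent_confidence, entities, min_confidence=0.7, max_clarify=2):
    """Evaluate one turn against the goal; thresholds are illustrative assumptions."""
    if state.goal_done:
        return Action.END
    if intent_confidence < min_confidence:
        state.clarify_count += 1
        if state.clarify_count > max_clarify:
            return Action.ESCALATE  # stop looping, hand off to a human
        return Action.CLARIFY
    state.slots.update(entities)    # entities persist across turns
    missing = [s for s in state.required_slots if s not in state.slots]
    return Action.CLARIFY if missing else Action.PROCEED


state = SessionState(goal="cancel_order", required_slots=("order_id",))
first = decide(state, 0.9, {})                    # intent clear, order unknown
second = decide(state, 0.9, {"order_id": "A17"})  # "that one" resolved to an ID
print(first, second)
```

Because the slots live in the session rather than in the prompt, a later "cancel that one" can be resolved against state instead of being re-guessed by the model.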

When we audit AI voice bot performance problems, the fix is almost always here. Teams reach for a bigger LLM when they need a stronger dialogue manager. We see this most often in complex projects where callers are unpredictable, such as AI voice receptionist development.

5. What Does Voice AI Infrastructure and Integration Look Like in Production?

Enterprise voice AI deployment issues cluster around architecture. A sandbox bot collapses under production load because nobody designed for scale, failover, or the seven enterprise systems it needs to touch.

The architecture pattern: modular, swappable components (no vendor lock-in); customizable pipelines per use case; hybrid cloud-plus-edge deployment; streaming end-to-end with back-pressure handling; integration with Salesforce, Zendesk, Epic, Cerner, Twilio, Genesys.

In production, this is deployed as distributed microservices. Not a monolith. Not a single vendor’s black box. That modularity is what our AI agent development services team defaults to — it’s the only pattern we’ve seen survive a real rollout.

Integration patterns that hold up:

| Pattern | Prevents |
|---|---|
| Event-driven architecture | Downstream actions blocking the conversation |
| Contract-first API design | Silent breakage when CRM schemas change |
| Idempotency keys on writes | Duplicate tickets from retried calls |
| Graceful degradation | Total failure when CRM is down |
| Unified customer context | Round-tripping multiple systems per turn |
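
The idempotency-key row is the easiest of these to show in code. Below is a sketch with an in-memory stand-in for a ticketing API; the key derivation (call ID, turn number, action) is one plausible scheme, not a prescribed one.

```python
import hashlib


def idempotency_key(call_id, turn, action):
    """Derive a stable key so a retry of the same write maps to the same key."""
    raw = f"{call_id}:{turn}:{action}".encode()
    return hashlib.sha256(raw).hexdigest()


class TicketStore:
    """In-memory stand-in for a CRM write API that honors idempotency keys (hypothetical)."""

    def __init__(self):
        self._by_key = {}
        self.tickets = []

    def create_ticket(self, key, payload):
        if key in self._by_key:
            return self._by_key[key]  # retry: return the original result, write nothing
        ticket = {"id": len(self.tickets) + 1, **payload}
        self.tickets.append(ticket)
        self._by_key[key] = ticket
        return ticket


store = TicketStore()
key = idempotency_key("call-789", turn=4, action="create_ticket")
original = store.create_ticket(key, {"subject": "Damaged item"})
retried = store.create_ticket(key, {"subject": "Damaged item"})  # e.g. after a timeout
print(original["id"], retried["id"], len(store.tickets))
```

The retried call returns the original ticket instead of creating a second one, which is what keeps a network timeout from turning into duplicate tickets.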

This is where proper AI integration services pay back.

The control layer is the piece most teams skip and regret.

It sits between the LLM and everything else — the blast shield between model reasoning and your systems of record.

| Function | What It Prevents |
|---|---|
| Policy enforcement | LLM quoting unapproved prices or exceeding refund limits |
| Tool call validation | Malformed API calls reaching production |
| Grounding | Hallucinated facts leaking into customer conversations |
| Audit and observability | Silent failures; no root cause for incidents |
| Human-in-the-loop routing | Borderline cases causing damage |
| AI agent interoperability | Future agents breaking when you extend the system |

Skip the control layer, and you’ve wired an unpredictable LLM directly into your production database. Which is exactly how you end up on a postmortem.
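
A minimal sketch of three control-layer functions from the table (tool call validation, policy enforcement, and audit logging), assuming the LLM proposes tool calls as a name plus an argument dict. The tool names, schema format, and refund limit are all hypothetical.

```python
from dataclasses import dataclass

REFUND_LIMIT = 100.00  # hypothetical policy limit
TOOL_SCHEMAS = {       # hypothetical allow-list: tool name -> expected argument types
    "lookup_order": {"order_id": str},
    "issue_refund": {"order_id": str, "amount": (int, float)},
}
AUDIT_LOG = []         # stand-in for a tamper-evident audit sink


@dataclass
class Verdict:
    allowed: bool
    reason: str
    route_to_human: bool = False


def validate_tool_call(name, args):
    """Check a model-proposed tool call before it touches a system of record."""
    verdict = _check(name, args)
    AUDIT_LOG.append({"tool": name, "args": args, "allowed": verdict.allowed,
                      "reason": verdict.reason})
    return verdict


def _check(name, args):
    schema = TOOL_SCHEMAS.get(name)
    if schema is None:
        return Verdict(False, f"unknown tool: {name}")
    if set(args) != set(schema):
        return Verdict(False, "missing or unexpected arguments")
    for field_name, expected in schema.items():
        value = args[field_name]
        # bool is a subclass of int in Python, so reject it explicitly.
        if isinstance(value, bool) or not isinstance(value, expected):
            return Verdict(False, f"bad type for {field_name}")
    if name == "issue_refund":
        if args["amount"] <= 0:
            return Verdict(False, "refund amount must be positive")
        if args["amount"] > REFUND_LIMIT:
            return Verdict(False, "refund exceeds limit", route_to_human=True)
    return Verdict(True, "ok")


small = validate_tool_call("issue_refund", {"order_id": "A17", "amount": 25.0})
large = validate_tool_call("issue_refund", {"order_id": "A17", "amount": 500.0})
print(small, large, len(AUDIT_LOG))
```

The over-limit refund is not silently dropped: it is blocked, logged, and flagged for human review, which is the human-in-the-loop routing row in practice.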

6. How Do You Build Secure, Privacy-Compliant Voice AI?

Voice agent security cannot be bolted on after launch. Voice data is biometric in most jurisdictions. In US healthcare, it’s PHI.

Compliance map:

| Regulation | Scope | Key Requirements |
|---|---|---|
| HIPAA | US healthcare | BAAs, encryption, audit logs, minimum-necessary principle |
| GDPR | EU | DPAs, lawful basis, consent, right to erasure |
| BIPA / CUBI / CCPA-CPRA | US state biometric and privacy laws | Voiceprint protection, written consent |
| SOC 2 Type II / ISO 27001 | Enterprise procurement | Security controls, independent audit |
| PCI-DSS | Card data in voice flows | DTMF masking, tokenization |

What we embed from day one: consent capture at call open, tiered retention (raw audio expires fastest), tamper-evident audit logs, role-based access, biometric templates encrypted separately from audio, and no third-party LLMs that retain prompts.
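
Tiered retention can be expressed as a small policy table plus a purge check. The retention windows below are placeholders chosen only to show raw audio expiring fastest; actual windows are a legal and compliance decision, not something to copy from a sketch.

```python
from datetime import datetime, timedelta, timezone

# Placeholder windows (assumptions): raw audio expires first, audit logs last.
RETENTION = {
    "raw_audio": timedelta(days=7),
    "transcript": timedelta(days=90),
    "audit_log": timedelta(days=2190),
}


def is_expired(kind, created_at, now):
    """True once a record has outlived the retention window for its data tier."""
    return now - created_at > RETENTION[kind]


def purge_candidates(records, now):
    """Return IDs of records that should be deleted on this pass."""
    return [r["id"] for r in records if is_expired(r["kind"], r["created_at"], now)]


now = datetime(2025, 6, 1, tzinfo=timezone.utc)
records = [
    {"id": "a1", "kind": "raw_audio", "created_at": now - timedelta(days=10)},
    {"id": "t1", "kind": "transcript", "created_at": now - timedelta(days=10)},
    {"id": "l1", "kind": "audit_log", "created_at": now - timedelta(days=10)},
]
to_purge = purge_candidates(records, now)
print(to_purge)
```

All three records are the same age, but only the raw audio is past its window.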

In healthcare deployments, voice data should be handled as protected health information (PHI) across its entire lifecycle, from capture and processing to storage and deletion. That covers raw audio, transcripts, and system logs.

A HIPAA-aligned architecture for medical voice assistants typically emphasizes strict safeguards such as encryption, access controls, auditability, and data minimization. A robust voice agent security model also separates identity, authorization, data handling, and execution into independently governed and auditable layers.

7. How Do You Handle Multilingual and Cross-Cultural Deployments?

Global rollouts multiply every other problem. A bot that works in Dallas will crash in Mumbai, São Paulo, or Berlin — not because of translation, but because of everything around it.

| What Breaks | Why |
|---|---|
| Accents within the same language | Different acoustic profiles, model bias toward North American English |
| Code-switching mid-sentence | Monolingual pipelines drop the secondary language |
| Cultural tone and norms | American casual warmth sounds unprofessional in German or Japanese contexts |
| Brand consistency | TTS voice character shifts across languages |
| Data scarcity | Thin training sets for less-resourced languages |
| Data residency (EU, India DPDP) | Can’t route audio to US inference infrastructure |
| Entity mapping | Locale-specific addresses, dates and IDs choke generic extractors |

How we solve it: We don’t translate a master English flow. We build locale-specific conversation flows from scratch.
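
The data residency constraint from the table can be sketched as a routing rule that fails closed. The region names, locale mapping, and the set of residency-pinned locales are illustrative assumptions; which locales must be pinned is a legal determination.

```python
# Hypothetical mapping of locale-specific flows to inference regions.
HOME_REGION = {
    "en-US": "us-east",
    "de-DE": "eu-central",
    "hi-IN": "ap-south",
    "pt-BR": "sa-east",
}
# Locales whose audio must stay in-region (assumption for illustration).
RESIDENCY_PINNED = {"de-DE", "hi-IN"}
DEFAULT_FALLBACK = "us-east"


def pick_inference_region(locale, healthy_regions):
    """Choose where to run ASR/LLM/TTS for a call, or None if no compliant region is up."""
    home = HOME_REGION.get(locale)
    if home is None:
        raise ValueError(f"no conversation flow configured for locale {locale!r}")
    if home in healthy_regions:
        return home
    if locale in RESIDENCY_PINNED:
        return None  # fail closed: hand off to a human rather than export audio
    return DEFAULT_FALLBACK if DEFAULT_FALLBACK in healthy_regions else None


print(pick_inference_region("de-DE", {"us-east", "eu-central"}))
print(pick_inference_region("de-DE", {"us-east"}))
print(pick_inference_region("pt-BR", {"us-east"}))
```

Returning `None` for a pinned locale during an outage is deliberate: the caller gets a human or a callback instead of having their audio routed out of region.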

8. How Do You Design Voice AI for Real Human Factors?

AI voice automation challenges here look like: the bot is technically correct, but feels robotic. Users don’t forgive that.

| Design Pattern | What It Delivers |
|---|---|
| Adaptive pacing | TTS matches caller tempo (slower for the elderly, faster for the rushed) |
| Sentiment detection | Frustrated callers get different routing than calm ones |
| Barge-in detection | Natural interruption without losing context |
| End-of-speech detection | No cutting off a thoughtful user mid-thought |
| Personalization | Returning callers skip redundant verification |
| Graceful escalation | Human agent receives transcript, intent, sentiment and state |
| Accent adaptation | System adjusts response style, not just transcription |
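
Graceful escalation comes down to what the human agent receives at handoff. The sketch below packages transcript, intent, sentiment, and state into one payload and pairs it with a simple escalation rule; the field names, score range, and thresholds are assumptions.

```python
import json
from dataclasses import asdict, dataclass, field


@dataclass
class Handoff:
    """What the human agent sees when the call arrives (hypothetical schema)."""
    call_id: str
    intent: str
    sentiment: str
    state: dict
    transcript: list = field(default_factory=list)


def should_escalate(sentiment_score, failed_turns, min_sentiment=-0.5, max_failed_turns=2):
    """Escalate on clear frustration or repeated failed turns; score assumed in [-1, 1]."""
    return sentiment_score <= min_sentiment or failed_turns > max_failed_turns


def build_handoff_payload(handoff):
    """Serialize the handoff for the agent desktop or CRM screen-pop."""
    return json.dumps(asdict(handoff), ensure_ascii=False)


handoff = Handoff(
    call_id="call-789",
    intent="cancel_order",
    sentiment="frustrated",
    state={"order_id": "A17", "verified": True},
    transcript=["Caller: I want to cancel that one.", "Agent: Which order do you mean?"],
)
payload = build_handoff_payload(handoff)
print(should_escalate(-0.8, failed_turns=1), payload)
```

With this payload the agent can open with the order already on screen, which is the difference between a warm handoff and the cold one described at the top of the article.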

For AI impact on business to show up in the metrics, it has to show up in the call. Our dedicated AI engineers treat this as a first-class design concern, not an afterthought.

How Can Appinventiv Help You Out?

A decade building secure, compliance-heavy software across healthcare, fintech, retail, and enterprise. 300+ AI solutions delivered. 500+ digital health platforms. 75+ enterprise integrations. Deloitte’s Tech Fast 50 in both 2023 and 2024.

What we bring to AI voice agent development services engagements:

| Capability | What It Means For You |
|---|---|
| Production architecture, not demos | Modular streaming pipelines built to scale, not impress in a slide deck |
| Compliance by design | HIPAA, GDPR, SOC 2, PCI-DSS, BIPA engineered in from day one |
| Deep enterprise integration | CRM, EHR, ERP, telephony, payments: all connected, all audited |
| Multilingual deployment | Locale-specific flows with data residency handled properly |
| Evaluation and ops muscle | Eval harness, call sampling, versioned prompts, observability |

If you’re scoping a voice AI project, re-scoping a stalled one, or auditing a deployment that isn’t hitting its numbers, we can help. Our AI development services teams work with enterprise leaders across the US and globally.

Let’s talk. Schedule a consultation, and we’ll share what we’d build for your specific use case — architecture, cost range, timeline, and compliance posture.

FAQs

Q. Why do most AI voice agent projects fail?

A. Rarely the model. The usual causes are blown latency budgets, weak memory, poor enterprise integrations, and unrealistic scoping. Gartner attributes 57% of failed AI initiatives to unrealistic expectations and 38% to poor data quality.

Q. What are the biggest challenges in AI voice agent implementation?

A. Real-time latency first. Then accents, noisy backgrounds, broken context, stubborn backend integrations, and compliance under peak load.

Q. What is the role of a control layer in AI voice agents?

A. Blast shield between the LLM and your systems. Enforces rules, checks tool calls, grounds answers and logs everything. Skip it, and you’ve wired an unpredictable model to production data.

Q. What causes integration issues in AI voice systems?

A. Brittle connections to legacy systems, no write safeguards, and total failure when downstream services blink. Fixed with event-driven design and contract-first APIs.

Q. How can businesses improve AI voice agent reliability?

A. Treat prompts like code. Measure call outcomes, run automated evals on every change, sample real calls weekly, and feed human-agent bug reports back into training.

Q. What are the common challenges faced by AI voice agents in customer service?

A. Accents, jargon, angry callers, missed escalation cues, and gamed success metrics.

Q. What are common hurdles in conversational AI agent deployment?

A. Bad data, tangled integrations, messy compliance, unclear ownership. Gartner: 60% of projects without AI-ready data will be abandoned through 2026.

Q. How do you overcome voice recognition accuracy issues in virtual assistants?

A. Streaming ASR with custom vocabularies and phonetic lexicons. Confidence-based fallbacks so the LLM doesn’t hallucinate around bad input.

Q. What are the main privacy concerns with AI-powered voice interfaces?

A. Voice is biometric. GDPR, HIPAA, BIPA all apply. Consent, retention windows, biometric template protection, and vendor prompt-retention policies are the four big worries.

Q. What are the best practices for mitigating latency in real-time voice AI interactions?

A. Stream everything. Co-locate near the telephony edge. Smaller grounded models beat bigger ungrounded ones. Monitor P95, not average.

Q. Can I integrate AI voice agents with my existing CRM system?

A. Yes — and it’s the hardest part of the job. Event-driven architectures and contract-first APIs are how we pull context from CRM, helpdesk, and billing into one session.