Question:

MoE models contain far more parameters than dense Transformers, yet they can run faster at inference. How is that possible?

Difference between Transformers & Mixture of Experts (MoE)

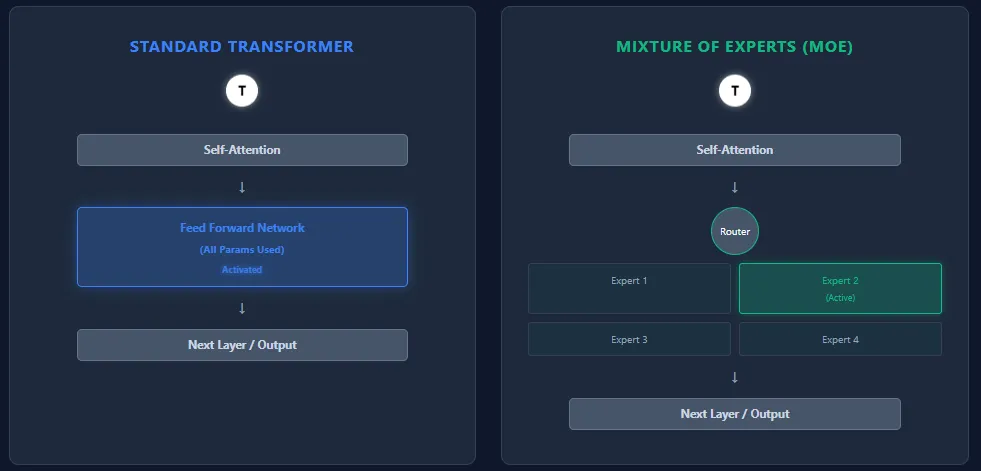

Standard (dense) Transformers and Mixture of Experts (MoE) models share the same backbone architecture, self-attention layers followed by feed-forward layers, but they differ fundamentally in how they use parameters and compute. In the comparison below, "Transformer" means the dense variant.

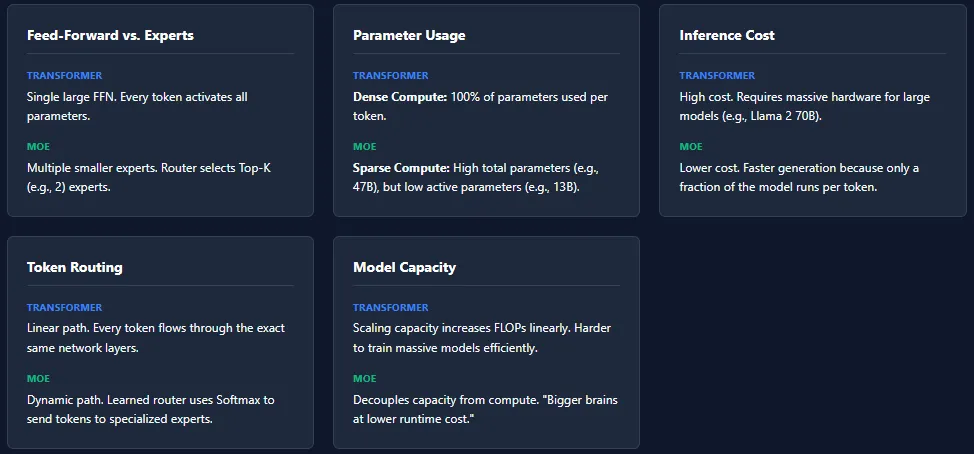

Feed-Forward Network vs Experts

- Transformer: Each block contains a single large feed-forward network (FFN). Every token passes through this FFN, activating all parameters during inference.

- MoE: Replaces the single FFN with several parallel feed-forward networks, called experts. A routing network selects only a few experts (Top-K) per token, so only a fraction of the layer's feed-forward parameters is active for any given token.
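To make the contrast concrete, here is a minimal NumPy sketch of a dense FFN next to a Top-K MoE layer. The sizes, the ReLU activation, and the choice to apply the softmax over the selected experts only are illustrative assumptions, not details taken from any particular model.

```python
import numpy as np

rng = np.random.default_rng(0)

D_MODEL, D_HIDDEN = 16, 64      # hypothetical toy sizes
N_EXPERTS, TOP_K = 8, 2         # illustrative values


def make_ffn(d_model, d_hidden):
    """Create weights for one two-layer feed-forward network."""
    return (rng.normal(0, 0.02, (d_model, d_hidden)),
            rng.normal(0, 0.02, (d_hidden, d_model)))


def ffn(x, weights):
    """Dense FFN: every weight is used for every token."""
    w_in, w_out = weights
    return np.maximum(x @ w_in, 0.0) @ w_out  # ReLU activation (assumed)


def moe_layer(x, experts, w_router, top_k):
    """Sparse MoE layer: each token runs through only top_k experts."""
    logits = x @ w_router                                # (tokens, n_experts)
    top_idx = np.argsort(logits, axis=-1)[:, -top_k:]    # chosen experts per token
    top_logits = np.take_along_axis(logits, top_idx, axis=-1)
    # Softmax over the selected experts only, so the mixing weights sum to 1.
    gates = np.exp(top_logits - top_logits.max(axis=-1, keepdims=True))
    gates /= gates.sum(axis=-1, keepdims=True)

    out = np.zeros_like(x)
    for e, weights in enumerate(experts):
        token_ids, slot = np.nonzero(top_idx == e)       # tokens routed to expert e
        if token_ids.size:
            gate = gates[token_ids, slot][:, None]       # (n_routed, 1)
            out[token_ids] += gate * ffn(x[token_ids], weights)
    return out, top_idx


experts = [make_ffn(D_MODEL, D_HIDDEN) for _ in range(N_EXPERTS)]
w_router = rng.normal(0, 0.02, (D_MODEL, N_EXPERTS))
tokens = rng.normal(size=(5, D_MODEL))

y, chosen = moe_layer(tokens, experts, w_router, TOP_K)
print(y.shape)    # (5, 16)
print(chosen)     # indices of the TOP_K experts picked for each token
```

Each token touches only `TOP_K` of the `N_EXPERTS` weight sets, which is where the compute saving comes from. Production implementations batch tokens per expert on the accelerator instead of looping in Python.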

Parameter Usage

- Transformer: All parameters across all layers are used for every token → dense compute.

- MoE: Has more total parameters, but activates only a small portion per token → sparse compute. Example: Mixtral 8×7B has 46.7B total parameters, but uses only ~13B per token.
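The Mixtral figures can be roughly reproduced with a short parameter count. The hyperparameters below are recalled from the published Mixtral 8×7B configuration and are an assumption here, since the article does not state them. Treat the result as a back-of-the-envelope check, not an official breakdown.

```python
# Assumed Mixtral 8x7B hyperparameters (not stated in this article).
HIDDEN = 4096          # model width
INTERMEDIATE = 14336   # expert FFN width
LAYERS = 32
VOCAB = 32000
N_HEADS, N_KV_HEADS, HEAD_DIM = 32, 8, 128
N_EXPERTS, TOP_K = 8, 2

# One expert is a gated FFN with three weight matrices.
expert = 3 * HIDDEN * INTERMEDIATE
# Grouped-query attention: Q and O are full width, K and V are narrower.
attention = 2 * HIDDEN * (N_HEADS * HEAD_DIM) + 2 * HIDDEN * (N_KV_HEADS * HEAD_DIM)
router = HIDDEN * N_EXPERTS
norms = 2 * HIDDEN                          # two norm weight vectors per layer
embeddings = 2 * VOCAB * HIDDEN + HIDDEN    # input embedding, output head, final norm


def params(experts_per_layer):
    """Parameter count when `experts_per_layer` experts are counted in each layer."""
    per_layer = attention + router + norms + experts_per_layer * expert
    return LAYERS * per_layer + embeddings


total = params(N_EXPERTS)   # all experts stored
active = params(TOP_K)      # experts actually used for one token

print(f"total:  {total / 1e9:.1f}B")    # total:  46.7B
print(f"active: {active / 1e9:.1f}B")   # active: 12.9B
```

The count also shows why the total is below 8 × 7B = 56B: only the feed-forward experts are replicated, while attention and embeddings are shared.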

Inference Cost

- Transformer: High inference cost, because every parameter is active for every token. Running a large dense model such as Llama 2 70B requires powerful hardware.

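- MoE: Lower per-token compute because only K experts per layer are active for each token. This can make MoE models faster and cheaper to run than dense models of similar total size, especially at large scales. All experts must still be held in memory, so the saving is in compute, not in memory footprint.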
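A rough way to compare the two cases is the common rule of thumb that a forward pass costs about 2 FLOPs per active parameter per token. The sketch below applies it to the models named above. The rule ignores attention over the context, the 70B figure is the nominal size, and 16-bit weights are assumed, so treat the numbers as order-of-magnitude estimates only.

```python
def forward_gflops_per_token(active_params):
    """Rule of thumb: about 2 FLOPs (one multiply, one add) per active weight."""
    return 2 * active_params / 1e9


def weight_memory_gb(total_params, bytes_per_param=2):
    """Memory for the weights alone, assuming 16-bit precision by default."""
    return total_params * bytes_per_param / 1e9


models = {
    # name: (total parameters, parameters active per token)
    "Llama 2 70B (dense)": (70e9, 70e9),
    "Mixtral 8x7B (MoE)": (46.7e9, 13e9),
}

for name, (total, active) in models.items():
    print(f"{name}: {forward_gflops_per_token(active):.0f} GFLOPs/token, "
          f"{weight_memory_gb(total):.0f} GB of weights")
```

Under these assumptions the MoE model needs roughly a fifth of the compute per token, while its memory footprint shrinks far less, because every expert has to stay loaded.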

Token Routing

- Transformer: No routing. Every token follows the exact same path through all layers.

- MoE: A learned router assigns tokens to experts based on softmax scores. Different tokens select different experts, and different layers may activate different experts, which increases specialization and model capacity.

Model Capacity

- Transformer: To scale capacity, the main options are adding more layers or widening the FFN, and both increase per-token FLOPs roughly in proportion to the added parameters.

- MoE: Can scale total parameters massively by adding experts while per-token compute stays roughly constant, because K stays fixed. This enables “bigger brains at lower runtime cost.”

While MoE architectures offer massive capacity with lower inference cost, they introduce several training challenges. The most common issue is expert collapse, where the router repeatedly selects the same experts, leaving others under-trained.

Load imbalance is another challenge—some experts may receive far more tokens than others, leading to uneven learning. To address this, MoE models rely on techniques like noise injection in routing, Top-K masking, and expert capacity limits.

These mechanisms help keep all experts active and balanced, but they also make MoE systems more complex to train than standard Transformers.
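The three techniques named above can be sketched in a few lines. This is a simplified illustration under stated assumptions: the function `route`, the Gaussian noise model, the capacity factor of 1.25, and the artificially collapsed router are all hypothetical choices for the demo, and tokens that overflow a full expert are simply left unassigned.

```python
import numpy as np

rng = np.random.default_rng(0)


def route(logits, top_k, capacity, noise_std=0.0):
    """Top-K routing with optional noise and a per-expert capacity limit.

    Returns a boolean (tokens, experts) assignment matrix.
    """
    n_tokens, n_experts = logits.shape
    if noise_std > 0:
        # Noise injection: perturb scores so near-ties do not always
        # resolve in favour of the same expert.
        logits = logits + rng.normal(0.0, noise_std, logits.shape)

    # Top-K masking: keep only the k best experts for each token.
    top_idx = np.argsort(logits, axis=-1)[:, -top_k:]

    assigned = np.zeros((n_tokens, n_experts), dtype=bool)
    load = np.zeros(n_experts, dtype=int)
    for t in range(n_tokens):
        for e in top_idx[t]:
            # Capacity limit: an expert that is already full takes no more tokens.
            if load[e] < capacity:
                assigned[t, e] = True
                load[e] += 1
    return assigned


N_TOKENS, N_EXPERTS, TOP_K = 64, 8, 2

# Hypothetical collapsed router: expert 0 almost always scores highest.
logits = rng.normal(size=(N_TOKENS, N_EXPERTS))
logits[:, 0] += 5.0

capacity = int(1.25 * N_TOKENS * TOP_K / N_EXPERTS)   # capacity factor 1.25 -> 20
unlimited = route(logits, TOP_K, capacity=N_TOKENS)
limited = route(logits, TOP_K, capacity=capacity)

print("tokens per expert, no limit:  ", unlimited.sum(axis=0))
print("tokens per expert, with limit:", limited.sum(axis=0))
```

Without a limit, expert 0 absorbs nearly every token. With the limit, its load is capped at `capacity`, which bounds the imbalance but does not by itself teach the router to spread tokens more evenly.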