In this article, you will learn about seven leading LLM observability tools that help AI engineers monitor, evaluate, and debug large language model applications running in production.

Topics we will cover include:

- What LLM observability is and why it matters for production AI systems.

- The core capabilities of each tool, including tracing, evaluation, cost tracking, and prompt management.

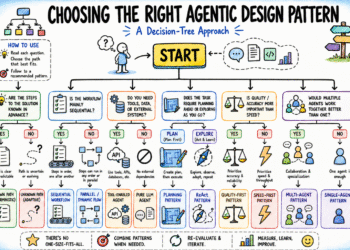

- How to choose the right tool based on your stack, team size, and immediate priorities.

LLM Observability Tools for Reliable AI Applications

Introduction

Large language models (LLMs) now power everything from customer service bots to autonomous coding agents. Getting them to work in a demo is one thing, but keeping them working reliably at scale is another. Responses can degrade in quality over time, costs can spike without warning, and a bad prompt change can affect many users before anyone notices.

LLM observability tools give you visibility into what your models are actually doing in production. They trace every step of a request through your application, evaluate output quality against defined criteria, track token costs per user and session, and surface regressions before they compound. Unlike general-purpose monitoring, they understand the structure of LLM calls — prompts, completions, tool use, retrieval steps — and give you metrics that map directly to those concepts.

As an AI engineer shipping LLM-powered applications, you need tools that handle:

- Distributed tracing across chains, agents, and tool calls

- Output quality evaluation

- Cost and token usage tracking across users and sessions

- Prompt versioning and regression testing

- Production alerting and debugging workflows

Let’s explore each tool.

1. LangSmith

LangSmith, built by the LangChain team, covers the full development and production lifecycle for LLM applications. It’s the most tightly integrated option for teams running LangChain or LangGraph.

Here’s what makes LangSmith a strong choice for LLM observability:

- Captures every agent decision, tool call, and intermediate step in a visual trace, making it straightforward to find exactly where a chain or agent went wrong

- Supports both offline evaluation against curated datasets before deployment and online evaluation of live production traffic, letting you catch quality regressions before and after shipping

- Works beyond the LangChain ecosystem; integrates with the OpenAI SDK, Anthropic SDK, CrewAI, Pydantic AI, LlamaIndex, and any OpenTelemetry-compatible setup

- Includes human annotation queues, LLM-as-judge scoring, heuristic checks, and custom evaluators in Python or TypeScript for flexible evaluation pipelines

- Offers cloud-hosted, bring-your-own-cloud, and fully self-hosted deployment for teams with data residency requirements

LangSmith Docs and the LangSmith Cookbook on GitHub are good starting points for hands-on examples.

Best for: Teams using LangChain or LangGraph who want the deepest native integration, and teams that want tracing and evaluation in a single platform.

2. Langfuse

Langfuse is the leading open-source LLM observability platform, covering tracing, prompt management, evaluation, and datasets in a single tool. It can be self-hosted entirely for free, making it the default choice for teams with data sovereignty or compliance requirements.

What makes Langfuse a strong choice for open-source observability:

- Released under an MIT license, it can be self-hosted with no usage limits, licensing fees, or vendor dependency

- Built on OpenTelemetry standards, so it integrates naturally with existing observability infrastructure and distributed tracing setups

- Treats prompt management as a first-class concern, so teams can version, deploy, and compare prompts, then track how changes affect evaluation scores over time

- Supports LLM-as-judge scoring, human annotation, and custom metrics for both online (production) and offline (dataset) evaluation

- Integrates with LangChain, LlamaIndex, CrewAI, Haystack, and direct API calls across all major model providers

The Langfuse Documentation and Langfuse Cookbook on GitHub provide practical integration guides for most frameworks.

Best for: Teams that want open-source flexibility, those with compliance or data privacy constraints, and developers who want comprehensive features without vendor lock-in.

3. Arize Phoenix

Arize Phoenix is an open-source observability and evaluation platform built by Arize AI. It’s designed around OpenTelemetry and the OpenInference tracing convention from the start, which means traces can flow to any compatible backend and not just the Arize platform.

Here’s why Phoenix is a strong choice for evaluation-focused and RAG-heavy applications:

- Built on OpenTelemetry and OpenInference, giving teams full data portability and avoiding lock-in at the instrumentation layer

- Provides out-of-the-box instrumentation for OpenAI Agents SDK, Anthropic SDK, LangGraph, CrewAI, LlamaIndex, and Vercel AI SDK, among others

- Includes dedicated retrieval-augmented generation (RAG) evaluation metrics covering retrieval relevance, document chunk visualization, and query analysis, which is particularly useful for diagnosing retrieval pipeline failures

- Captures complete multi-step agent traces and supports structured evaluation workflows for assessing how agents reason and act across turns

- Runs locally in a notebook, Docker container, or Kubernetes cluster, with an optional managed deployment through the Arize AX enterprise platform

The Arize Phoenix Documentation and Phoenix Tutorials on GitHub cover both quick setup and advanced evaluation patterns.

Best for: Teams building RAG-heavy applications, those that need strong evaluation tooling, and engineers who want full data control with an optional enterprise upgrade path.

4. Datadog LLM Observability

Datadog’s LLM Observability module extends its unified monitoring platform into AI applications. For organizations already running Datadog for infrastructure, APM, and logs, this can be a great choice for adding observability to LLM-powered applications.

What makes Datadog a strong choice for enterprise LLM monitoring:

- Auto-instruments OpenAI, Anthropic, LangChain, and Amazon Bedrock calls with no code changes, immediately capturing latency, token usage, and errors

- Correlates LLM traces directly with infrastructure metrics, so a latency spike in an LLM call can be traced to a database issue or resource constraint in the same dashboard

- Includes production-grade alerting with anomaly detection, threshold alerts, and integrations with PagerDuty and Slack

- Built-in security scanning flags prompt injection attempts and helps identify data leaks in production traffic

Datadog’s LLM Observability Documentation and Automatic Instrumentation for LLM Observability are good places to get started.

Best for: Enterprises already using Datadog who want LLM behavior tied directly to infrastructure health without introducing a new vendor.

5. Lunary

Lunary is an open-source LLM observability platform focused on making production monitoring accessible without heavy setup or overhead. It covers tracing, cost tracking, user analytics, and evaluation in a lightweight package that can be self-hosted or run on managed cloud.

Here’s why Lunary works well for teams that want fast, low-friction observability:

- Captures traces, user sessions, and conversation threads with minimal instrumentation

- Tracks token usage and costs per user, per session, and per model, making it practical to understand spending patterns before they become a problem

- Includes a built-in prompt playground and version management, so prompt changes can be tested and compared without leaving the platform

- Supports human feedback collection directly from end users, feeding evaluation signals from real interactions rather than only from internal annotation

- Besides a Python SDK and native integration with LangChain JS, it supports multiple JavaScript runtimes

The Lunary Documentation and Lunary GitHub repository are good starting points for setup and self-hosting.

Best for: Early-stage teams that want immediate observability with minimal engineering investment, and developers who need cost tracking and user analytics alongside tracing.

6. TruLens

TruLens, developed by TruEra, is an open-source framework built specifically around evaluation. Where most observability tools treat evaluation as one feature among many, TruLens makes it the central workflow, with a particular focus on RAG pipelines and grounding LLM outputs in retrieved evidence.

Here’s why TruLens is a strong choice for evaluation-first workflows:

- The TruLens RAG Triad provides three core metrics — answer relevance, context relevance, and groundedness — giving a structured way to evaluate whether RAG pipelines are actually retrieving and using evidence correctly

- Supports LLM-as-judge evaluation using any model as the evaluator, with built-in feedback functions covering hallucination detection, toxicity, sentiment, and custom criteria

- Integrates with LlamaIndex and LangChain, and works with any Python-based LLM application through a decorator-based pattern

- Records all evaluation results in a local database and provides a dashboard for comparing runs, tracking metrics over time, and identifying which changes helped or hurt quality

- Works entirely locally with no data leaving your environment unless you choose to use the managed TruEra platform

The TruLens Documentation and TruLens GitHub repository are practical starting points, along with the RAG Triad guide for evaluation-focused projects.

Best for: Teams building RAG applications who need rigorous output evaluation, and developers who want a dedicated evaluation framework rather than evaluation bolted onto a monitoring tool.

7. Helicone

Helicone takes a different integration approach from every other tool on this list: rather than SDK instrumentation, it works as an HTTP proxy. You point your LLM API calls at Helicone’s endpoint instead of the provider’s endpoint directly, and logging happens automatically with no code changes beyond updating a base URL.

Here’s why Helicone works well for teams that want observability up and running fast:

- The proxy-based approach means you can go from zero visibility to full request logging in minutes, without restructuring application code or adding instrumentation logic

- Tracks token usage and costs per request, per user, and per session, making it practical to monitor spending patterns across different parts of an application

- Includes request caching at the proxy layer, which can reduce API costs for applications with repeated or similar queries

- Supports per-user rate limiting and usage tracking, useful for multi-tenant applications where you need to manage consumption across different customer segments

- Open source and fully self-hostable for teams with data privacy requirements

Helicone’s Documentation and the Helicone GitHub repository cover setup, self-hosting, and advanced configuration. To get started, check out 4 Essential Helicone Features to Optimize Your AI App’s Performance.

Best for: Teams that want observability running with minimal code restructuring, and early-stage products where cost tracking and request logging are the immediate priority.

Wrapping Up

These tools cover LLM observability from different angles, and the right choice depends on your stack, team size, and what you need most right now.

| Tool / Platform | Best Use Case |

|---|---|

| LangSmith | Lowest-friction starting point for teams already working within the LangChain ecosystem |

| Langfuse | Strong open-source option for teams that want full control over infrastructure and data sovereignty |

| Arize Phoenix | Another strong open-source observability platform suitable for teams prioritizing control and transparency |

| Datadog LLM Observability | Best suited for enterprises already using Datadog, allowing them to add LLM monitoring without introducing another vendor |

| Lunary | Good choice for teams that want fast setup along with clear cost tracking and usage visibility |

| Helicone | Lightweight solution focused on quick integration and strong visibility into LLM costs and request monitoring |

| TruLens | Purpose-built for evaluation workflows, especially useful for teams building and assessing RAG-based applications |

To build practical experience, here are a few project ideas to explore these tools hands-on:

- Instrument a LangGraph research agent with LangSmith and build an evaluation dataset from its production traces

- Self-host Langfuse and connect it to a multi-provider application that routes between OpenAI and Anthropic

- Use Arize Phoenix to evaluate a RAG pipeline with the retrieval relevance and groundedness metrics

- Set up Datadog LLM Observability on an existing application and create a dashboard correlating LLM latency with infrastructure metrics

- Build a customer-facing chatbot with Lunary to track per-user costs and collect inline feedback

- Evaluate a RAG application end-to-end with TruLens using the RAG Triad and compare two retrieval configurations

- Add Helicone to an existing OpenAI integration and enable caching to measure cost reduction on repeated queries

Happy building!