Key takeaways:

- Autonomous AI agents are entering production systems, which increases enterprise operational and compliance risk.

- Governance must shift from model oversight to runtime supervision of autonomous AI actions.

- Enterprise AI governance requires lifecycle controls, observability systems, and clear decision accountability.

- Layered governance architecture controls agent identity, system access, monitoring, and compliance oversight.

- Structured governance frameworks allow enterprises to scale autonomous AI safely across business units.

Autonomous AI agents now execute real business tasks inside enterprise systems. Industry forecasts suggest that 15% of daily business decisions could be handled autonomously by 2028, showing how quickly autonomous systems are entering operational environments.

Many organizations have moved beyond pilots and deployed agents in production. These systems call APIs, access enterprise data, trigger workflows, and interact with financial platforms. They support procurement, IT operations, fraud monitoring, and customer service. While this autonomy improves speed and scale, it also increases operational and compliance risk.

Traditional AI governance focused on predictive models reviewed by humans before action. That model no longer holds. Autonomous agents act in real time, creating a clear governance gap across enterprise environments.

Organizations now require a governance model that controls AI decision autonomy and system access while ensuring accountability for every action. Strong oversight reduces regulatory exposure and protects critical operations.

An agentic AI governance framework addresses this shift through technical controls, policy oversight, and enterprise risk management. This AI governance implementation guide outlines how to build an enterprise AI governance framework for autonomous systems, including governance architecture and practical adoption steps.


Key Components of Agentic AI Systems

Agentic AI systems rely on several technical components that allow software agents to interpret goals, reason through tasks, and execute actions across enterprise systems. Unlike traditional models that only produce predictions, AI agents plan actions and complete multi-step workflows across applications and data services.

These systems combine reasoning engines, memory layers, orchestration logic, and integration connectors, making enterprise AI agents fundamentally different from traditional automation tools that interact with enterprise infrastructure.

Governance controls such as agent IAM, agent identity and scope definition, and agent permissions regulate what each agent can access and what actions it can perform.

| Component | Role |
|---|---|
| AI Agents | Software systems that perform tasks across enterprise tools |
| Goal Interpretation | Converts instructions into executable tasks |
| Reasoning Engines | Determines how an agent completes tasks |
| Memory | Stores context and prior interactions |
| Multi-Step Workflows | Sequences of coordinated actions |
| Orchestration | Manages workflow execution across tools |
| Agent Connector | Connects agents to APIs and enterprise systems |
| Agent IAM | Identity management for AI agents |
| Agent Identity and Scope Definition | Defines system access boundaries |
| Agent Permissions | Limits allowed actions |
| KPMG TACO Framework | Model for trust, accountability, control, and oversight |

How Autonomous AI Systems Change Enterprise Governance Requirements

Autonomous AI systems require governance that supervises real operational actions across enterprise systems.

Earlier AI governance focused on models and datasets. Teams reviewed training data, validated model accuracy, and tracked model drift. Human teams still controlled the final decision. The model produced a prediction, and a person approved the action.

Autonomous agents change this structure. As agent interoperability expands, modern agents operate across multiple enterprise systems and perform tasks without waiting for manual approval. Many organizations now deploy agents across functions, from customer service to procurement, that:

- Execute financial or operational transactions

- Interact with internal and external APIs

- Coordinate multi-step business workflows

- Make operational decisions across systems

These actions occur inside live infrastructure. Each action touches data, systems, or customer processes. The risk profile shifts from model error to operational impact.

Governance must now supervise system behavior at runtime. Model validation alone cannot control what an autonomous agent does once it begins executing tasks. Enterprises require three operational controls:

- Runtime monitoring that records every agent action

- Authorization controls that approve or block system access

- System accountability that traces each decision and action

These controls define autonomous system governance. The objective is direct supervision of AI actions across infrastructure, workflows, and enterprise data.

The governance scope expands in several areas.

| Traditional Governance | Agentic Governance |
|---|---|
| Model validation | Action governance |
| Data bias monitoring | Decision accountability |
| Model lifecycle control | Autonomous system control |

Enterprises now design governance for agentic AI systems that manage both AI models and the operational decisions those models execute inside enterprise environments.


Enterprise Risk Exposure Created by Agentic AI Systems

Agentic AI systems expand enterprise risk because autonomous agents can act inside critical business systems.

Industry adoption continues to accelerate. 79% of organizations report deploying AI agents, and 88% plan to increase AI budgets in the next year. As more enterprises introduce autonomous systems into production environments, AI governance risk management becomes a critical operational priority.

Earlier AI deployments produced reports, forecasts, or alerts. Human teams reviewed the output and decided what action to take. Autonomous agents remove that step. They access systems, run tasks, and execute decisions across operational environments.

Enterprise leaders must view agentic AI through a risk management lens. These systems interact with infrastructure, financial processes, and regulated data. Each autonomous action carries operational and regulatory consequences.

Autonomous Decision Risk

Autonomous agents can take actions that directly affect business operations. A supply chain agent may approve orders. A customer service agent may update billing records. A financial monitoring agent may freeze accounts during fraud detection.

These decisions occur without manual approval once the system is active. Organizations must track how agents reach decisions and who authorized the agent to act. Strong AI decision accountability frameworks create a clear record of authority, actions, and outcomes.

Security and Infrastructure Risk

Agentic systems connect to core enterprise infrastructure. They interact with services such as:

- Internal APIs

- Operational databases

- Enterprise applications

- Financial systems

Unauthorized access or faulty logic can disrupt business systems. Security teams must apply strict AI agent security mechanisms that govern system access, tool permissions, and runtime activity.

Compliance and Regulatory Risk

Autonomous systems often interact with sensitive data and regulated processes. Many industries must follow strict regulatory requirements, including:

- Data protection laws such as GDPR and CCPA, which increasingly shape AI data governance

- Financial compliance rules

- Industry regulations in healthcare, banking, and insurance

Organizations must deploy AI compliance frameworks for enterprises that record decisions, track data access, and produce audit logs for regulators.

Operational and Financial Risk

Autonomous agents can trigger unintended actions across enterprise workflows. A faulty agent may generate incorrect transactions, modify system records, or launch large numbers of automated tasks.

This risk is especially acute in banking, where governance controls and enterprise AI risk management solutions must monitor agent behavior and halt suspicious activity before it spreads.

Core Dimensions of an Enterprise Agentic AI Governance Framework

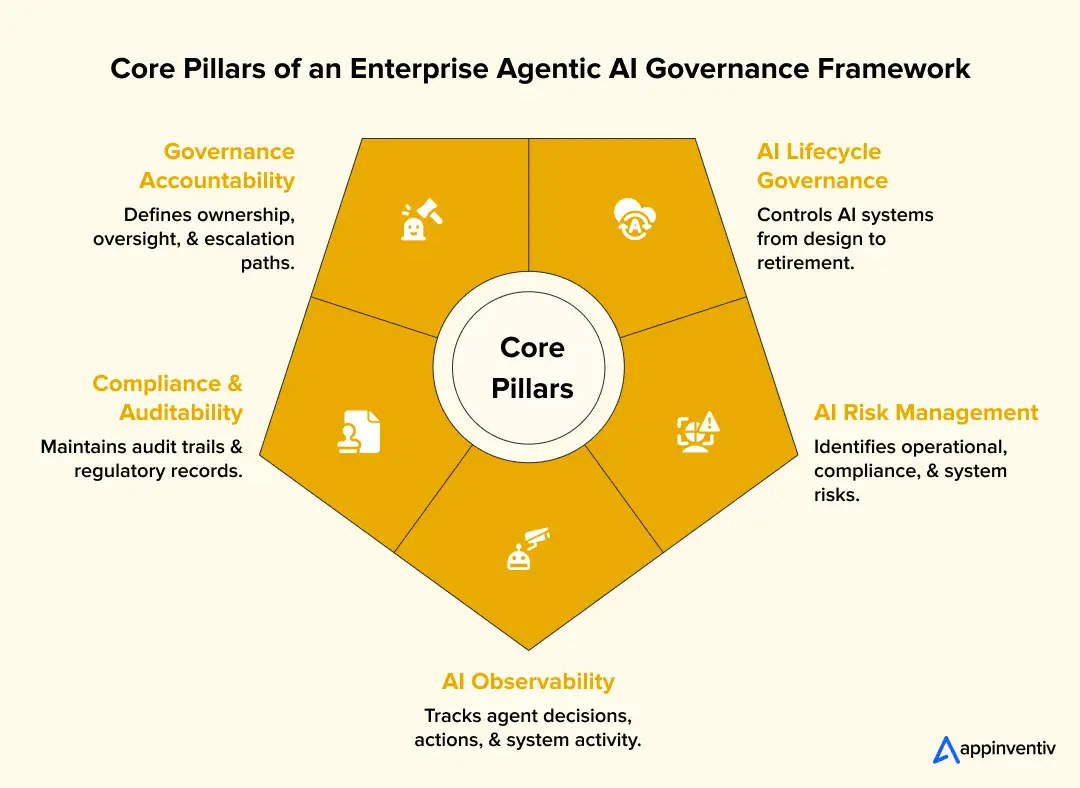

Enterprises that build and deploy agents, often through AI agent development services, introduce new automation capabilities across enterprise systems. A clear governance structure rests on five pillars that control these autonomous systems across their full operating lifecycle.

These pillars give enterprises a practical model for supervising autonomous agents across infrastructure, data systems, and business workflows, and they form the foundation of a responsible framework. Each pillar addresses a distinct control layer inside an agentic AI governance framework.

Pillar 1 – AI Lifecycle Governance

Governance must span the entire lifecycle of an autonomous system. Controls begin at system design and continue through production operation.

Lifecycle supervision covers:

- Design and system architecture

- Deployment approval and testing

- Production monitoring

- System retirement or replacement

This structure forms the foundation of AI lifecycle governance across enterprise AI programs.

Pillar 2 – AI Risk Management Framework for Enterprises

Enterprises should classify each autonomous system by the type and level of risk it carries. This classification becomes especially critical in regulated sectors. For example, healthcare AI systems face compliance risk tied to patient data, which must be managed through the same structured risk model.

Risk categories include:

- Operational risk from system actions

- Compliance risk linked to regulated data

- Reputational risk tied to AI decisions

- System risk from infrastructure interactions

This model creates an AI risk management framework for enterprises that aligns AI governance with enterprise risk management.

Pillar 3 – AI Observability and Monitoring

Autonomous agents require constant visibility during operation, which is why AI guardrails and real-time monitoring must be built in from the start.

Monitoring systems must record:

- Agent decisions

- System actions

- External tool usage

These capabilities form the core of observability and monitoring for enterprise AI systems.

Pillar 4 – Compliance and Auditability

Autonomous systems must produce records that support regulatory oversight and internal review.

Audit capabilities include:

- Traceable decision logs

- Compliance validation checks

- Regulatory reporting records

These mechanisms support strong AI compliance and auditability across enterprise operations. The requirement is particularly demanding in finance, where audit trails directly intersect with regulatory reporting.

Pillar 5 – Governance Accountability Framework

Governance requires clear organizational ownership, and teams that follow a responsible AI checklist find it easier to ensure each autonomous system operates under defined supervision.

This structure defines:

- System ownership

- Escalation procedures

- Executive oversight

These responsibilities form the enterprise AI governance operating model.

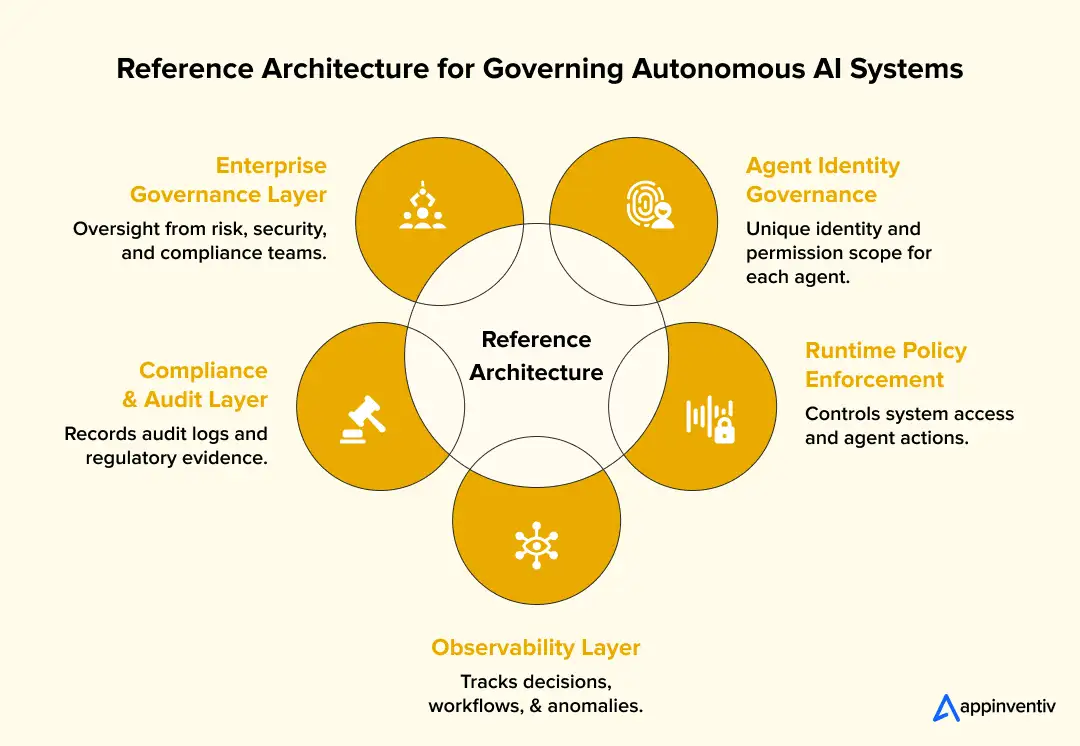

Reference Architecture for Governing Autonomous AI Systems

Enterprises govern autonomous agents through a layered AI governance architecture that supports an agentic AI governance framework supervising identity, actions, monitoring, and compliance.

Analysts expect one-third of enterprise software applications to include agentic AI by 2028. As AI agent development costs continue to fall, adoption will accelerate, which makes governance controls across enterprise infrastructure more urgent.

This architecture places technical controls around every agent action inside enterprise systems and enables structured AI governance for autonomous agents operating across enterprise infrastructure. Each layer enforces a specific form of control that limits risk and maintains operational accountability.

Layer 1 – Agent Identity Governance

Every autonomous agent must operate under a verified system identity. Identity controls enable organizations to treat AI agents as managed actors inside enterprise infrastructure.

Identity governance defines:

- Unique identity credentials for each agent

- Defined permission scope across enterprise systems

- Restricted access to APIs, data stores, and applications

These controls prevent unauthorized access and create traceability for every agent action.
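As a rough illustration of the identity controls above, the sketch below models an agent as a managed actor with a unique ID, an accountable owner, and an explicit scope list. The `AgentIdentity` class, its field names, and the scope strings such as `crm:read` are hypothetical and not tied to any specific IAM product.

```python
from dataclasses import dataclass, field
from uuid import uuid4


@dataclass(frozen=True)
class AgentIdentity:
    """A managed identity for one agent (hypothetical structure)."""

    name: str
    owner: str                 # team accountable for the agent
    scopes: frozenset[str]     # explicit grants, e.g. {"crm:read"}
    agent_id: str = field(default_factory=lambda: str(uuid4()))

    def can(self, scope: str) -> bool:
        """Return True only if the scope was explicitly granted."""
        return scope in self.scopes


support_agent = AgentIdentity(
    name="support-agent",
    owner="customer-service-platform",
    scopes=frozenset({"crm:read", "billing:read"}),
)

print(support_agent.can("crm:read"))       # True
print(support_agent.can("billing:write"))  # False
```

Making the identity immutable (`frozen=True`) is a design choice in this sketch: a scope change then requires issuing a new identity, which leaves a clearer trail than editing permissions in place.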

Layer 2 – Runtime Policy Enforcement

Runtime policies control what actions an agent may perform once it begins operating.

Policy enforcement systems regulate:

- System access permissions

- Action authorization before execution

- Operational limits such as transaction thresholds

These runtime rules serve as core AI safety and control mechanisms for autonomous systems.
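A minimal sketch of how such a runtime check might look, assuming a deny-by-default rule set with a per-transaction threshold. The `Action` and `Policy` types, the action names, and the limit values are illustrative assumptions; a production system would more likely delegate this decision to a dedicated policy engine.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Action:
    agent_id: str
    kind: str              # e.g. "payment.create" (hypothetical name)
    amount: float = 0.0


@dataclass(frozen=True)
class Policy:
    allowed_kinds: frozenset[str]
    max_amount: float      # per-transaction threshold


def authorize(action: Action, policy: Policy) -> tuple[bool, str]:
    """Check an action before execution; anything not allowed is denied."""
    if action.kind not in policy.allowed_kinds:
        return False, f"action '{action.kind}' is not permitted"
    if action.amount > policy.max_amount:
        return False, "amount exceeds the transaction threshold"
    return True, "ok"


policy = Policy(allowed_kinds=frozenset({"payment.create"}), max_amount=500.0)

small = authorize(Action("procurement-agent", "payment.create", 120.0), policy)
large = authorize(Action("procurement-agent", "payment.create", 9000.0), policy)
delete = authorize(Action("procurement-agent", "vendor.delete"), policy)

print(small)   # (True, 'ok')
print(large)   # (False, 'amount exceeds the transaction threshold')
print(delete)  # (False, "action 'vendor.delete' is not permitted")
```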

Layer 3 – Observability and Monitoring Layer

Autonomous agents require continuous monitoring during operation. Monitoring systems record the full operational trail of agent activity.

Monitoring systems capture:

- Decision chains across reasoning steps

- Workflow execution across systems

- Anomaly detection for unusual activity

These capabilities form the backbone of enterprise AI observability and monitoring.
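Anomaly detection can take many forms. One simple possibility, sketched here as an assumption rather than a recommended design, is a sliding-window rate check that flags an agent performing more actions than expected in a short period. The class name and thresholds are hypothetical.

```python
from collections import deque


class RateAnomalyDetector:
    """Flags more than `max_actions` within `window_seconds`."""

    def __init__(self, max_actions: int, window_seconds: float) -> None:
        self.max_actions = max_actions
        self.window_seconds = window_seconds
        self._timestamps: deque[float] = deque()

    def record(self, timestamp: float) -> bool:
        """Record one action; return True if the rate is now anomalous."""
        self._timestamps.append(timestamp)
        # Discard actions that have fallen out of the window.
        while timestamp - self._timestamps[0] > self.window_seconds:
            self._timestamps.popleft()
        return len(self._timestamps) > self.max_actions


detector = RateAnomalyDetector(max_actions=3, window_seconds=10.0)
flags = [detector.record(t) for t in (0.0, 1.0, 2.0, 3.0, 20.0)]
print(flags)  # [False, False, False, True, False]
```

The fourth action trips the detector because four actions fall inside the ten-second window; by the time of the fifth, the earlier actions have aged out.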

Layer 4 – Compliance and Audit Layer

Enterprises must maintain records that support internal review and regulatory oversight, which is why many invest in compliance management software purpose-built to handle audit trails and reporting at scale.

Audit infrastructure produces:

- Detailed audit trails for agent actions

- Compliance records for regulatory reporting
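One way to make an audit trail tamper-evident is to chain entries by hash, so that editing an earlier record invalidates everything after it. Hash chaining is an added assumption here, not something the framework above prescribes, and the `AuditTrail` class and its field names are hypothetical.

```python
import hashlib
import json

GENESIS_HASH = "0" * 64


def _digest(body: dict) -> str:
    """Stable SHA-256 over a JSON-serialized entry body."""
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest()


class AuditTrail:
    """Append-only log in which each entry commits to the previous one."""

    def __init__(self) -> None:
        self.entries: list[dict] = []

    def append(self, agent_id: str, action: str, outcome: str) -> dict:
        prev_hash = self.entries[-1]["hash"] if self.entries else GENESIS_HASH
        body = {
            "agent_id": agent_id,
            "action": action,
            "outcome": outcome,
            "prev_hash": prev_hash,
        }
        entry = {**body, "hash": _digest(body)}
        self.entries.append(entry)
        return entry

    def verify(self) -> bool:
        """Recompute every hash and check that the chain is unbroken."""
        prev_hash = GENESIS_HASH
        for entry in self.entries:
            body = {k: v for k, v in entry.items() if k != "hash"}
            if entry["prev_hash"] != prev_hash or entry["hash"] != _digest(body):
                return False
            prev_hash = entry["hash"]
        return True


trail = AuditTrail()
trail.append("fraud-agent", "account.freeze", "executed")
trail.append("fraud-agent", "case.open", "executed")
print(trail.verify())  # True

trail.entries[0]["outcome"] = "skipped"  # simulate tampering
print(trail.verify())  # False
```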

Layer 5 – Enterprise Governance Control Layer

Enterprise leadership defines policies governing autonomous systems.

Oversight typically comes from:

- Risk committees

- Security teams

- Compliance officers

This supervisory layer anchors the full AI governance architecture inside enterprise governance structures.


Enterprise AI Governance Operating Model

Enterprises control autonomous AI through a clear governance structure that assigns responsibility and oversight across leadership teams and establishes a governance model for AI agents operating across enterprise systems.

Autonomous agents affect core systems, customer data, and financial processes. A firm cannot manage that risk through technical controls alone. Leadership teams must define who approves AI systems, who monitors them in production, and who responds when problems occur.

A defined structure forms the backbone of an enterprise AI governance strategy.

This shift changes how governance operates across systems, moving from model oversight to real-time control of autonomous actions.

Key Differences in Agentic Governance

| Traditional AI Governance | Agentic AI Governance |
|---|---|
| Focus on model validation | Focus on real-time action governance |
| Human-in-the-loop decisions | Autonomous execution with controlled oversight |
| Pre-deployment checks | Continuous runtime monitoring |
| Static risk evaluation | Dynamic, system-level risk management |
| Limited system interaction | Deep integration across enterprise systems |
| Output accountability | End-to-end decision accountability |

These differences shape how enterprises structure governance roles, workflows, and accountability across autonomous systems.

Governance Leadership

Executive leadership sets direction and accountability for enterprise AI programs. These leaders approve high-impact deployments and define governance policies.

Typical leadership roles include:

- Chief AI Officer who directs enterprise AI programs

- Risk and compliance leaders who supervise regulatory exposure

- Enterprise architecture teams that review system design and infrastructure impact

This group sets policy and reviews deployments that affect critical operations.

Governance Committees

Large organizations often create oversight groups that review autonomous systems from multiple viewpoints.

Common governance bodies include:

- AI ethics boards that review responsible AI practices and data use

- AI risk committees that evaluate operational risk and system impact

These groups include representatives from technology, legal, risk, and business leadership.

Governance Workflows

Governance also depends on repeatable operational processes. These processes guide how systems move from development to production.

Core workflows include:

- Deployment approval before a system enters production

- Risk review during development and testing

- Compliance monitoring once systems run in production

These structured processes form the operational core of the enterprise AI governance operating model.

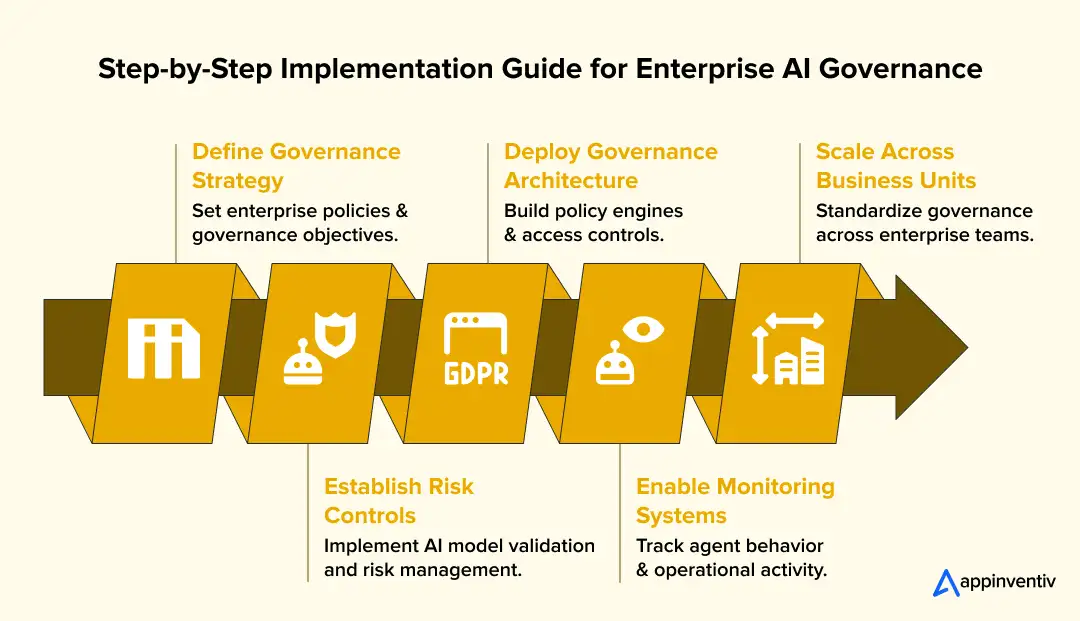

Step-by-Step Implementation Guide for Enterprise AI Governance

Enterprises build an agentic AI governance framework through a sequence of policies, engineering controls, and operational monitoring.

Many firms start with isolated pilots. Real governance begins when those systems enter production and interact with core infrastructure. Each step below turns governance from policy language into enforceable technical controls.

Step 1: Define Enterprise Governance Strategy

Leadership must set clear rules before autonomous systems operate inside enterprise environments. This step establishes priorities for building an AI governance framework that aligns with enterprise risk programs.

Typical actions include:

- Define which AI systems require executive approval

- Classify systems by operational and regulatory risk

- Assign ownership across product, security, and compliance teams

- Define approval gates for production deployment

Many enterprises document these policies inside internal AI governance charters reviewed by executive leadership and legal teams.
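The classification and approval-gate actions above could be expressed in code along these lines. The tier names, the two classification questions, and the sign-off roles are illustrative assumptions; each enterprise would define its own criteria in its governance charter.

```python
from enum import Enum


class RiskTier(Enum):
    LOW = 1
    MEDIUM = 2
    HIGH = 3


# Hypothetical sign-offs required before production deployment.
REQUIRED_APPROVALS = {
    RiskTier.LOW: {"system_owner"},
    RiskTier.MEDIUM: {"system_owner", "security"},
    RiskTier.HIGH: {"system_owner", "security", "compliance", "executive"},
}


def classify(touches_regulated_data: bool, executes_transactions: bool) -> RiskTier:
    """Assign a tier from two simplified risk questions."""
    if touches_regulated_data and executes_transactions:
        return RiskTier.HIGH
    if touches_regulated_data or executes_transactions:
        return RiskTier.MEDIUM
    return RiskTier.LOW


def may_deploy(tier: RiskTier, approvals: set[str]) -> bool:
    """The gate opens only when every required sign-off is present."""
    return REQUIRED_APPROVALS[tier] <= approvals


tier = classify(touches_regulated_data=True, executes_transactions=True)
print(tier.name)                                      # HIGH
print(may_deploy(tier, {"system_owner", "security"}))  # False
```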

Step 2: Implement AI Risk Management and Model Controls

Technical teams must evaluate models before deployment. These controls support structured AI model risk management.

Engineering teams perform checks such as:

- Model validation using holdout datasets and performance benchmarks

- Dataset lineage checks through tools like DataHub or Apache Atlas

- Automated testing for edge cases in agent workflows

- Version control for models through systems such as MLflow or SageMaker Model Registry

These controls reduce operational risk before an agent executes tasks in production, mirroring practices long established in financial software compliance programs.

Step 3: Deploy AI Governance Architecture

This step deploys the infrastructure controls that regulate agent access. Together, these controls form the core AI governance architecture. For organizations still running older systems, legacy modernization is often a necessary first step before the controls can be properly deployed.

Organizations deploy components such as:

- Policy engines, such as Open Policy Agent, that evaluate agent actions

- Identity systems that issue credentials through IAM platforms

- API gateways that regulate system interactions, building on the same API integration principles used across digital transformation programs

These controls restrict what an agent can do inside enterprise systems.

Step 4: Implement AI Observability and Monitoring Systems

Production systems require full operational visibility. Monitoring tools provide that visibility across agent workflows.

Monitoring platforms record:

- Decision logs from LLM orchestration layers

- API activity across service calls

- Abnormal behavior detected through anomaly detection pipelines

Engineering teams often connect these logs with systems such as Datadog, Grafana, or OpenTelemetry pipelines.
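Before logs reach any of those platforms, they need a consistent, machine-readable shape. The sketch below emits one JSON object per agent event using only Python's standard `logging` module. The logger name `agent.decisions`, the `agent_fields` key, and the field names inside it are assumptions made for illustration.

```python
import io
import json
import logging
from datetime import datetime, timezone


class JsonFormatter(logging.Formatter):
    """Render each log record as a single JSON line."""

    def format(self, record: logging.LogRecord) -> str:
        payload = {
            "time": datetime.fromtimestamp(record.created, tz=timezone.utc).isoformat(),
            "level": record.levelname,
            "event": record.getMessage(),
            # Structured context passed through `extra` (hypothetical key).
            **getattr(record, "agent_fields", {}),
        }
        return json.dumps(payload, sort_keys=True)


def build_agent_logger(stream) -> logging.Logger:
    logger = logging.getLogger("agent.decisions")
    logger.setLevel(logging.INFO)
    logger.propagate = False
    logger.handlers.clear()
    handler = logging.StreamHandler(stream)
    handler.setFormatter(JsonFormatter())
    logger.addHandler(handler)
    return logger


stream = io.StringIO()  # stands in for a file or log shipper
log = build_agent_logger(stream)
log.info(
    "tool_call",
    extra={"agent_fields": {
        "agent_id": "procurement-agent",
        "run_id": "run-001",
        "tool": "erp.create_order",
    }},
)
print(stream.getvalue().strip())
```

Because every line is valid JSON with the same keys, downstream collectors can index by `agent_id` or `run_id` without custom parsing.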

Step 5: Scale Governance Across Business Units

Governance must expand once multiple teams deploy autonomous systems. Organizations standardize governance processes so that oversight scales consistently across the enterprise.

Common actions include:

- Centralized dashboards for monitoring agents across departments

- Shared governance policies across engineering teams

- Internal review boards for high-risk deployments

This structure allows large enterprises to supervise autonomous systems across the organization without losing operational control.

Challenges in AI Governance Framework Implementation

Enterprises struggle with several practical obstacles when implementing an agentic AI governance framework once autonomous AI systems move into daily operations.

Analysts estimate over 40% of agentic AI projects may be abandoned by 2027, which shows how difficult it is to scale autonomous systems without strong governance.

Many organizations already run AI across many teams and products. Governance becomes difficult once agents interact with core systems and sensitive data. These AI governance framework implementation challenges usually appear in four areas.

Fragmented Governance Across Departments

Different teams often build AI systems without shared standards. This fragmentation is especially visible in agentic AI SaaS environments, where data science teams, product teams, and platform engineers often deploy AI capabilities under entirely different standards.

Risk teams then lack a clear view of what exists. Many firms address this through a central AI governance office. This group publishes system approval rules, model documentation requirements, and shared review processes. Teams still build systems locally, but governance rules remain consistent.

Lack of Visibility Into AI Decisions

Autonomous systems can run hundreds of actions each minute. Without strong logging, teams cannot explain why a system behaved a certain way. Engineering teams solve this by recording every system action. This is where agentic data engineering practices matter most, as structured pipelines are needed to log prompts, model versions, API calls, and workflow steps reliably. These logs allow investigators to reconstruct decisions during audits or incidents.
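To show how such logs support an investigation, here is a small sketch that groups recorded events by run and orders them by step, yielding a timeline for each agent run. The event fields (`run_id`, `step`, `type`, `detail`, `model_version`) are hypothetical and would follow whatever schema the logging pipeline uses.

```python
from collections import defaultdict


def reconstruct_runs(events: list[dict]) -> dict[str, list[dict]]:
    """Group logged events by run_id and order each run by step number."""
    runs: dict[str, list[dict]] = defaultdict(list)
    for event in events:
        runs[event["run_id"]].append(event)
    return {
        run_id: sorted(steps, key=lambda e: e["step"])
        for run_id, steps in runs.items()
    }


# Events often arrive out of order and interleaved across runs.
events = [
    {"run_id": "run-7", "step": 2, "type": "api_call",
     "detail": "POST /refunds", "model_version": "v3"},
    {"run_id": "run-8", "step": 1, "type": "prompt",
     "detail": "Update shipping address", "model_version": "v3"},
    {"run_id": "run-7", "step": 1, "type": "prompt",
     "detail": "Customer requests refund", "model_version": "v3"},
]

for step in reconstruct_runs(events)["run-7"]:
    print(step["step"], step["type"], step["detail"])
```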

Scaling Governance Infrastructure

Manual review slows development once many agents run across departments. Enterprises solve this by introducing automated controls as part of broader enterprise workflow integration programs, where policy engines and continuous monitoring are deployed together.

Balancing Innovation and Governance

Engineering teams want rapid experimentation. Risk teams want strong control. Many organizations manage this tension through risk tiers. Internal tools with limited impact move through a lighter review. Systems that affect finance, healthcare data, or customer accounts must also satisfy cloud compliance requirements and receive deeper governance checks before deployment.


Best Practices for Responsible AI Governance in Enterprises

Enterprises maintain safe autonomous AI systems through disciplined engineering practices and clear operational controls.

Governance must start during system design. Teams cannot add governance controls after agents enter production environments. Strong programs embed governance rules into development workflows and infrastructure from the beginning.

Governance by Design

Engineering teams should integrate governance controls during system development. Architects define access permissions, system boundaries, and logging requirements before deployment.

Key practices include:

- Restricting system access through identity and permission controls

- Defining operational limits for agents, such as transaction thresholds

- Documenting system purpose, ownership, and data access

These steps create a foundation for responsible AI development.

Runtime Monitoring

Autonomous systems require continuous monitoring once deployed. Monitoring systems record how agents behave inside production environments.

Operational monitoring typically tracks:

- System actions across APIs and services

- Abnormal behavior across workflows

- Failures or unexpected outputs

Many engineering teams integrate these signals with existing monitoring tools used for production infrastructure.

A good example is the MyExec AI Business Consultant platform developed by Appinventiv. The system reviews enterprise documents and turns them into clear business insights through an AI interface. To keep decisions transparent, the platform logs each action, tracks recommendations, and restricts data access through identity permissions. This allows teams to understand how the system reaches conclusions.

Policy-Driven AI Development

Clear policies guide how teams build and deploy AI systems. These policies define what is acceptable and what requires additional oversight.

Common governance policies include:

- Approval workflows for high-impact systems

- Documentation standards for AI models and agents

- Regular review of production AI systems

Policy-driven development helps enterprises maintain consistent governance across engineering teams and business units.

Key Considerations for Scaling and Controlling Autonomous AI Governance

Enterprises must balance control with scalability when governing autonomous AI systems in production environments.

- Risk-tiered governance: Apply stricter controls to high-impact systems handling financial, healthcare, or customer data.

- Human override mechanisms: Ensure critical decisions can be paused, reviewed, or reversed when needed.

- Policy enforcement at runtime: Validate every agent action against predefined rules before execution.

- Cross-functional alignment: Align engineering, risk, legal, and compliance teams under shared governance standards.

- Scalable infrastructure: Design governance systems that support increasing agent volume without slowing innovation.

These considerations help enterprises maintain control while allowing autonomous AI systems to scale safely across operations.
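The human override item in the list above can be as simple as a shared switch that every agent step consults before acting. This is a minimal sketch under that assumption; the `OverrideSwitch` class and the `run_step` helper are hypothetical names, and a real deployment would also need persistence and access control around who may pause an agent.

```python
import threading
from typing import Callable


class OverrideSwitch:
    """Lets a human operator pause and resume an agent."""

    def __init__(self) -> None:
        self._paused = threading.Event()  # safe to share across threads

    def pause(self) -> None:
        self._paused.set()

    def resume(self) -> None:
        self._paused.clear()

    @property
    def paused(self) -> bool:
        return self._paused.is_set()


def run_step(switch: OverrideSwitch, step: Callable[[], str]) -> str:
    """Execute `step` only if no operator has paused the agent."""
    if switch.paused:
        return "held for review"
    return step()


switch = OverrideSwitch()
print(run_step(switch, lambda: "invoice approved"))  # invoice approved

switch.pause()
print(run_step(switch, lambda: "invoice approved"))  # held for review
```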

How Appinventiv Helps Enterprises Implement AI Governance Frameworks

Appinventiv works with enterprises that need to place autonomous AI systems into real production environments without losing control of risk, data access, or system behavior. Through AI governance consulting services, our teams help organizations design governance policies, define operational controls, and integrate monitoring infrastructure that supports an agentic AI governance framework for supervising how autonomous agents interact with enterprise systems.

Our teams include more than 200 data scientists and AI engineers who design, train, and operate AI systems across large-scale infrastructure. Over the past few years, we have deployed 100-plus autonomous AI agents and trained 150 custom AI models across 35 industries, including finance, healthcare, retail, logistics, and mobility.

Clients rely on us to translate governance policies into working systems. Engineering teams build control layers around agents so each action remains visible and traceable. Identity permissions restrict system access. Monitoring pipelines capture prompts, model outputs, and API calls. These records allow enterprises to review how agents behave during real operations.

Independent organizations have recognized this growth. Appinventiv appeared in the Deloitte Fast 50 India list for two consecutive years and ranked among APAC’s High Growth Companies by Statista and the Financial Times.

The results show up in operations. Many clients report a 50 percent reduction in manual work, agent task accuracy above 90 percent, and a twofold improvement in operational scale once autonomous systems move into production with proper governance controls.

FAQs

Q. What is an agentic AI governance framework?

A. An agentic AI governance framework defines how enterprises control autonomous AI agents that act inside real business systems. It sets rules for system access, decision accountability, and operational oversight. The framework combines technical controls, monitoring tools, and policy oversight so organizations can supervise how agents interact with data, infrastructure, and workflows.

Q. How do enterprises govern autonomous AI systems?

A. Enterprises govern autonomous AI systems through layered controls across policy, engineering, and monitoring infrastructure. Governance programs define who can deploy AI systems, what actions agents can perform, and how systems behave in production. Teams use identity controls, approval workflows, runtime monitoring, and audit logs to supervise autonomous agents.

Q. What risks do agentic AI systems pose?

A. Agentic AI systems can introduce operational, security, and compliance risks if organizations lack strong governance. Autonomous agents may access sensitive systems, trigger transactions, or modify records without human review. Poor visibility into these actions makes incidents difficult to investigate. Governance programs reduce this exposure through monitoring, access control, and decision tracking.

Q. How can organizations ensure responsible AI governance?

A. Organizations support responsible AI governance by embedding oversight into system design and operations. Teams define access permissions, maintain detailed decision logs, and monitor agent behavior during production use. Many firms also create governance committees that review high-impact systems before deployment and supervise ongoing system performance.

Q. What frameworks exist for AI governance in enterprises?

A. Many enterprises rely on structured governance standards when building AI oversight programs. Common references include the NIST AI Risk Management Framework, ISO AI governance standards such as ISO/IEC 42001, and internal enterprise governance policies. These frameworks guide risk evaluation, system documentation, monitoring practices, and accountability structures for AI deployments.

Q. What are the key types and components of agentic AI systems?

A. Agentic AI systems consist of AI agents that interpret goals, reason through tasks, and execute actions across enterprise systems. These agents support autonomous decision-making through capabilities such as goal interpretation, reasoning engines, memory, and orchestration that coordinate multi-step workflows across applications and services. Integration layers, like an agent connector, allow agents to access APIs and enterprise tools.

Governance controls such as agent IAM, agent identity and scope definition, and agent permissions regulate system access and restrict what actions each agent can perform. Governance models like the KPMG TACO framework help enterprises supervise trust, accountability, control, and oversight for autonomous AI systems.

Q. How does Appinventiv help implement AI governance frameworks?

A. Appinventiv helps enterprises design and deploy governance controls for production AI systems. Engineering teams build monitoring pipelines, identity permissions, and policy enforcement layers that regulate agent behavior. The company also supports model deployment, infrastructure integration, and operational monitoring so organizations maintain visibility and control across autonomous systems.