World Models (WMs) are a central framework for developing agents that reason and plan in a compact latent space. However, training these models directly from pixel data often leads to ‘representation collapse,’ where the model produces redundant embeddings that trivially satisfy the prediction objective. Current approaches try to prevent this with complex heuristics such as stop-gradient updates, exponential moving averages (EMA), and frozen pre-trained encoders. A team of researchers including Yann LeCun and many others (Mila & Université de Montréal, New York University, Samsung SAIL and Brown University) introduced LeWorldModel (LeWM), the first JEPA (Joint-Embedding Predictive Architecture) that trains stably end-to-end from raw pixels using only two loss terms: a next-embedding prediction loss and a regularizer enforcing Gaussian-distributed latent embeddings.

Technical Architecture and Objective

LeWM consists of two primary components learned jointly: an Encoder and a Predictor.

- Encoder ($z_t = \mathrm{enc}_\theta(o_t)$): Maps a raw pixel observation into a compact, low-dimensional latent representation. The implementation uses a ViT-Tiny architecture (~5M parameters).

- Predictor ($\hat{z}_{t+1} = \mathrm{pred}_\theta(z_t, a_t)$): A transformer (~10M parameters) that models environment dynamics by predicting future latent states conditioned on actions.

The model is optimized using a streamlined objective function consisting of only two loss terms:

$$\mathcal{L}_{LeWM} \triangleq \mathcal{L}_{pred} + \lambda SIGReg(Z)$$

The prediction loss ($\mathcal{L}_{pred}$) computes the mean-squared error (MSE) between the predicted and actual consecutive embeddings. SIGReg (Sketched-Isotropic-Gaussian Regularizer) is the anti-collapse term that enforces feature diversity.
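To make the prediction term concrete, here is a minimal NumPy sketch of how such a loss could be computed over one trajectory. It is not the paper's implementation: the linear maps `W_enc` and `W_pred` are toy stand-ins (assumptions) for the ViT encoder and transformer predictor, and all dimensions are hypothetical.

```python
import numpy as np

rng = np.random.default_rng(0)

# Hypothetical sizes: trajectory length, flattened observation, action, latent.
T, obs_dim, act_dim, latent_dim = 8, 64, 2, 16

# Toy stand-ins (assumptions): linear maps instead of the ViT encoder
# and the transformer predictor.
W_enc = rng.standard_normal((obs_dim, latent_dim)) / np.sqrt(obs_dim)
W_pred = rng.standard_normal((latent_dim + act_dim, latent_dim)) / np.sqrt(latent_dim + act_dim)

def encode(obs):
    """Map observations (T, obs_dim) to latents (T, latent_dim)."""
    return obs @ W_enc

def predict(z, a):
    """Predict the next latent from the current latent and action."""
    return np.concatenate([z, a], axis=-1) @ W_pred

def prediction_loss(obs, actions):
    """MSE between predicted and actual consecutive embeddings."""
    z = encode(obs)                              # z_0 .. z_{T-1}
    z_hat_next = predict(z[:-1], actions[:-1])   # predictions for z_1 .. z_{T-1}
    return float(np.mean((z_hat_next - z[1:]) ** 2))

obs = rng.standard_normal((T, obs_dim))
actions = rng.standard_normal((T, act_dim))
l_pred = prediction_loss(obs, actions)
```

Note that the targets `z[1:]` come from the same encoder as the inputs. In a real training loop both sides would receive gradients, and the full objective would add the SIGReg term scaled by λ.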

According to the paper, two implementation details are critical for stability and downstream performance: a dropout rate of 0.1 in the predictor, and a projection step (a 1-layer MLP with Batch Normalization) applied after the encoder.
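The two stabilizing details mentioned here can be sketched as follows. This is an illustration only: the ordering (linear layer, then batch normalization), the omission of learned scale and shift parameters, and the layer sizes are all assumptions rather than details taken from the paper.

```python
import numpy as np

def batch_norm(x, eps=1e-5):
    """Training-mode batch norm: standardize each feature over the batch.
    Learned scale/shift parameters are omitted for brevity (assumption)."""
    mean = x.mean(axis=0, keepdims=True)
    var = x.var(axis=0, keepdims=True)
    return (x - mean) / np.sqrt(var + eps)

def project(z, W, b):
    """1-layer projection followed by batch normalization."""
    return batch_norm(z @ W + b)

def dropout(x, rate, rng):
    """Inverted dropout: zero entries with probability `rate`, rescale the rest."""
    keep = rng.random(x.shape) >= rate
    return x * keep / (1.0 - rate)

rng = np.random.default_rng(0)
z = rng.standard_normal((256, 16))      # hypothetical batch of encoder outputs
W = rng.standard_normal((16, 8))        # hypothetical projection weights
b = np.zeros(8)

projected = project(z, W, b)
dropped = dropout(np.ones((100, 100)), rate=0.1, rng=rng)
zero_fraction = float(np.mean(dropped == 0))
```

After the projection, every feature has roughly zero mean and unit variance across the batch, which gives the Gaussian regularizer a well-scaled input.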

Efficiency via SIGReg and Sparse Tokenization

Assessing normality in high-dimensional latent spaces is a major scaling challenge. LeWM addresses this using SIGReg, which leverages the Cramér-Wold theorem: a multivariate distribution matches a target (isotropic Gaussian) if all its one-dimensional projections match that target.
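Stated formally (the notation here is ours, not the article's), the Cramér-Wold theorem says that two random vectors $X, Y \in \mathbb{R}^d$ have the same distribution exactly when all of their one-dimensional projections do:

$$X \overset{d}{=} Y \iff u^\top X \overset{d}{=} u^\top Y \quad \text{for all } u \in \mathbb{S}^{d-1}$$

For the isotropic Gaussian target this specializes to:

$$Z \sim \mathcal{N}(0, I_d) \iff u^\top Z \sim \mathcal{N}(0, 1) \quad \text{for all } u \in \mathbb{S}^{d-1}$$

This reduces a $d$-dimensional normality check to a family of univariate checks. In practice only finitely many directions can be tested, so a sampled version is an approximation of the full condition.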

SIGReg projects latent embeddings onto M random directions and applies the Epps-Pulley test statistic to each resulting one-dimensional projection. Because the regularization weight λ is the only effective hyperparameter to tune, it can be optimized using a bisection search with $O(\log n)$ complexity, a significant improvement over the polynomial-time search ($O(n^6)$) required by previous models like PLDM.
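The projection-and-test procedure can be sketched in NumPy as below. This is a rough illustration under stated assumptions, not the authors' code: the statistic is implemented as a weighted squared distance between the empirical characteristic function and that of a standard normal, integrated numerically, and the Gaussian weight, the integration grid, and the function names are our own choices.

```python
import numpy as np

def epps_pulley_statistic(x, t_grid):
    """Weighted squared distance between the empirical characteristic
    function of 1-D samples `x` and the N(0, 1) characteristic function.
    The Gaussian weight and trapezoid quadrature are assumptions."""
    tx = np.outer(t_grid, x)                     # (num_t, N)
    ecf_real = np.cos(tx).mean(axis=1)
    ecf_imag = np.sin(tx).mean(axis=1)
    target_cf = np.exp(-0.5 * t_grid ** 2)       # CF of N(0, 1), purely real
    weight = np.exp(-0.5 * t_grid ** 2)
    integrand = ((ecf_real - target_cf) ** 2 + ecf_imag ** 2) * weight
    # Trapezoid rule over the grid.
    return float(np.sum(0.5 * (integrand[1:] + integrand[:-1]) * np.diff(t_grid)))

def sigreg(Z, num_directions, rng, t_grid=None):
    """Average the 1-D statistic over random unit directions."""
    if t_grid is None:
        t_grid = np.linspace(-5.0, 5.0, 101)     # hypothetical grid
    directions = rng.standard_normal((Z.shape[1], num_directions))
    directions /= np.linalg.norm(directions, axis=0, keepdims=True)
    projections = Z @ directions                 # (N, M)
    stats = [epps_pulley_statistic(projections[:, m], t_grid)
             for m in range(num_directions)]
    return float(np.mean(stats))

rng = np.random.default_rng(0)
gaussian_Z = rng.standard_normal((2000, 32))     # well-spread embeddings
collapsed_Z = np.zeros((2000, 32))               # fully collapsed embeddings

score_gaussian = sigreg(gaussian_Z, num_directions=16, rng=rng)
score_collapsed = sigreg(collapsed_Z, num_directions=16, rng=rng)
```

In this toy comparison the collapsed embeddings receive a much larger penalty than the Gaussian ones, which is the behavior an anti-collapse term needs. A training implementation would compute the same quantity in an autodiff framework so that it can be minimized alongside the prediction loss.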

Speed Benchmarks

In the reported setup, LeWM demonstrates high computational efficiency:

- Token Efficiency: LeWM encodes observations using ~200× fewer tokens than DINO-WM.

- Planning Speed: LeWM achieves planning up to 48× faster than DINO-WM (0.98s vs 47s per planning cycle).

Latent Space Properties and Physical Understanding

LeWM’s latent space supports probing of physical quantities and detection of physically implausible events.

Violation-of-Expectation (VoE)

Using a VoE framework, the model was evaluated on its ability to detect ‘surprise’. It assigned higher surprise to physical perturbations such as teleportation; visual perturbations produced weaker effects, and cube color changes in OGBench-Cube were not significant.
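One simple way to turn a latent world model into a surprise signal is to measure the error between the predicted and the observed next embedding. The sketch below assumes that definition (the article does not spell out the exact metric) and uses a hypothetical 2-D latent with a constant-velocity `toy_predictor` in place of the learned model.

```python
import numpy as np

def surprise(z_pred_next, z_next):
    """Squared latent prediction error per time step (assumed surprise metric)."""
    return np.sum((z_pred_next - z_next) ** 2, axis=-1)

# Toy latent trajectory: a point moving at constant velocity.
velocity = np.array([0.1, 0.0])
z = np.arange(10)[:, None] * velocity            # (10, 2)

# Physically implausible variant: the object "teleports" at step 6.
z_perturbed = z.copy()
z_perturbed[6:] += np.array([0.0, 2.0])

def toy_predictor(z_t):
    """Hypothetical stand-in for the learned predictor."""
    return z_t + velocity

normal_surprise = surprise(toy_predictor(z[:-1]), z[1:])
perturbed_surprise = surprise(toy_predictor(z_perturbed[:-1]), z_perturbed[1:])
spike_index = int(perturbed_surprise.argmax())   # transition from step 5 to step 6
```

The unperturbed trajectory yields near-zero surprise throughout, while the perturbed one spikes at the transition where the jump occurs.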

Emergent Path Straightening

LeWM exhibits Temporal Latent Path Straightening, where latent trajectories naturally become smoother and more linear over the course of training. Notably, LeWM achieves higher temporal straightness than PLDM despite having no explicit regularizer encouraging this behavior.
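Temporal straightness can be quantified in several ways. A common choice, assumed here rather than taken from the paper, is the mean cosine similarity between consecutive latent velocity vectors: a value of 1 means the trajectory is a straight line.

```python
import numpy as np

def temporal_straightness(z, eps=1e-12):
    """Mean cosine similarity between consecutive velocity vectors
    of a latent trajectory z with shape (T, D)."""
    v = np.diff(z, axis=0)                                   # (T-1, D) velocities
    v = v / (np.linalg.norm(v, axis=1, keepdims=True) + eps) # unit directions
    return float(np.mean(np.sum(v[:-1] * v[1:], axis=1)))

rng = np.random.default_rng(0)

# A perfectly linear latent path versus a random walk.
straight_path = np.linspace(0.0, 1.0, 20)[:, None] * np.ones((1, 8))
jagged_path = np.cumsum(rng.standard_normal((20, 8)), axis=0)

straight_score = temporal_straightness(straight_path)
jagged_score = temporal_straightness(jagged_path)
```

Tracking a score like this over training checkpoints is one way to observe the straightening effect described above.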

| Feature | LeWorldModel (LeWM) | PLDM | DINO-WM | Dreamer / TD-MPC |
| --- | --- | --- | --- | --- |
| Training Paradigm | Stable End-to-End | End-to-End | Frozen Foundation Encoder | Task-Specific |
| Input Type | Raw Pixels | Raw Pixels | Pixels (DINOv2 features) | Rewards / Privileged State |
| Loss Terms | 2 (Prediction + SIGReg) | 7 (VICReg-based) | 1 (MSE on latents) | Multiple (Task-specific) |
| Tunable Hyperparams | 1 (Effective weight λ) | 6 | N/A (Fixed by pre-training) | Many (Task-dependent) |
| Planning Speed | Up to 48× Faster | Fast (Compact latents) | Slow (~50× slower than LeWM) | Varies (often slow generation) |
| Anti-Collapse | Provable (Gaussian prior) | Under-specified / Unstable | Bounded by pre-training | Heuristic (e.g., reconstruction) |
| Requirement | Task-Agnostic / Reward-Free | Task-Agnostic / Reward-Free | Frozen Pre-trained Encoder | Task Signals / Rewards |

Key Takeaways

- Stable End-to-End Learning: LeWM is the first Joint-Embedding Predictive Architecture (JEPA) that trains stably end-to-end from raw pixels without needing ‘hand-holding’ heuristics like stop-gradients, exponential moving averages (EMA), or frozen pre-trained encoders.

- A Radical Two-Term Objective: The training process is simplified into just two loss terms—a next-embedding prediction loss and the SIGReg regularizer—reducing the number of tunable hyperparameters from six to one compared to existing end-to-end alternatives.

- Built for Real-Time Speed: By representing observations with approximately 200× fewer tokens than foundation-model-based counterparts, LeWM plans up to 48× faster, completing full trajectory optimizations in under one second.

- Provable Anti-Collapse: To prevent the model from learning ‘garbage’ redundant representations, it uses the SIGReg regularizer; this utilizes the Cramér-Wold theorem to ensure high-dimensional latent embeddings stay diverse and Gaussian-distributed.

- Intrinsic Physical Logic: The model doesn’t just predict data; it captures meaningful physical structure in its latent space, so that physical quantities can be probed from the embeddings and ‘impossible’ events like object teleportation can be detected through a violation-of-expectation framework.

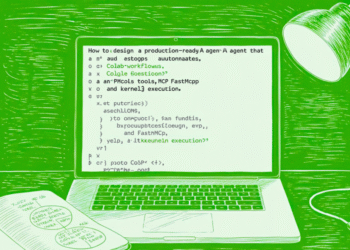
