Your email campaigns are barely getting opened, and clicks? Even worse. The problem might not be your offer or your audience, but rather the combination of elements you’re using in your emails.

Multivariate testing gives you the opportunity to test different combinations of elements in your emails to find what works best for your audience. In other words, instead of testing one element at a time, you can experiment with multiple variables simultaneously, like subject lines, sender names, and CTA buttons together, to discover the winning formula.

In this article, we’ll walk you through exactly how to use multivariate testing in email marketing to boost your open rates and clicks. Let’s get started!

What is multivariate email testing and why it matters

Understanding multivariate testing in email marketing

Multivariate testing in email marketing lets you test different combinations of elements in your emails to find what works best for your audience. Instead of testing individual email elements, multivariate testing helps you understand which details work well together by testing multiple elements simultaneously.

The process works by combining variations of each element to create multiple unique versions of your email. For instance, if you test 2 variables with 3 variations each, you’ll have a total of 9 different combinations to test. These variables might include subject lines, sender names, images, body text, CTA buttons, colors, layout, or any other elements that can be changed.
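To make the arithmetic concrete, here is a minimal Python sketch that enumerates every combination for two variables with three variations each. The variable names and placeholder values are hypothetical, chosen only for illustration. Your email platform will normally build these versions for you.

```python
from itertools import product

# Hypothetical variables and variations (placeholder values, not recommendations).
variables = {
    "subject_line": ["Subject A", "Subject B", "Subject C"],
    "cta_text": ["CTA A", "CTA B", "CTA C"],
}


def build_combinations(variables):
    """Return one dict per unique combination of variations."""
    names = list(variables)
    return [dict(zip(names, values)) for values in product(*variables.values())]


combinations = build_combinations(variables)
print(len(combinations))  # 3 x 3 = 9
```

Adding a third variable with two variations would double the count to 18, which is why the number of email versions grows quickly as you add variables.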

Each combination gets sent to segments of your subscriber list, and you track performance indicators like open rates and click-through rates. Advanced analytics tools determine which combination of variables led to the best performance according to your specific goal.

How multivariate testing differs from A/B testing

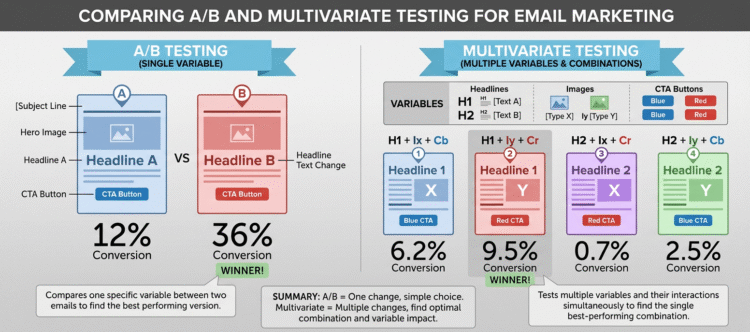

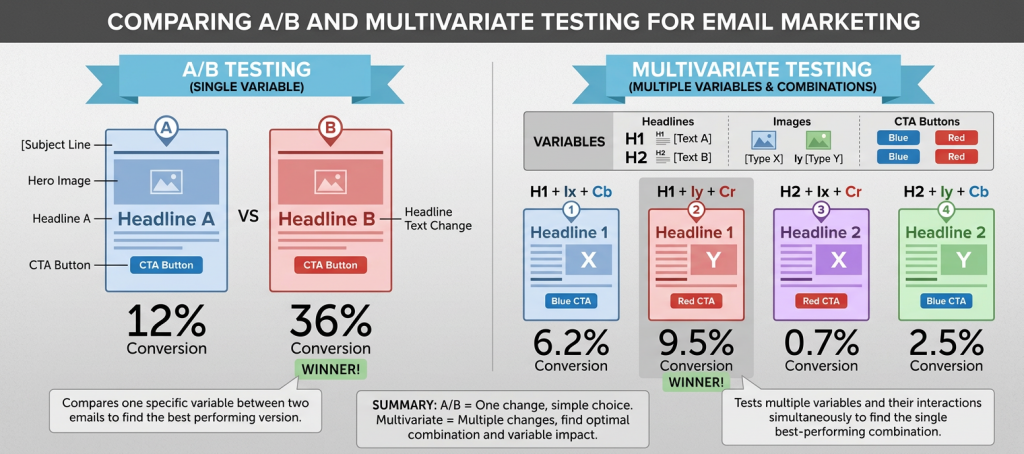

A/B testing compares two versions of a single variable against each other to determine which one performs better. For example, you might test two different subject lines while keeping everything else identical.

In contrast, multivariate testing evaluates multiple variables simultaneously to identify the best-performing combination. The audience splits differently as well. In A/B testing, your recipients are divided in half, with 50% receiving each variation. In a multivariate test, they split into quarters, sixths, eighths, or even smaller segments, one for each combination.

The main advantage of running a multivariate test rather than an A/B test is the ability to determine how various elements interact with one another. You can figure out not only that visual A performs better than visual B, and that button C performs better than button D, but you can also discover the best combination of these overall.
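One way to see what "interaction" means is to compare how much a button helps under each visual. The sketch below uses made-up click rates for a 2x2 test (visuals A and B, buttons C and D). The numbers and the function name are assumptions for illustration only.

```python
def interaction_effect(rates):
    """Measure how much the lift from button C over button D depends on the visual.

    `rates` maps (visual, button) -> click rate for a 2x2 test.
    A value near zero means the two elements act independently.
    """
    lift_with_a = rates[("A", "C")] - rates[("A", "D")]
    lift_with_b = rates[("B", "C")] - rates[("B", "D")]
    return lift_with_a - lift_with_b


# Made-up click rates, for illustration only.
rates = {
    ("A", "C"): 0.050,
    ("A", "D"): 0.030,
    ("B", "C"): 0.032,
    ("B", "D"): 0.030,
}

best_combination = max(rates, key=rates.get)
print(best_combination)                     # ('A', 'C')
print(round(interaction_effect(rates), 3))  # 0.018
```

In this invented data, button C barely helps with visual B but helps a lot with visual A. Two separate A/B tests could miss that, because each would only report an average effect for one element.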

When to use multivariate testing vs A/B testing

A/B testing works best when you have limited traffic, need results quickly, or are making major design changes. Since A/B tests have fewer variations, you need far less traffic to reach statistical significance.

Multivariate testing requires significant traffic because you need enough data across all combinations to get reliable results. Use it when you have high-volume emails or pages, you’re refining an already solid experience, and you want to understand which elements and combinations have the biggest impact on conversion.

Common reasons your email open rates and click rates are low

Before you can fix your email performance with multivariate email testing, you need to understand what’s causing the problem. These five issues account for most low open rates and click-through rates.

Weak subject lines that don’t grab attention

Subject lines determine whether recipients open your emails. In fact, 43% of people open an email based solely on the subject line. On the flip side, 69% of recipients mark emails as spam based on the subject line alone. Generic phrases like “Newsletter” decrease open rates by 18.7%, while personalized subject lines increase open rates by at least 50%. Aim for concise subject lines (around 40–50 characters) and track your own campaigns to identify the length that drives the highest click-through rates for your audience.

Generic sender names that lack trust

The sender name serves as your first impression before the subject line even matters. Research shows 45% of email subscribers say the sender name is the primary reason they open an email. Generic sender names like “Info” or “No Reply” reduce engagement and make emails feel impersonal. Recipients need to recognize and trust who sent the message, or they’ll ignore it or mark it as spam.

Poor CTA placement and design

CTAs guide subscribers toward your desired action, but weak language like “Click here” or “Learn more” fails to communicate value. Keep CTAs short, ideally 2-5 words. Emails with a single CTA generate 371% more clicks than those with multiple CTAs. Switching from hyperlinked text to a button increased click-through rates by 127% in Campaign Monitor’s testing.

Misaligned email content with audience preferences

Sending the right message to the wrong audience kills engagement. Data shows 71% of people ignore emails that don’t address a relevant problem. When email content doesn’t match subscriber interests or expectations, unsubscribe rates climb and engagement drops.

Wrong send times for your audience

Timing affects whether your emails get seen or buried. Sending emails based only on your local time ignores where subscribers actually live. Almost 22% of email campaigns are opened in the first hour after sending, and open likelihood decreases each hour after.
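If your platform doesn't schedule by recipient time zone for you, the conversion itself is simple. The sketch below assumes you store a UTC offset (in hours) for each subscriber, which is a simplification: fixed offsets ignore daylight saving time, so a production setup would more likely store time zone names. The function name is hypothetical.

```python
from datetime import datetime, timedelta, timezone


def utc_send_time(local_send, utc_offset_hours):
    """Convert a desired local send time into the UTC time to schedule.

    `local_send` is a naive datetime (the wall-clock time the subscriber should see).
    `utc_offset_hours` is the subscriber's offset from UTC, e.g. -5 or 5.5.
    """
    local_tz = timezone(timedelta(hours=utc_offset_hours))
    return local_send.replace(tzinfo=local_tz).astimezone(timezone.utc)


# A 9:00 local send for a subscriber at UTC-5 must go out at 14:00 UTC.
scheduled = utc_send_time(datetime(2025, 1, 15, 9, 0), -5)
print(scheduled.strftime("%H:%M"))  # 14:00
```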

How to run multivariate email tests to boost performance

Running multivariate email tests requires a structured approach. Here’s how to execute tests that deliver actionable insights.

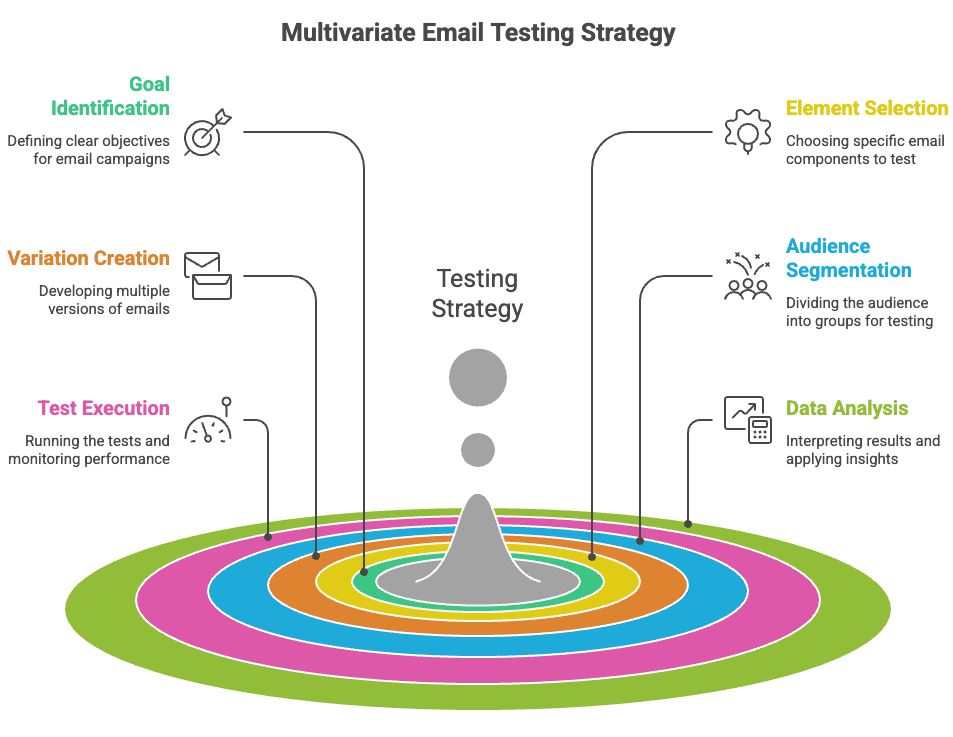

Step 1: Identify your testing goal and metrics

Define what you want to achieve before testing. Are you optimizing for higher open rates? Test subject lines and sender names. Want more clicks? Focus on CTA buttons, email layout, and copy variations. Better conversion rates require testing offers, personalization, and imagery. Select one metric as your winning criteria: open rate, click rate, or conversion rate.

Step 2: Choose which email elements to test

Multivariate testing lets you change several elements of a campaign at once. Test subject lines to see if personalization beats curiosity-driven lines. Experiment with sender names to determine whether emails should come from a person or a brand. Evaluate CTA placement and design, comparing buttons versus text links. Test email copy length and decide between image-heavy versus minimal image designs.

Step 3: Create your test variations

Create up to 3 variations per variable. The number of combinations is the product of the variation counts: testing 2 variables with 3 variations each generates 9 combinations, while testing 2 CTA texts and 2 positions creates 4 email versions. Keep the content identical except for the variables being tested.

Step 4: Segment your audience for testing

Send test combinations to 10-20% of your email list, and hold back the rest to receive the winning version. Aim for at least 5,000 subscribers per combination, since smaller groups rarely produce statistically significant differences. Divide your audience into measurable segments to track how different groups respond.
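As a rough sketch of this step, the Python below randomly assigns a share of the list to equally sized test groups and works out how large a list you would need to hit the 5,000-per-combination guideline. The function names, the 20% default, and the fixed random seed are assumptions for illustration. Most email platforms handle the split for you.

```python
import random


def assign_test_groups(subscribers, n_combinations, test_percent=20, seed=42):
    """Randomly assign a share of the list to equally sized test groups.

    Returns (groups, holdout): one list per combination, plus everyone
    held back to receive the winning version later.
    """
    shuffled = list(subscribers)
    random.Random(seed).shuffle(shuffled)  # random order avoids biased groups
    per_group = len(shuffled) * test_percent // 100 // n_combinations
    groups = [
        shuffled[i * per_group:(i + 1) * per_group] for i in range(n_combinations)
    ]
    holdout = shuffled[per_group * n_combinations:]
    return groups, holdout


def min_list_size(n_combinations, per_combination=5000, test_percent=20):
    """Smallest list that gives each combination `per_combination` recipients."""
    needed = n_combinations * per_combination * 100
    return -(-needed // test_percent)  # integer ceiling division


print(min_list_size(4))  # 100000
print(min_list_size(9))  # 225000
```

The second function shows why multivariate tests suit high-volume senders: at a 20% test share, even a 4-combination test needs a list of 100,000 to meet the guideline.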

Step 5: Run your test and monitor the results

Early results typically arrive within 4-24 hours, depending on audience size, but wait at least one full day for data to accumulate before drawing conclusions. Monitor open rates, click-through rates, and conversion rates throughout the test period.

Step 6: Analyze data and apply winning combinations

Compare performance across all combinations after the test completes. In many email platforms, once a clear winner emerges, you can automatically or manually send the best-performing version to the remaining recipients. Document findings and apply insights to future campaigns.
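If you want to sanity-check a "clear winner" yourself, one common approach is a two-proportion z-test between the best combination and the runner-up. The sketch below is one possible way to do this, not how any particular platform decides a winner. The sample numbers are made up, and comparing only the top two ignores the multiple-comparison problem that comes with many combinations, so treat the result as a rough guide.

```python
import math


def two_proportion_z_test(successes_a, n_a, successes_b, n_b):
    """Two-sided z-test for the difference between two rates. Returns (z, p_value)."""
    pooled = (successes_a + successes_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:
        return 0.0, 1.0  # no variation at all, so no detectable difference
    z = (successes_a / n_a - successes_b / n_b) / se
    p_value = math.erfc(abs(z) / math.sqrt(2))  # two-sided normal tail probability
    return z, p_value


def pick_winner(results, alpha=0.05):
    """`results` maps combination name -> (clicks, sent).

    Returns (winner, p_value, significant), comparing the top two combinations.
    """
    ranked = sorted(results, key=lambda k: results[k][0] / results[k][1], reverse=True)
    best, runner_up = ranked[0], ranked[1]
    _, p_value = two_proportion_z_test(*results[best], *results[runner_up])
    return best, p_value, p_value < alpha


# Made-up results: (clicks, emails sent) per combination.
results = {
    "A+C": (300, 5000),
    "A+D": (250, 5000),
    "B+C": (240, 5000),
    "B+D": (235, 5000),
}
winner, p_value, significant = pick_winner(results)
print(winner, significant)  # A+C True
```

If `significant` comes back `False`, the safer reading is that the test has not separated the top combinations yet, not that they perform the same.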

Choosing the right multivariate testing tool ensures accurate results, efficient experimentation, and stronger campaign performance. Focus on platforms that simplify testing while delivering reliable insights.

- Support for A/B and A/B/n experiments

Look for tools that support multiple variations at once and help you quickly identify the top performers using real-time data, with the option to route more traffic to winning versions.

- Real-time analytics

Access to live performance metrics allows you to monitor results, make faster decisions, and optimize campaigns efficiently.

- Audience segmentation

Strong segmentation features help you test variations on specific user groups, producing clearer and more actionable insights.

- Visual editor

A drag-and-drop editor simplifies test creation, reduces technical dependency, and speeds up execution.

- Preview and deliverability testing

Cross-device previews and built-in spam checks help prevent rendering issues and improve inbox placement before sending.

How to avoid common multivariate testing mistakes

Multivariate testing is powerful, but poor execution can lead to misleading results. Avoid these common pitfalls to maintain data accuracy and maximize impact.

- Testing too many variables

Overloading a test makes it difficult to identify which changes drove the results. Focus on a manageable number of meaningful elements.

- Stopping tests too early

Ending experiments prematurely leads to unreliable conclusions. Wait until statistical significance is reached.

- Changing conditions mid-test

Adjusting variables, traffic distribution, or targeting during an active test invalidates results. Keep conditions consistent.

- Lack of a clear hypothesis and documentation

Always define what you’re testing and why, and document results to build long-term optimization knowledge.

Boost your email performance with data-driven testing

You now have everything you need to turn low open rates and click-throughs around with multivariate email testing. Start by defining a clear goal for each campaign, experiment with the right combinations of subject lines, visuals, CTAs, and other key elements, and let the data guide your decisions. Every insight you gather brings you closer to emails that truly resonate with your audience.

Equally important is the platform you use. Tools like Insider One simplify experimentation with built-in A/B and A/B/n testing, making it easier to compare variations and act on winning versions with far less manual effort. This means less time guessing and more time seeing measurable results.

Don’t wait. Launch your first test this week, monitor the results, apply what works, and watch your email performance climb steadily. With the right strategy, the emails your subscribers receive will be the ones they can’t wait to open.

FAQs

Focus on crafting benefit-driven CTAs instead of generic phrases like “Click here.” Use specific language such as “Get your free checklist” or “See how it works.” Additionally, optimize your subject lines, personalize content, segment your audience strategically, and ensure your CTA buttons stand out visually with contrasting colors and clear placement.

A/B testing compares two versions of a single variable, splitting traffic in half between them. Multivariate testing evaluates multiple variables simultaneously to find the best-performing combination, splitting traffic into smaller segments. While A/B testing is ideal for testing one element at a time, multivariate testing reveals how different elements interact with each other to drive results.

Use multivariate testing when you have significant email traffic (at least 5,000 subscribers per combination), want to make incremental improvements to an already optimized campaign, or need to understand which combination of elements works best together. If you have limited traffic or need quick results, stick with A/B testing.

To improve open rates, test subject lines and sender names. For better click rates, focus on CTA button design and placement, email copy length, and the balance between images and text. You can also test personalization elements, email layouts, and send timing to find what resonates best with your audience.

Early results typically arrive within 4-24 hours, depending on your audience size, but wait at least 1 full day for sufficient data to accumulate before concluding. Stopping tests prematurely leads to decisions based on incomplete data, so let your test run its full course to ensure statistical significance.