AI, Analytics and Automation

Liquid AI’s LFM2-2.6B-Exp Uses Pure Reinforcement Learning (RL) And Dynamic Hybrid Reasoning To Tighten Small Model Behavior

Liquid AI has introduced LFM2-2.6B-Exp, an experimental checkpoint of its LFM2-2.6B language model that is trained with pure reinforcement learning...

Training a Model with Limited Memory using Mixed Precision and Gradient Checkpointing

Training a language model is memory-intensive, not only because the model itself is large but also because the long sequences...
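
To make the two techniques in this teaser concrete without a GPU, here is a minimal pure-Python sketch. It is not the article's code. The first part assumes fp16 gradients (emulated with the standard library's `struct` half-precision `'e'` format) and a hypothetical static loss scale of 1024, and shows why scaling the loss keeps a tiny gradient from underflowing to zero. The second part uses a toy chain of scalar `tanh` layers (the helpers `layer`, `grad_full`, and `grad_checkpointed` are illustrative names) to show that keeping only every `segment`-th layer input and recomputing the rest during the backward pass yields the same gradient while storing fewer activations.

```python
import math
import struct
from typing import List, Tuple


def to_fp16(x: float) -> float:
    """Round a Python float to IEEE 754 half precision and back."""
    return struct.unpack("<e", struct.pack("<e", x))[0]


# --- Mixed precision: loss scaling (assumed fp16 gradients, static scale) ---
tiny_grad = 1e-8                                 # hypothetical gradient, too small for fp16
scale = 1024.0                                   # hypothetical static loss scale
unscaled_fp16 = to_fp16(tiny_grad)               # rounds to 0.0 in fp16
recovered = to_fp16(tiny_grad * scale) / scale   # scale, store in fp16, unscale in full precision


# --- Gradient checkpointing on a toy chain of layers y = tanh(w * x) ---
def layer(w: float, x: float) -> float:
    return math.tanh(w * x)


def layer_grad(w: float, x: float) -> float:
    """Derivative of tanh(w * x) with respect to x."""
    t = math.tanh(w * x)
    return w * (1.0 - t * t)


def grad_full(weights: List[float], x0: float) -> Tuple[float, int]:
    """Plain backprop: every layer input is kept until the backward pass."""
    inputs = [x0]
    for w in weights[:-1]:
        inputs.append(layer(w, inputs[-1]))
    grad = 1.0
    for w, x in zip(reversed(weights), reversed(inputs)):
        grad *= layer_grad(w, x)   # chain rule, last layer first
    return grad, len(inputs)       # (d output / d x0, layer inputs stored)


def grad_checkpointed(weights: List[float], x0: float, segment: int) -> Tuple[float, int]:
    """Store only each segment's first input; recompute the rest during backward."""
    checkpoints = {}
    x = x0
    for i, w in enumerate(weights):
        if i % segment == 0:
            checkpoints[i] = x     # checkpoint: input to the first layer of a segment
        x = layer(w, x)
    grad = 1.0
    for start in sorted(checkpoints, reverse=True):
        end = min(start + segment, len(weights))
        seg_inputs = [checkpoints[start]]          # recompute this segment only
        for w in weights[start:end - 1]:
            seg_inputs.append(layer(w, seg_inputs[-1]))
        for w, xi in zip(reversed(weights[start:end]), reversed(seg_inputs)):
            grad *= layer_grad(w, xi)
    return grad, len(checkpoints)  # (d output / d x0, checkpoints kept for the whole pass)
```

With 12 layers and `segment=4`, both functions return the same gradient, but the checkpointed version keeps 3 stored inputs instead of 12 (plus at most one recomputed segment at a time), trading extra forward computation for memory. In PyTorch the corresponding tools are `torch.autocast` with a `GradScaler` and `torch.utils.checkpoint.checkpoint`; the sketch only mirrors the idea behind them.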

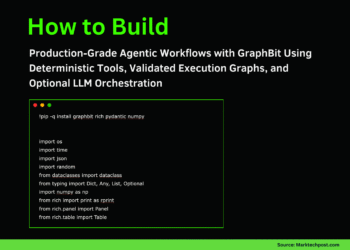

How to Build Production-Grade Agentic Workflows with GraphBit Using Deterministic Tools, Validated Execution Graphs, and Optional LLM Orchestration

In this tutorial, we build an end-to-end, production-style agentic workflow using GraphBit that demonstrates how graph-structured execution, tool calling, and...
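
GraphBit's own API does not appear in this excerpt, so the sketch below uses only the Python standard library to illustrate two ideas the title names: deterministic tools (plain functions whose output depends only on their inputs) and an execution graph that is validated before it runs. The node names, the three toy tools, and the `validate`/`run` helpers are hypothetical; `graphlib.TopologicalSorter` (Python 3.9+) supplies the ordering and cycle detection.

```python
from graphlib import CycleError, TopologicalSorter
from typing import Callable, Dict, List, Set


def load_numbers(results: dict) -> List[int]:
    """Hypothetical source tool; ignores upstream results."""
    return [3, 1, 4, 1, 5, 9]


def compute_stats(results: dict) -> dict:
    nums = results["load"]
    return {"count": len(nums), "total": sum(nums)}


def format_report(results: dict) -> str:
    stats = results["stats"]
    return f"{stats['count']} values, total {stats['total']}"


NODES: Dict[str, Callable[[dict], object]] = {
    "load": load_numbers,
    "stats": compute_stats,
    "report": format_report,
}
# Each node maps to the set of nodes that must finish before it runs.
DEPS: Dict[str, Set[str]] = {"load": set(), "stats": {"load"}, "report": {"stats"}}


def validate(nodes: Dict[str, Callable[[dict], object]], deps: Dict[str, Set[str]]) -> List[str]:
    """Check wiring and acyclicity before any tool runs; return an execution order."""
    if set(nodes) != set(deps):
        raise ValueError("every node needs exactly one dependency entry")
    for node, prereqs in deps.items():
        unknown = prereqs - set(nodes)
        if unknown:
            raise ValueError(f"{node} depends on unknown nodes {sorted(unknown)}")
    try:
        return list(TopologicalSorter(deps).static_order())
    except CycleError as exc:
        raise ValueError(f"cycle detected: {exc.args[1]}") from exc


def run(nodes: Dict[str, Callable[[dict], object]], deps: Dict[str, Set[str]]) -> dict:
    """Execute tools in validated order, passing accumulated results downstream."""
    results: dict = {}
    for name in validate(nodes, deps):
        results[name] = nodes[name](results)
    return results
```

Validating up front means a miswired or cyclic workflow fails before any tool executes. An optional LLM step could be added as one more node with the same signature, leaving the deterministic nodes unchanged.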

Train a Model Faster with torch.compile and Gradient Accumulation

Training a language model with a deep transformer architecture is time-consuming. However, there are techniques you can use to accelerate...
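
`torch.compile` needs PyTorch itself, but the arithmetic behind gradient accumulation can be checked in a few lines of plain Python. In this minimal sketch (a one-parameter linear model with mean squared error; all names are illustrative, not the article's), each micro-batch gradient is divided by the number of micro-batches and summed. When the micro-batches are equal in size, this reproduces the full-batch gradient, so taking one optimizer step per full batch behaves as if the whole batch had fit in memory at once.

```python
from typing import List, Tuple

Batch = List[Tuple[float, float]]


def grad_mse(w: float, batch: Batch) -> float:
    """Gradient of mean((w * x - y)^2) with respect to w."""
    return sum(2.0 * (w * x - y) * x for x, y in batch) / len(batch)


def accumulated_grad(w: float, batch: Batch, micro_batch_size: int) -> float:
    """Sum micro-batch gradients, each scaled by 1 / number of micro-batches."""
    if len(batch) % micro_batch_size:
        raise ValueError("use equal-sized micro-batches for an exact match")
    micro_batches = [batch[i:i + micro_batch_size]
                     for i in range(0, len(batch), micro_batch_size)]
    accum = 0.0  # plays the role of the .grad buffer that each backward() adds into
    for mb in micro_batches:
        accum += grad_mse(w, mb) / len(micro_batches)
    return accum


def train(batch: Batch, micro_batch_size: int, lr: float = 0.05, steps: int = 200) -> float:
    """Plain SGD with one parameter update per full (accumulated) batch."""
    w = 0.0
    for _ in range(steps):
        w -= lr * accumulated_grad(w, batch, micro_batch_size)
    return w
```

In a PyTorch loop the same effect usually comes from dividing each micro-batch loss by the number of accumulation steps, calling `backward()` on every micro-batch (gradients add into `.grad`), and calling `optimizer.step()` and `optimizer.zero_grad()` only once per accumulation cycle.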

DarLink Chatbot Access, Pricing, and Feature Overview

Engaging with the AI models in DarLink creates the impression of a flowing dialogue instead of a rigid input-output process,...

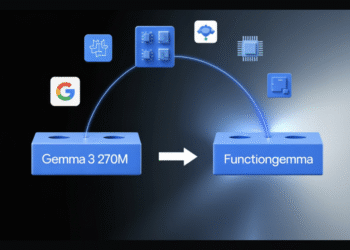

From Gemma 3 270M to FunctionGemma: How Google AI Built a Compact Function Calling Specialist for Edge Workloads

Google has released FunctionGemma, a specialized version of the Gemma 3 270M model that is trained specifically for function calling...
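
The excerpt does not show FunctionGemma's prompt or output format, so the sketch below simply assumes the model emits a JSON object with `name` and `arguments` fields. It illustrates the application side of function calling: parse the call, look the tool up in a registry, run it, and serialize the result so it can be passed back to the model. `get_weather`, its canned return value, and the `dispatch` helper are hypothetical.

```python
import json
from typing import Any, Callable, Dict


def get_weather(city: str, unit: str = "celsius") -> dict:
    """Hypothetical local tool; a real one would query a weather service."""
    return {"city": city, "temperature": 21, "unit": unit}


# Registry of callable tools, keyed by the name the model is told to use.
TOOLS: Dict[str, Callable[..., Any]] = {"get_weather": get_weather}


def dispatch(model_output: str) -> str:
    """Parse an assumed JSON tool call and run the matching local function."""
    call = json.loads(model_output)
    name, args = call["name"], call.get("arguments", {})
    if name not in TOOLS:
        return json.dumps({"error": f"unknown tool: {name}"})
    result = TOOLS[name](**args)
    # The serialized result would be appended to the conversation for the model.
    return json.dumps({"name": name, "result": result})
```

Returning an error object for unknown tools, rather than raising, lets the model see the failure and retry, which matters for a small on-device model that may occasionally emit an invalid tool name.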

Training a Model on Multiple GPUs with Data Parallelism

```python
import dataclasses
import os

import datasets
import tqdm
import tokenizers
import torch
import torch.distributed as dist
import torch.nn as nn
import torch.nn.functional as F
import torch.optim.lr_scheduler as lr_scheduler
from torch...
```
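
The article's training script is truncated here. As a hedged illustration of the idea it implements, the pure-Python sketch below simulates synchronous data parallelism on one machine: each simulated rank holds an identical copy of a one-parameter model, computes a gradient on its own shard of the batch, and an all-reduce that averages the gradients keeps every replica's update identical. The helper names are hypothetical. In PyTorch this averaging is what `DistributedDataParallel` performs during `backward()`, or what `dist.all_reduce` with `dist.ReduceOp.SUM` followed by division by the world size does by hand.

```python
from typing import List, Tuple

Batch = List[Tuple[float, float]]


def local_grad(w: float, shard: Batch) -> float:
    """Gradient of mean((w * x - y)^2) on one rank's shard of the batch."""
    return sum(2.0 * (w * x - y) * x for x, y in shard) / len(shard)


def all_reduce_mean(values: List[float]) -> List[float]:
    """Stand-in for an all-reduce: every rank receives the mean of all values."""
    mean = sum(values) / len(values)
    return [mean] * len(values)


def data_parallel_step(replicas: List[float], batch: Batch, lr: float) -> List[float]:
    """One synchronous SGD step with the batch split evenly across ranks."""
    world_size = len(replicas)
    if len(batch) % world_size:
        raise ValueError("batch size must be divisible by the number of ranks")
    shard = len(batch) // world_size
    shards = [batch[r * shard:(r + 1) * shard] for r in range(world_size)]
    grads = [local_grad(w, s) for w, s in zip(replicas, shards)]  # per-rank backward
    synced = all_reduce_mean(grads)                               # gradient sync
    return [w - lr * g for w, g in zip(replicas, synced)]         # identical updates
```

Because the shards are equal in size, the averaged gradient equals the gradient over the combined batch, so the ranks together behave like a single process training on that larger batch.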

Pricing Structure and Key Features

DarLink is intended for users who treat AI image generation as an individual process of exploration rather than a structured...

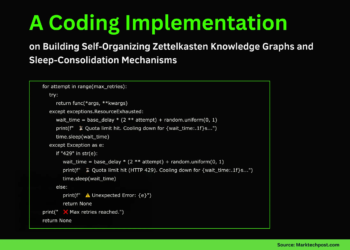

A Coding Implementation on Building Self-Organizing Zettelkasten Knowledge Graphs and Sleep-Consolidation Mechanisms

In this tutorial, we dive into the cutting edge of Agentic AI by building a “Zettelkasten” memory system, a “living”...
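
The tutorial's implementation is not part of this excerpt. As a hypothetical sketch of the data structure the title describes, the code below stores atomic notes as graph nodes, links a new note to existing ones whose keyword sets overlap (Jaccard similarity at or above an assumed threshold), and models "sleep consolidation" with an assumed decay-and-prune pass over link strengths. The class names, thresholds, and consolidation rule are illustrative choices, not the article's mechanism.

```python
from dataclasses import dataclass, field
from typing import Dict, Set, Tuple


@dataclass
class Note:
    note_id: str
    text: str
    keywords: Set[str]


@dataclass
class Zettelkasten:
    notes: Dict[str, Note] = field(default_factory=dict)
    # Undirected links keyed by the sorted id pair, valued by link strength.
    links: Dict[Tuple[str, str], float] = field(default_factory=dict)
    link_threshold: float = 0.2  # assumed minimum Jaccard overlap for a link

    def add(self, note: Note) -> None:
        """Store an atomic note and link it to notes with overlapping keywords."""
        for other in self.notes.values():
            union = note.keywords | other.keywords
            overlap = len(note.keywords & other.keywords) / len(union) if union else 0.0
            if overlap >= self.link_threshold:
                self.links[tuple(sorted((note.note_id, other.note_id)))] = overlap
        self.notes[note.note_id] = note

    def consolidate(self, decay: float = 0.5, prune_below: float = 0.1) -> None:
        """Hypothetical 'sleep' pass: weaken every link, then drop the weak ones."""
        self.links = {pair: s * decay for pair, s in self.links.items()
                      if s * decay >= prune_below}

    def neighbors(self, note_id: str) -> Set[str]:
        """Ids of all notes directly linked to `note_id`."""
        return {b if a == note_id else a for a, b in self.links if note_id in (a, b)}
```

A fuller design would also strengthen links that are used during retrieval, so that consolidation keeps frequently traversed connections and forgets idle ones; the decay-only rule here just keeps the sketch small.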

This AI Paper from Stanford and Harvard Explains Why Most ‘Agentic AI’ Systems Feel Impressive in Demos and then Completely Fall Apart in Real Use

Agentic AI systems sit on top of large language models and connect to tools, memory, and external environments. They already...