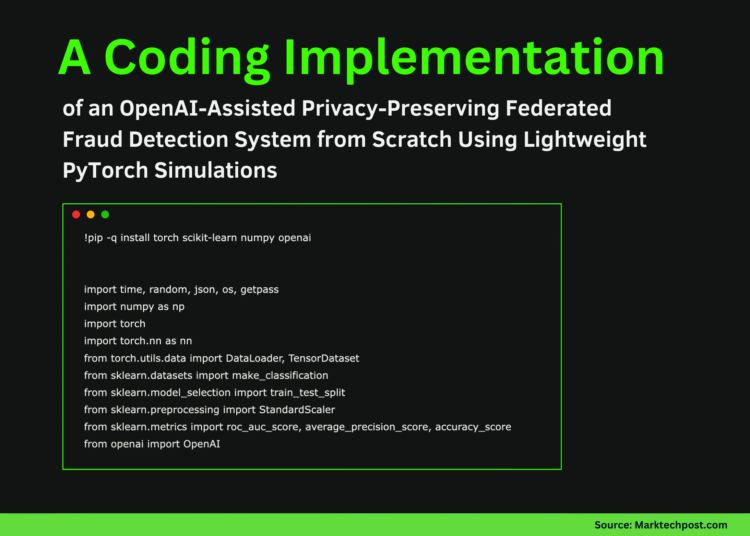

In this tutorial, we simulate a privacy-preserving fraud detection system using federated learning, without relying on heavyweight frameworks or complex infrastructure. We build a clean, CPU-friendly setup that mimics ten independent banks, each training a local fraud-detection model on its own highly imbalanced transaction data. We coordinate these local updates through a simple FedAvg aggregation loop, so that the global model improves while no raw transaction data leaves a client. Alongside this, we integrate OpenAI to support post-training analysis and risk-oriented reporting, showing how federated learning outputs can be translated into decision-ready insights.

    !pip -q install torch scikit-learn numpy openai

    import time, random, json, os, getpass
    import numpy as np
    import torch
    import torch.nn as nn
    from torch.utils.data import DataLoader, TensorDataset
    from sklearn.datasets import make_classification
    from sklearn.model_selection import train_test_split
    from sklearn.preprocessing import StandardScaler
    from sklearn.metrics import roc_auc_score, average_precision_score, accuracy_score
    from openai import OpenAI

    SEED = 7
    random.seed(SEED); np.random.seed(SEED); torch.manual_seed(SEED)

    DEVICE = torch.device("cpu")
    print("Device:", DEVICE)

We set up the execution environment and import all required libraries for data generation, modeling, evaluation, and reporting. We also fix random seeds and the device configuration to ensure our federated simulation remains deterministic and reproducible on CPU.
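As a quick sanity check on the seeding step, the sketch below wraps the three seed calls in a helper and confirms that re-seeding reproduces the same random draws. The names `set_seed` and `draw_sample` are ours, not part of the original notebook, and the check only covers CPU random number generation; it makes no claim about bitwise determinism of every operation.

```python
import random

import numpy as np
import torch


def set_seed(seed: int) -> None:
    """Seed the Python, NumPy, and PyTorch RNGs (hypothetical helper)."""
    random.seed(seed)
    np.random.seed(seed)
    torch.manual_seed(seed)


def draw_sample():
    """Draw one value from each RNG so two runs can be compared."""
    return random.random(), np.random.rand(3), torch.rand(3)


set_seed(7)
first = draw_sample()
set_seed(7)
second = draw_sample()

# All three generators should repeat their draws after re-seeding.
same = (
    first[0] == second[0]
    and np.array_equal(first[1], second[1])
    and torch.equal(first[2], second[2])
)
print("Reproducible draws:", same)
```

Calling such a helper once at the top of the notebook, before any data generation or model construction, keeps the partitioning and the weight initialization tied to the same seed.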

    X, y = make_classification(
        n_samples=60000,
        n_features=30,
        n_informative=18,
        n_redundant=8,
        weights=[0.985, 0.015],
        class_sep=1.5,
        flip_y=0.01,
        random_state=SEED
    )

    X = X.astype(np.float32)
    y = y.astype(np.int64)

    X_train_full, X_test, y_train_full, y_test = train_test_split(
        X, y, test_size=0.2, stratify=y, random_state=SEED
    )

    server_scaler = StandardScaler()
    X_train_full_s = server_scaler.fit_transform(X_train_full).astype(np.float32)
    X_test_s = server_scaler.transform(X_test).astype(np.float32)

    test_loader = DataLoader(
        TensorDataset(torch.from_numpy(X_test_s), torch.from_numpy(y_test)),
        batch_size=1024,
        shuffle=False
    )

We generate a highly imbalanced, credit-card-like fraud dataset and split it into training and test sets. We standardize the server-side data and prepare a global test loader that allows us to consistently evaluate the aggregated model after each federated round.
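With roughly 1.5% fraud cases, accuracy on its own says little about model quality. The sketch below uses made-up labels, not the tutorial's dataset, to illustrate the point: a "model" that assigns the same low score to every transaction still reaches 98.5% accuracy, while ROC AUC and average precision show that it cannot rank fraud above legitimate activity.

```python
import numpy as np
from sklearn.metrics import accuracy_score, average_precision_score, roc_auc_score

# Illustrative labels: 985 legitimate and 15 fraudulent transactions.
y_true = np.array([0] * 985 + [1] * 15)

# A useless "model" that gives every transaction the same low fraud score.
y_prob = np.full(y_true.shape, 0.01)

acc = accuracy_score(y_true, (y_prob >= 0.5).astype(int))
auc = roc_auc_score(y_true, y_prob)
ap = average_precision_score(y_true, y_prob)

print(f"accuracy={acc:.3f}  roc_auc={auc:.3f}  average_precision={ap:.3f}")
```

This is why it helps to track ranking metrics such as ROC AUC and average precision alongside accuracy when the positive class is this rare.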

    def dirichlet_partition(y, n_clients=10, alpha=0.35):
        classes = np.unique(y)
        idx_by_class = [np.where(y == c)[0] for c in classes]
        client_idxs = [[] for _ in range(n_clients)]
        for idxs in idx_by_class:
            np.random.shuffle(idxs)
            # Draw per-client proportions for this class; a small alpha gives skewed shares
            props = np.random.dirichlet(alpha * np.ones(n_clients))
            cuts = (np.cumsum(props) * len(idxs)).astype(int)
            prev = 0
            for cid, cut in enumerate(cuts):
                client_idxs[cid].extend(idxs[prev:cut].tolist())
                prev = cut
        return [np.array(ci, dtype=np.int64) for ci in client_idxs]

    NUM_CLIENTS = 10
    client_idxs = dirichlet_partition(y_train_full, NUM_CLIENTS, 0.35)

    def make_client_split(X, y, idxs):
        Xi, yi = X[idxs], y[idxs]
        # If a client ended up with a single class, add a few samples of the other class
        if len(np.unique(yi)) < 2:
            other = np.where(y == (1 - yi[0]))[0]
            add = np.random.choice(other, size=min(10, len(other)), replace=False)
            Xi = np.concatenate([Xi, X[add]])
            yi = np.concatenate([yi, y[add]])
        return train_test_split(Xi, yi, test_size=0.15, stratify=yi, random_state=SEED)

    client_data = [make_client_split(X_train_full, y_train_full, client_idxs[c]) for c in range(NUM_CLIENTS)]

    # train_test_split returns (X_train, X_val, y_train, y_val), so the
    # parameters are declared in that order to match the unpacked tuple.
    def make_client_loaders(Xtr, Xva, ytr, yva):
        sc = StandardScaler()
        Xtr_s = sc.fit_transform(Xtr).astype(np.float32)
        Xva_s = sc.transform(Xva).astype(np.float32)
        tr = DataLoader(TensorDataset(torch.from_numpy(Xtr_s), torch.from_numpy(ytr)), batch_size=512, shuffle=True)
        va = DataLoader(TensorDataset(torch.from_numpy(Xva_s), torch.from_numpy(yva)), batch_size=512)
        return tr, va

    client_loaders = [make_client_loaders(*cd) for cd in client_data]

We simulate realistic non-IID behavior by partitioning the training data across ten clients using a Dirichlet distribution. We then create independent client-level train and validation loaders, ensuring that each simulated bank operates on its own locally scaled data.
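To get a feel for what the Dirichlet concentration parameter does, the following sketch draws many proportion vectors for ten clients and reports the average share held by the largest client. The helper name `mean_largest_share` and the set of alpha values are our own choices for illustration; only alpha = 0.35 comes from the tutorial.

```python
import numpy as np

rng = np.random.default_rng(0)
n_clients = 10


def mean_largest_share(alpha: float, n_draws: int = 500) -> float:
    """Average share held by the largest client across many Dirichlet draws."""
    props = rng.dirichlet(alpha * np.ones(n_clients), size=n_draws)
    return float(props.max(axis=1).mean())


# Smaller alpha concentrates a class on fewer clients (more heterogeneity).
shares = {alpha: mean_largest_share(alpha) for alpha in (0.1, 0.35, 1.0, 100.0)}

for alpha, share in shares.items():
    print(f"alpha={alpha:>6}: mean largest share = {share:.2f}")
```

As alpha grows, the shares approach an even 10% split per client; as it shrinks, a single client tends to hold most of a class, which is the regime the tutorial's setting of 0.35 leans toward.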

    class FraudNet(nn.Module):
        def __init__(self, in_dim):
            super().__init__()
            self.net = nn.Sequential(
                nn.Linear(in_dim, 64),
                nn.ReLU(),
                nn.Dropout(0.1),
                nn.Linear(64, 32),
                nn.ReLU(),
                nn.Dropout(0.1),
                nn.Linear(32, 1)
            )

        def forward(self, x):
            return self.net(x).squeeze(-1)

    def get_weights(model):
        return [p.detach().cpu().numpy() for p in model.state_dict().values()]

    def set_weights(model, weights):
        keys = list(model.state_dict().keys())
        model.load_state_dict({k: torch.tensor(w) for k, w in zip(keys, weights)}, strict=True)

    @torch.no_grad()
    def evaluate(model, loader):
        model.eval()
        bce = nn.BCEWithLogitsLoss()
        ys, ps, losses = [], [], []
        for xb, yb in loader:
            logits = model(xb)
            losses.append(bce(logits, yb.float()).item())
            ys.append(yb.numpy())
            ps.append(torch.sigmoid(logits).numpy())
        y_true = np.concatenate(ys)
        y_prob = np.concatenate(ps)
        return {
            "loss": float(np.mean(losses)),
            "auc": roc_auc_score(y_true, y_prob),
            "ap": average_precision_score(y_true, y_prob),
            "acc": accuracy_score(y_true, (y_prob >= 0.5).astype(int))
        }

    def train_local(model, loader, lr):
        opt = torch.optim.Adam(model.parameters(), lr=lr)
        bce = nn.BCEWithLogitsLoss()
        model.train()
        for xb, yb in loader:
            opt.zero_grad()
            loss = bce(model(xb), yb.float())
            loss.backward()
            opt.step()

We define the neural network used for fraud detection along with utility functions for training, evaluation, and weight exchange. We implement lightweight local optimization and metric computation to keep client-side updates efficient and easy to reason about.
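The weight-exchange helpers can be checked in isolation. The sketch below copies parameters from one small network into another and verifies that both then produce the same outputs. The `make_model` stand-in and its layer sizes are arbitrary assumptions, and the extra `.copy()` in `get_weights` is our addition: on CPU, `.numpy()` shares memory with the tensor, so copying gives a snapshot that later in-place updates cannot change.

```python
import torch
import torch.nn as nn


def make_model() -> nn.Module:
    # Tiny stand-in network; the layer sizes here are arbitrary.
    return nn.Sequential(nn.Linear(4, 8), nn.ReLU(), nn.Linear(8, 1))


def get_weights(model):
    # .copy() detaches the snapshot from the model's underlying storage.
    return [p.detach().cpu().numpy().copy() for p in model.state_dict().values()]


def set_weights(model, weights):
    keys = list(model.state_dict().keys())
    state = {k: torch.tensor(w) for k, w in zip(keys, weights)}
    model.load_state_dict(state, strict=True)


torch.manual_seed(0)
source, target = make_model(), make_model()  # two different random initializations
x = torch.randn(5, 4)

with torch.no_grad():
    differed_before = not torch.allclose(source(x), target(x))
    set_weights(target, get_weights(source))
    match_after = torch.allclose(source(x), target(x))

print("Outputs differed before copy:", differed_before)
print("Outputs match after copy:", match_after)
```

Pairing weights with keys by position relies on both models having the same architecture, which is why `strict=True` is a useful guard against silent mismatches.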

    def fedavg(weights, sizes):
        total = sum(sizes)
        return [
            sum(w[i] * (s / total) for w, s in zip(weights, sizes))
            for i in range(len(weights[0]))
        ]

    ROUNDS = 10
    LR = 5e-4

    global_model = FraudNet(X_train_full.shape[1])
    global_weights = get_weights(global_model)

    for r in range(1, ROUNDS + 1):
        client_weights, client_sizes = [], []
        for cid in range(NUM_CLIENTS):
            local = FraudNet(X_train_full.shape[1])
            set_weights(local, global_weights)
            train_local(local, client_loaders[cid][0], LR)
            client_weights.append(get_weights(local))
            client_sizes.append(len(client_loaders[cid][0].dataset))

        global_weights = fedavg(client_weights, client_sizes)
        set_weights(global_model, global_weights)

        metrics = evaluate(global_model, test_loader)
        print(f"Round {r}: {metrics}")

We orchestrate the federated learning process by iteratively training local client models and aggregating their parameters using FedAvg. We evaluate the global model after each round to monitor convergence and understand how collective learning improves fraud detection performance.
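The aggregation step is a size-weighted average: each client's parameters are scaled by its share of the total training samples and then summed. A small worked example makes the arithmetic concrete. The two clients, their parameter values, and their sample counts below are invented for illustration; the `fedavg` function has the same body as the one in the tutorial.

```python
import numpy as np


def fedavg(weights, sizes):
    """Size-weighted average of per-client parameter lists."""
    total = sum(sizes)
    return [
        sum(w[i] * (s / total) for w, s in zip(weights, sizes))
        for i in range(len(weights[0]))
    ]


# Two hypothetical clients, each holding one weight matrix and one bias vector.
client_a = [np.array([[1.0, 2.0]]), np.array([0.0])]
client_b = [np.array([[3.0, 6.0]]), np.array([4.0])]

# Client A has 300 samples and client B has 100, so A gets weight 0.75.
averaged = fedavg([client_a, client_b], sizes=[300, 100])

print(averaged[0])  # 0.75 * [1, 2] + 0.25 * [3, 6]
print(averaged[1])  # 0.75 * 0 + 0.25 * 4
```

Because larger clients dominate the average, a bank with many transactions pulls the global model toward its own data distribution, which matters when the partition is as skewed as the Dirichlet split used here.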

    OPENAI_API_KEY = getpass.getpass("Enter OPENAI_API_KEY (input hidden): ").strip()
    if OPENAI_API_KEY:
        os.environ["OPENAI_API_KEY"] = OPENAI_API_KEY

    client = OpenAI()

    summary = {
        "rounds": ROUNDS,
        "num_clients": NUM_CLIENTS,
        "final_metrics": metrics,
        "client_sizes": [len(client_loaders[c][0].dataset) for c in range(NUM_CLIENTS)],
        # client_data[c] is (X_train, X_val, y_train, y_val); index 2 holds the training labels
        "client_fraud_rates": [float(client_data[c][2].mean()) for c in range(NUM_CLIENTS)]
    }

    prompt = (
        "Write a concise internal fraud-risk report.\n"
        "Include executive summary, metric interpretation, risks, and next steps.\n\n"
        + json.dumps(summary, indent=2)
    )

    resp = client.responses.create(model="gpt-5.2", input=prompt)
    print(resp.output_text)

We transform the technical results into a concise analytical report using an external language model. We securely accept the API key via keyboard input and generate decision-oriented insights that summarize performance, risks, and recommended next steps.
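If the summary ever picks up NumPy values that `json.dumps` cannot encode directly, such as `np.float32` scalars or arrays, the prompt construction can be made more defensive. The sketch below is one possible variant under that assumption; `to_jsonable` and `build_report_prompt` are hypothetical helpers, and the numbers in `example_summary` are placeholders, not results from the run above. It builds the prompt only and does not call the API.

```python
import json

import numpy as np


def to_jsonable(value):
    """Convert NumPy scalars and arrays to plain Python types (hypothetical helper)."""
    if isinstance(value, np.generic):
        return value.item()
    if isinstance(value, np.ndarray):
        return value.tolist()
    raise TypeError(f"Cannot serialize {type(value).__name__}")


def build_report_prompt(summary: dict) -> str:
    """Prepend the report instructions to a JSON dump of the summary."""
    header = (
        "Write a concise internal fraud-risk report.\n"
        "Include executive summary, metric interpretation, risks, and next steps.\n\n"
    )
    return header + json.dumps(summary, indent=2, default=to_jsonable)


# Placeholder values for illustration only; these are not results from the run above.
example_summary = {
    "rounds": 10,
    "num_clients": 10,
    "final_metrics": {"auc": np.float32(0.5), "acc": np.float64(0.5)},
    "client_sizes": np.array([100, 200]),
}

prompt = build_report_prompt(example_summary)
print(prompt)
```

Keeping prompt construction in its own function also makes it easy to inspect exactly what is sent to the language model before any request is made.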

In conclusion, we showed how to implement federated learning from first principles in a Colab notebook while keeping the setup stable, interpretable, and realistic. We observed how extreme data heterogeneity across clients influences convergence and why careful aggregation and evaluation are critical in fraud-detection settings. We also extended the workflow by generating an automated risk-team report, demonstrating how analytical results can be translated into decision-ready insights. Finally, we presented a practical blueprint for experimenting with federated fraud models that emphasizes privacy awareness, simplicity, and real-world relevance.


Asif Razzaq is the CEO of Marktechpost Media Inc. As a visionary entrepreneur and engineer, Asif is committed to harnessing the potential of Artificial Intelligence for social good. His most recent endeavor is the launch of an Artificial Intelligence Media Platform, Marktechpost, which stands out for its in-depth coverage of machine learning and deep learning news that is both technically sound and easily understandable by a wide audience. The platform has over 2 million monthly views, illustrating its popularity among audiences.