March 20, 2026


By Cogito Tech.


The rapid adoption of AI in medicine has introduced not only new technical possibilities but also heightened scrutiny from regulatory bodies responsible for clinical safety and effectiveness. Oversight from institutions such as the FDA and European authorities operating under the Medical Device Regulation (MDR) framework has transformed compliance into a structured, evidence-driven process rather than a late-stage formality.

Regulatory approval is no longer just a technical checkpoint on the device roadmap; it is a defining moment that determines whether innovation can translate into real clinical impact.

Why strong models still fail at validation

Launching an AI-enabled Software as a Medical Device (SaMD) means proving to regulators that your system is not only accurate but safe, reliable, and clinically meaningful within its intended use.

However, many teams with strong algorithms stall at the regulatory phase, only to discover that their training and validation datasets lack the documentation depth, demographic representativeness, traceability, and compliance infrastructure required to withstand regulatory examination.

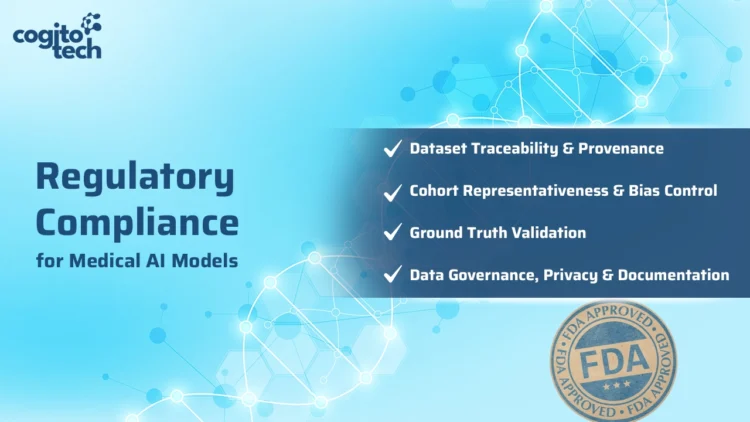

At Cogito Tech, our board-certified multidisciplinary team supports AI developers with compliant, traceable, and medically validated annotated datasets aligned with FDA, HIPAA, and global regulatory expectations across healthcare settings.

Key data-centric challenges in regulatory submissions

Below are the core data-related challenges faced even by advanced AI projects – and how Cogito Tech’s Medical Innovation Hub addresses them.

Audit-ready data and annotation infrastructure

Regulators treat dataset readiness as a primary submission artifact. They require end-to-end traceability and data provenance, including digital audit trails showing:

- Who annotated each data point

- When changes were made

- How dataset versions were controlled

Ad hoc tools, spreadsheets, or loosely managed pipelines rarely meet requirements such as 21 CFR Part 11, which governs electronic records and electronic signatures, including audit trails.
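The following minimal Python sketch shows what a "who, when, which version" trail can look like in code. It is a tamper-evident annotation log in which each entry stores the SHA-256 hash of the previous entry, so editing, reordering, or removing an earlier entry breaks the chain. The `AuditLog` class and its field names are hypothetical illustrations and are not Cogito Tech's tooling. A hash chain alone also does not make a system Part 11 compliant. Compliance additionally requires system validation, access controls, and electronic signatures.

```python
import hashlib
import json
from dataclasses import dataclass, field
from datetime import datetime, timezone

GENESIS_HASH = "0" * 64  # stand-in "previous hash" for the first entry


def _entry_hash(body: dict) -> str:
    # Canonical JSON (sorted keys) so identical content always hashes identically
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest()


@dataclass
class AuditLog:
    """Hypothetical append-only log: each entry embeds the hash of the one before it."""
    entries: list = field(default_factory=list)

    def record(self, annotator: str, item_id: str, action: str, dataset_version: str) -> dict:
        body = {
            "annotator": annotator,                               # who
            "item_id": item_id,                                   # which data point
            "action": action,                                     # what changed
            "dataset_version": dataset_version,                   # under which version
            "timestamp": datetime.now(timezone.utc).isoformat(),  # when (UTC)
            "prev_hash": self.entries[-1]["hash"] if self.entries else GENESIS_HASH,
        }
        entry = {**body, "hash": _entry_hash(body)}
        self.entries.append(entry)
        return entry

    def verify(self) -> bool:
        """Recompute every hash and check each link to the previous entry."""
        prev = GENESIS_HASH
        for entry in self.entries:
            body = {k: v for k, v in entry.items() if k != "hash"}
            if body["prev_hash"] != prev or _entry_hash(body) != entry["hash"]:
                return False
            prev = entry["hash"]
        return True


log = AuditLog()
log.record("annotator_07", "ct_000123", "label:nodule", "v1.2.0")
log.record("reviewer_02", "ct_000123", "approve", "v1.2.0")
print(log.verify())  # True
log.entries[0]["annotator"] = "someone_else"  # simulate an untracked edit
print(log.verify())  # False
```

In production, entries would be written to write-once storage rather than an in-memory list. The chaining idea stays the same.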

Transparent cohort design and fairness documentation

Regulators are moving beyond strong performance metrics to demand transparent cohort design and documented fairness evidence. Teams must clearly define validation cohorts, including inclusion and exclusion criteria. Legacy or loosely sourced datasets rarely meet this standard.
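Inclusion and exclusion criteria are easier to defend when they exist as named, executable rules rather than as undocumented filtering steps. The following is a minimal sketch under that assumption. Each record removed from the cohort is attributed to the first criterion it fails, which yields the per-reason counts typically shown in a cohort flow diagram. The criteria and record fields (`age`, `modality`, `prior_surgery`) are hypothetical examples.

```python
from collections import Counter
from typing import Callable

Record = dict
Criterion = tuple[str, Callable[[Record], bool]]  # (human-readable name, predicate)


def apply_criteria(records: list[Record],
                   inclusion: list[Criterion],
                   exclusion: list[Criterion]) -> tuple[list[Record], Counter]:
    """Build a cohort and count how many records each named criterion removed.

    A record is attributed only to the first criterion it fails, so the counts
    sum to the number of excluded records.
    """
    cohort, removed = [], Counter()
    for rec in records:
        # First inclusion criterion the record does NOT meet, if any
        reason = next((name for name, test in inclusion if not test(rec)), None)
        if reason is None:
            # First exclusion criterion the record DOES meet, if any
            reason = next((name for name, test in exclusion if test(rec)), None)
        if reason is None:
            cohort.append(rec)
        else:
            removed[reason] += 1
    return cohort, removed


# Hypothetical criteria, for illustration only
inclusion = [("age >= 18", lambda r: r["age"] >= 18),
             ("modality is CT", lambda r: r["modality"] == "CT")]
exclusion = [("prior surgery", lambda r: r["prior_surgery"])]
```

The criterion names double as documentation. The same strings that filter the data also appear in the exclusion report.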

Many training datasets lack essential demographic metadata (such as age, sex, or race/ethnicity), which limits the ability to assess bias or clinical generalizability. Public analyses of cleared AI/ML-enabled devices have shown persistent gaps in demographic reporting, which has increased regulatory focus on demographic inclusion.
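A simple first check is to measure how often each demographic field is actually populated before any bias analysis begins. The sketch below is a minimal example. It assumes that missing values are encoded as `None`, an empty string, or `"unknown"`, and it uses hypothetical field names.

```python
from collections import Counter

MISSING = (None, "", "unknown")  # assumption: how missing values are encoded


def demographic_completeness(records, fields=("age", "sex", "race_ethnicity")):
    """Share of records with a usable (non-missing) value for each demographic field."""
    n = len(records)
    return {
        f: (sum(1 for r in records if r.get(f) not in MISSING) / n) if n else 0.0
        for f in fields
    }


def subgroup_counts(records, name):
    """Counts per category of one field, with missing values grouped explicitly."""
    return Counter(r.get(name) if r.get(name) not in MISSING else "missing" for r in records)
```

Reporting the "missing" group explicitly, rather than silently dropping it, makes the gap itself part of the documentation.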

Governing drift in evolving AI systems

Model drift, caused by shifts in real-world operational data, can erode model performance over time, raising safety concerns if ongoing monitoring, performance auditing, and retraining documentation are not rigorously maintained. Additionally, retraining models on new datasets frequently triggers mandatory re-validation and regulatory re-submission under current frameworks – a requirement many AI labs fail to anticipate during early development.
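Ongoing monitoring needs a concrete drift statistic. One commonly used option, chosen here for illustration rather than taken from the article, is the Population Stability Index (PSI). PSI compares the binned distribution of a feature or model score in production against a reference sample. The following minimal sketch assumes that the bin edges are fixed in advance, for example from quantiles of the reference data. Thresholds of roughly 0.1 (moderate shift) and 0.25 (major shift) are often cited, but they are informal heuristics, not regulatory limits.

```python
import bisect
import math


def _bin_shares(values, edges, floor=1e-6):
    """Share of values in each bin; out-of-range values are clamped into the end bins."""
    counts = [0] * (len(edges) - 1)
    for v in values:
        i = bisect.bisect_right(edges, v) - 1
        counts[min(max(i, 0), len(counts) - 1)] += 1
    n = len(values)
    # Floor empty bins at a small value so the log term below stays finite
    return [max(c / n, floor) for c in counts]


def population_stability_index(reference, production, edges):
    """PSI = sum over bins of (p_prod - p_ref) * ln(p_prod / p_ref)."""
    ref = _bin_shares(reference, edges)
    prod = _bin_shares(production, edges)
    return sum((p - r) * math.log(p / r) for r, p in zip(ref, prod))
```

Logging the PSI value, the bin edges, and the reference dataset version together is what turns a monitoring check into auditable evidence.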

Compounding the issue, guidance from the Medical Device Coordination Group (MDCG) offers evolving but still limited pathways for fully autonomous or continuously learning AI systems. As a result, significant model updates are often treated as controlled new releases.

The core challenge lies in establishing robust lifecycle governance that remains continuously aligned with regulatory expectations.

Data quality, explainability, and interoperability as regulatory gatekeepers

Poor data quality is one of the most significant regulatory barriers for AI systems. Regulators require documented evidence of:

- Accuracy and completeness

- Representativeness

- Bias mitigation

- Clinical relevance

- Traceable data provenance

Weak documentation, inconsistent labeling, and fragmented data formats increase scrutiny and undermine submission defensibility.
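One standard way to quantify inconsistent labeling is inter-annotator agreement. The sketch below computes Cohen's kappa for two annotators who labeled the same items. Kappa corrects raw agreement for the agreement expected by chance. The label values are hypothetical, and the choice of kappa here is illustrative: multi-rater settings often use other statistics, such as Fleiss' kappa.

```python
from collections import Counter


def cohens_kappa(labels_a, labels_b):
    """Chance-corrected agreement between two annotators labeling the same items."""
    if len(labels_a) != len(labels_b) or not labels_a:
        raise ValueError("need two equal-length, non-empty label lists")
    n = len(labels_a)
    observed = sum(a == b for a, b in zip(labels_a, labels_b)) / n
    freq_a, freq_b = Counter(labels_a), Counter(labels_b)
    # Agreement expected if both annotators labeled independently at their own rates
    expected = sum(freq_a[c] * freq_b[c] for c in freq_a) / (n * n)
    if expected == 1:
        return 1.0  # both used one identical label everywhere; kappa is undefined, treat as full agreement
    return (observed - expected) / (1 - expected)
```

For example, two annotators who agree on 3 of 4 cases, where chance agreement is 0.5, get a kappa of 0.5. Raw agreement alone (75%) would overstate consistency.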

AI systems must therefore embed strong data governance, lineage tracking, interoperability standards, and transparent documentation into the training data pipeline from the outset.

How Cogito Tech turns regulatory complexity into competitive advantage

Validated, 21 CFR Part 11–aligned data governance and traceability

Through DataSum, our proprietary “Nutrition Facts”-style framework, Cogito Tech provides structured, transparent documentation of dataset quality, composition, and governance.

The framework aligns with requirements such as 21 CFR Part 11 for electronic records and audit trails. HIPAA-compliant, FDA-ready workflows replace ad hoc processes with controlled, review-aligned infrastructure.

By managing the full lifecycle – from pre-labeling and quality control to auditing and version tracking – we ensure end-to-end traceability, clear data provenance, and defensible submission readiness.
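Version tracking only works if a dataset version can be identified unambiguously. The sketch below shows a minimal, generic way to do that. It computes a deterministic fingerprint over every file's relative path and contents, so that adding, removing, renaming, or editing any file produces a new value. The `dataset_fingerprint` function is a hypothetical helper and does not describe how DataSum works internally.

```python
import hashlib
from pathlib import Path


def dataset_fingerprint(root) -> str:
    """Deterministic SHA-256 over the relative path and contents of every file under root."""
    root = Path(root)
    digest = hashlib.sha256()
    # Sort by POSIX-style relative path so the order (and result) is identical on every OS
    files = sorted((p.relative_to(root).as_posix(), p) for p in root.rglob("*") if p.is_file())
    for rel, path in files:
        digest.update(rel.encode("utf-8"))
        digest.update(b"\0")  # separator between the path and its content hash
        digest.update(hashlib.sha256(path.read_bytes()).digest())
    return digest.hexdigest()
```

Recording this value alongside each training run links every model to the exact data it saw. For large imaging volumes, you would hash files in chunks instead of reading each one fully into memory.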

Documented cohort representativeness and defensible fairness validation

Through its global network of multidisciplinary medical experts, Cogito Tech benchmarks and validates datasets across specialties and geographies.

This strengthens cohort credibility across varied clinical settings and patient populations while enabling:

- Transparent demographic representation

- Structured inclusion/exclusion documentation

- Defensible fairness validation

- Alignment with evolving regulatory expectations
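Fairness validation usually starts by reporting performance separately for each subgroup rather than as a single aggregate. The sketch below computes sensitivity (true-positive rate) per subgroup and the largest gap between subgroups. It is a minimal illustration that assumes binary labels and predictions. The appropriate metric and acceptable gap depend on the intended use and should be pre-specified, and the group names are hypothetical.

```python
from collections import defaultdict


def subgroup_sensitivity(y_true, y_pred, groups):
    """Sensitivity per subgroup plus the largest difference between any two subgroups."""
    tp, pos = defaultdict(int), defaultdict(int)
    for t, p, g in zip(y_true, y_pred, groups):
        if t == 1:  # only actual positives count toward sensitivity
            pos[g] += 1
            tp[g] += int(p == 1)
    rates = {g: tp[g] / pos[g] for g in pos}
    gap = max(rates.values()) - min(rates.values()) if rates else 0.0
    return rates, gap
```

A subgroup with very few positive cases yields an unstable rate. Reporting the per-group counts, or confidence intervals, alongside the rates keeps the comparison defensible.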

Controlled lifecycle governance and change management

Cogito Tech mitigates model drift and regulatory risk through FDA-ready workflows and 21 CFR Part 11–compliant processes that ensure structured documentation, traceability, and audit readiness across the AI lifecycle.

Our Innovation Hub supports:

- Continuous dataset monitoring

- Controlled retraining documentation

- Version tracking and benchmarking

- Structured re-validation support

This infrastructure simplifies change management, reduces re-submission friction, and ensures that AI systems remain performance-stable and compliant as they evolve.
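Structured re-validation typically means judging a retrained model against acceptance criteria written down before retraining, rather than deciding afterwards whether the new numbers look good enough. The following minimal sketch assumes that those criteria take the form of a maximum allowed drop per metric relative to the locked baseline. The function name, metrics, and tolerances are hypothetical and are not regulatory thresholds.

```python
def revalidation_gate(baseline: dict, candidate: dict, max_drop: dict) -> dict:
    """Compare a retrained model against the locked baseline using pre-specified limits.

    Returns the failing metrics mapped to (baseline, candidate); an empty dict means
    every metric stayed within its allowed drop.
    """
    failures = {}
    for metric, base in baseline.items():
        new = candidate.get(metric)
        # A missing metric counts as a failure: every pre-specified metric must be reported
        if new is None or new < base - max_drop[metric]:
            failures[metric] = (base, new)
    return failures


# Illustrative numbers only
baseline = {"sensitivity": 0.92, "specificity": 0.88}
max_drop = {"sensitivity": 0.02, "specificity": 0.02}
```

Storing the gate's inputs and output with the model version gives reviewers a record of how each update was accepted or rejected.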

Traceable, standards-aligned data integrity and interoperability

DataSum strengthens provenance documentation and lineage tracking to support regulatory submissions, including FDA 510(k) pathways where applicable.

End-to-end workflows – spanning acquisition, curation, annotation, validation, and auditing – ensure accuracy, completeness, and demographic representativeness across modalities.

Support for formats such as NRRD, NIfTI, DICOM, and multimodal clinical datasets enhances interoperability and submission readiness.

Together, these capabilities embed structured governance, bias control, and traceable documentation directly into the training data pipeline – aligning AI development with regulatory expectations from the start.

Scalable, bias-controlled data creation and external validation

Leveraging a large pool of medical annotators, Cogito Tech scales training data creation, labeling, and QA services while integrating regulatory safeguards against sampling bias, spectrum bias, and demographic under-representation. Through multi-center, multi-geography cohort sourcing and expert-led validation, we ensure datasets reflect real-world clinical diversity and intended-use populations.

Our approach enables:

- Diverse, multi-center cohort development

- Demographic balance across patient subgroups

- Sampling and spectrum bias mitigation

- Independent external validation across healthcare institutions, regions, and timeframes

- Alignment with FDA/EU standards for generalizability and fairness
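When data come from several institutions, one way to test generalization across sites is to hold out each site in turn, developing on the others and evaluating on the unseen one. This is sometimes called internal-external cross-validation. It approximates, but does not replace, validation on a truly independent dataset from another institution or a later time period. The sketch below assumes that each record carries a hypothetical `site` field.

```python
def leave_one_site_out(records, site_key="site"):
    """Yield (held_out_site, development, validation) splits, one per site.

    Every validation set comes entirely from a site absent from its development set.
    """
    for site in sorted({r[site_key] for r in records}):
        development = [r for r in records if r[site_key] != site]
        validation = [r for r in records if r[site_key] == site]
        yield site, development, validation
```

Performance that holds up on every held-out site is stronger evidence of generalizability than a single pooled random split, where patients from the same hospital can appear on both sides.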

Step-by-step guide to preparing AI models for FDA and MDR submission

AI developers building AI, ML, or computer vision (CV) models for healthcare organizations and MedTech companies that need FDA clearance or approval (or CE marking under the MDR) before deployment should take the following steps:

- Collect or create HIPAA- and FDA-compliant multimodal medical datasets.

- Meticulously label the data, as label accuracy is far more critical in healthcare than in other industries.

- Integrate medical expert review into the data pipeline for quality control and validation.

- Embed a clear, robust audit trail that meets FDA expectations for electronic records (21 CFR Part 11).

- Test the models and refine the data to improve performance.

Conclusion

Regulatory approval for AI-enabled medical systems is no longer achieved through model performance alone. It demands structured governance, defensible data quality, transparent cohort design, and continuous lifecycle documentation aligned with FDA requirements and the EU MDR.

Cogito Tech embeds compliance directly into the data lifecycle, transforming training and validation datasets into audit-ready regulatory assets. Through 21 CFR Part 11–aligned traceability, clinically validated annotation pipelines, expert-led cohort governance, and continuously maintained documentation, we reduce submission risk and strengthen technical files for FDA 510(k), De Novo, and MDR pathways.

For AI innovators in healthcare and MedTech, regulatory readiness should not be a late-stage correction. With Cogito Tech, it becomes a built-in competitive advantage from day one.