10 Python One-Liners for Calculating Model Feature Importance


Understanding machine learning models is a vital aspect of building trustworthy AI systems. The understandability of such models rests on two basic properties: explainability and interpretability. The former refers to how well we can describe a model’s “innards” (i.e. how it operates and looks internally), while the latter concerns how easily humans can understand the captured relationships between input features and predicted outputs. As we can see, the difference between them is subtle, but there is a powerful bridge connecting both: feature importance.

This article unveils 10 simple but effective Python one-liners to calculate model feature importance from different perspectives — helping you understand not only how your machine learning model behaves, but also why it made the prediction(s) it did.

1. Built-in Feature Importance in Decision Tree-based Models

Tree-based models like random forests and XGBoost ensembles allow you to easily obtain a list of feature-importance weights using an attribute like:

    importances = model.feature_importances_

Note that model must already hold a fitted estimator. The result is an array of importance values, one per feature. If you want a more self-explanatory version, the following code extends the previous one-liner by pairing each value with its feature name for a dataset like iris, all in one line.

    print("Feature importances:", list(zip(iris.feature_names, model.feature_importances_)))
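Both one-liners above assume that model and iris already exist. As a minimal end-to-end sketch, assuming scikit-learn is installed, the following fits a random forest on the iris dataset and prints the named importances. The choice of `RandomForestClassifier`, `n_estimators=100`, and `random_state=0` is illustrative and not taken from the original.

```python
from sklearn.datasets import load_iris
from sklearn.ensemble import RandomForestClassifier

# Load the data and fit a tree ensemble (hyperparameters are arbitrary choices).
iris = load_iris()
model = RandomForestClassifier(n_estimators=100, random_state=0)
model.fit(iris.data, iris.target)

# Pair each feature name with its impurity-based importance.
named_importances = list(zip(iris.feature_names, model.feature_importances_))
for name, value in named_importances:
    print(f"{name}: {value:.3f}")
```

For scikit-learn tree ensembles these values are impurity-based and normalized to sum to 1. They are computed from the training data, so it is worth cross-checking them against a permutation-based measure.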

2. Coefficients in Linear Models

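Simpler linear models like linear regression and logistic regression also expose feature weights via their learned coefficients. For logistic regression, coef_ is a two-dimensional array with one row of weights per class (a single row in the binary case), so this one-liner takes the absolute weights from the first row. For a single-target linear regression, coef_ is already one-dimensional, so remove the positional index to obtain all weights: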

    importances = abs(model.coef_[0])
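Coefficient magnitudes are only comparable across features when the features share a similar scale. The sketch below shows one possible way to handle this, assuming scikit-learn and NumPy are available: standardize the inputs in a pipeline, then average the absolute coefficients over classes. The pipeline, the iris data, and `max_iter=1000` are assumptions made for illustration.

```python
import numpy as np
from sklearn.datasets import load_iris
from sklearn.linear_model import LogisticRegression
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler

iris = load_iris()

# Standardize features so that coefficient magnitudes are on a common scale.
pipeline = make_pipeline(StandardScaler(), LogisticRegression(max_iter=1000))
pipeline.fit(iris.data, iris.target)

# coef_ has shape (n_classes, n_features) here; average magnitudes over classes.
coef = pipeline.named_steps["logisticregression"].coef_
importances = np.abs(coef).mean(axis=0)
print(dict(zip(iris.feature_names, importances.round(3))))
```

Averaging over classes is only one way to summarize a multiclass model. Inspecting each row of `coef_` separately shows which features matter for which class.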

3. Sorting Features by Importance

Similar to the enhanced version of number 1 above, this useful one-liner can be used to rank features by their importance values in descending order: an excellent glimpse of which features are the strongest or most influential contributors to model predictions.

    sorted_features = sorted(zip(features, importances), key=lambda x: x[1], reverse=True)
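As a small usage sketch, here is the same one-liner applied to made-up values. The feature names and importance numbers are hypothetical and only serve to show the shape of the result.

```python
# Hypothetical feature names and importance values, for illustration only.
features = ["sepal length", "sepal width", "petal length", "petal width"]
importances = [0.10, 0.03, 0.45, 0.42]

# Rank (name, importance) pairs from most to least important.
sorted_features = sorted(zip(features, importances), key=lambda x: x[1], reverse=True)

# Keep only the names of the two highest-ranked features.
top_two = [name for name, _ in sorted_features[:2]]
print(top_two)
```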

4. Model-Agnostic Permutation Importance

Permutation importance measures a feature’s importance by randomly shuffling its values and observing how much a chosen performance metric (e.g. accuracy or error) degrades as a result. This model-agnostic function from scikit-learn repeats the shuffling several times per feature and reports the mean drop in score. Passing held-out data as X and y generally gives a more reliable picture than reusing the training set.

    from sklearn.inspection import permutation_importance
    result = permutation_importance(model, X, y).importances_mean
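To make the mechanism concrete, here is a minimal from-scratch sketch in plain Python. It is not scikit-learn's implementation. The helper names (`accuracy`, `permutation_importance_sketch`, `toy_predict`) and the toy data are hypothetical, and the "model" is a hand-written rule that deliberately ignores the second feature.

```python
import random

def accuracy(predict, rows, labels):
    """Fraction of rows for which predict(row) equals the label."""
    return sum(predict(r) == y for r, y in zip(rows, labels)) / len(rows)

def permutation_importance_sketch(predict, rows, labels, n_repeats=10, seed=0):
    """Mean accuracy drop per feature after shuffling that feature's column."""
    rng = random.Random(seed)
    baseline = accuracy(predict, rows, labels)
    drops = []
    for j in range(len(rows[0])):
        total = 0.0
        for _ in range(n_repeats):
            # Shuffle column j only, leaving all other columns untouched.
            column = [r[j] for r in rows]
            rng.shuffle(column)
            shuffled = [r[:j] + [v] + r[j + 1:] for r, v in zip(rows, column)]
            total += baseline - accuracy(predict, shuffled, labels)
        drops.append(total / n_repeats)
    return drops

# Toy "model" (hypothetical): it only looks at feature 0 and ignores feature 1.
def toy_predict(row):
    return int(row[0] > 0.5)

data_rng = random.Random(42)
rows = [[data_rng.random(), data_rng.random()] for _ in range(200)]
labels = [toy_predict(r) for r in rows]

drops = permutation_importance_sketch(toy_predict, rows, labels)
print(drops)
```

Shuffling the ignored feature leaves every prediction unchanged, so its importance is zero, while shuffling the feature the rule depends on costs a substantial share of accuracy.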

5. Mean Loss of Accuracy in Cross-Validation Permutations

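This compact snippet tests permutations in the context of cross-validation, analyzing how shuffling each feature impacts model performance across K folds. It first computes a baseline cross-validated score and then subtracts the mean score obtained with each feature shuffled, so larger values indicate more important features. Because cross_val_score refits the model on every fold, the shuffled feature is also used during training here, which differs from standard permutation importance, where shuffling happens only at evaluation time. X is assumed to be a pandas DataFrame.

    import numpy as np
    from sklearn.model_selection import cross_val_score
    baseline = cross_val_score(model, X, y).mean()
    importances = [baseline - cross_val_score(model, X.assign(**{f: np.random.permutation(X[f])}), y).mean() for f in X.columns]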

6. Permutation Importance Visualizations with Eli5

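ELI5, short for “Explain like I’m 5 (years old)”, is a Python library for explaining machine learning models. Its show_weights function renders a color-coded HTML table of feature weights, which makes it particularly handy in notebooks, and it works with trained linear and tree-based models alike. Note that calling it directly on a fitted model, as in the following line, displays the model’s built-in weights or importances. To display permutation importances instead, first fit ELI5’s PermutationImportance wrapper (from eli5.sklearn) around the model and pass that wrapper to show_weights.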

    import eli5
    eli5.show_weights(model, feature_names=features)

7. Global SHAP Feature Importance

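SHAP is a popular and powerful library for digging deeper into model feature importance. It can be used to calculate the mean absolute SHAP value of each feature, a common global importance indicator, under a theoretically grounded measurement approach. The SHAP framework itself is model-agnostic, but the TreeExplainer used here is specific to tree-based models. The code assumes a model with a single output (such as a regressor), for which shap_values is a two-dimensional array with one row per sample and one column per feature.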

    import numpy as np
    import shap
    shap_values = shap.TreeExplainer(model).shap_values(X)
    importances = np.abs(shap_values).mean(0)
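SHAP values build on Shapley values from cooperative game theory. The plain-Python sketch below computes exact Shapley values for a tiny hypothetical function by enumerating all feature subsets. It is a simplified illustration and not how the shap library works internally: "absent" features are replaced by a single assumed baseline vector, and the names `shapley_values` and `toy_model` are invented for this example.

```python
from itertools import combinations
from math import factorial

def shapley_values(f, x, baseline):
    """Exact Shapley values of f at x; 'absent' features take baseline values."""
    n = len(x)

    def value(subset):
        # Evaluate f with features in `subset` taken from x, the rest from baseline.
        return f([x[i] if i in subset else baseline[i] for i in range(n)])

    phi = []
    for i in range(n):
        others = [j for j in range(n) if j != i]
        total = 0.0
        for size in range(n):
            # Shapley weight for coalitions of this size.
            weight = factorial(size) * factorial(n - size - 1) / factorial(n)
            for subset in combinations(others, size):
                s = set(subset)
                total += weight * (value(s | {i}) - value(s))
        phi.append(total)
    return phi

# Hypothetical toy model with an interaction between features 1 and 2.
def toy_model(v):
    return 2.0 * v[0] + v[1] * v[2]

x = [1.0, 2.0, 3.0]
baseline = [0.0, 0.0, 0.0]
phi = shapley_values(toy_model, x, baseline)
print(phi)
```

The attributions add up to the difference between the prediction at `x` and the prediction at the baseline, and the interaction term is split evenly between the two features involved. Averaging the absolute attributions over many rows gives the global importance computed by the one-liner above.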

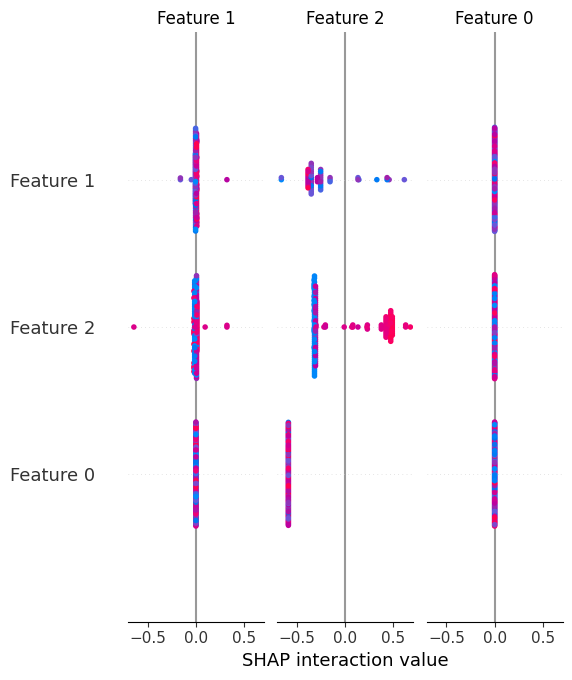

8. Summary Plot of SHAP Values

Unlike global SHAP feature importances, the summary plot provides not only the global importance of features in a model, but also their directions, visually helping understand how feature values push predictions upward or downward.

|

shap.summary_plot(shap_values, X) |

Let’s look at a visual example of result obtained:

9. Single-Prediction Explanations with SHAP

One particularly attractive aspect of SHAP is that it helps explain not only the overall model behavior and feature importances, but also how features specifically influence a single prediction. In other words, we can reveal or decompose an individual prediction, explaining how and why the model yielded that specific output.

    shap.force_plot(shap.TreeExplainer(model).expected_value, shap_values[0], X.iloc[0])

10. Model-Agnostic Feature Importance with LIME

LIME is an alternative library to SHAP that generates local surrogate explanations. Rather than using one or the other, these two libraries complement each other well, helping better approximate feature importance around individual predictions. This example does so for a previously trained logistic regression model.

    from lime.lime_tabular import LimeTabularExplainer
    # Pass the row as a NumPy array, matching the array used to build the explainer.
    exp = LimeTabularExplainer(X.values, feature_names=features).explain_instance(X.iloc[0].values, model.predict_proba)
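The idea of a local surrogate can be sketched with NumPy alone: perturb the instance, weight the perturbed samples by their proximity to it, and fit a weighted linear model whose slopes act as local feature weights. This is a simplified illustration under stated assumptions, not the LIME library's actual procedure. The function `local_surrogate`, its parameters, and the `black_box` model are hypothetical.

```python
import numpy as np

def local_surrogate(predict, x, n_samples=500, scale=0.5, kernel_width=1.0, seed=0):
    """Fit a proximity-weighted linear model to predict() around x; return its slopes."""
    rng = np.random.default_rng(seed)
    # Perturb the instance with Gaussian noise.
    samples = x + rng.normal(0.0, scale, size=(n_samples, x.size))
    targets = predict(samples)
    # Weight samples by closeness to x (exponential kernel).
    distances = np.linalg.norm(samples - x, axis=1)
    weights = np.exp(-(distances ** 2) / kernel_width ** 2)
    # Weighted least squares via rescaled ordinary least squares.
    design = np.hstack([np.ones((n_samples, 1)), samples])
    root_w = np.sqrt(weights)[:, None]
    coef, *_ = np.linalg.lstsq(design * root_w, targets * root_w[:, 0], rcond=None)
    return coef[1:]  # drop the intercept

# Hypothetical black box: nonlinear in feature 0, linear in feature 1, ignores feature 2.
def black_box(data):
    return data[:, 0] ** 2 + 3.0 * data[:, 1]

x = np.array([1.0, 0.0, 2.0])
local_weights = local_surrogate(black_box, x)
print(local_weights.round(2))
```

Around this instance the surrogate's slopes should land close to the local gradient of the black box: roughly 2 for the first feature, 3 for the second, and 0 for the ignored third. The exact values depend on the sampling seed and kernel width.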

Wrapping Up

This article unveiled 10 effective Python one-liners to help better understand, explain, and interpret machine learning models with a focus on feature importance. With the aid of these tools, your model no longer has to be a mysterious black box.